Privacy

Local LLM Practical Guide - Building a Private AI Environment with Ollama/Llama.cpp

A comprehensive guide to setting up a local LLM environment with Ollama and Llama.cpp. Learn practical techniques for balancing privacy and cost while running AI offline.

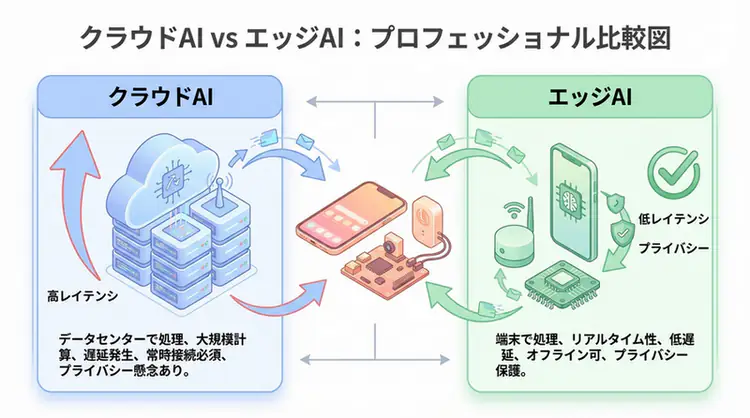

Edge AI Practical Guide - Device Deployment of Small Language Models

Run AI locally on smartphones, IoT devices, and embedded systems using small language models (SLMs) with 1B-8B parameters, such as Phi-3, Gemma, and Qwen2. Covers quantization techniques, privacy protection, and implementation methods for achieving 13 ms latency.