Langfuse

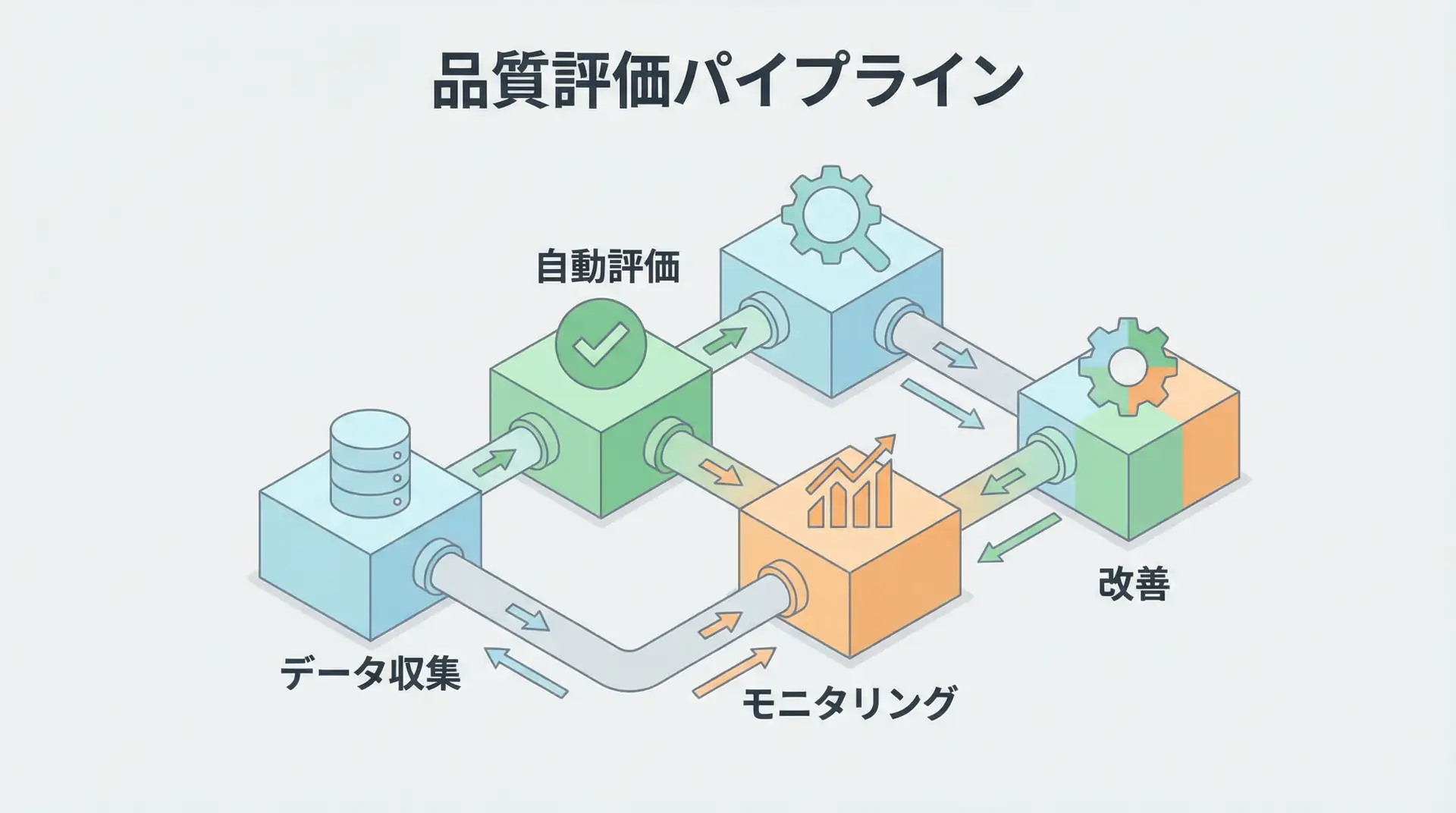

AI Agent Evaluation & Monitoring - Practical Guide to Quantifying Quality and Improving Reliability

The biggest barrier to deploying AI agents in production is quality. This article explains a systematic 6-step framework for objectively evaluating AI agent quality and continuously improving it, based on LangChain's latest research, and covers practical tools such as Maxim AI and Langfuse.

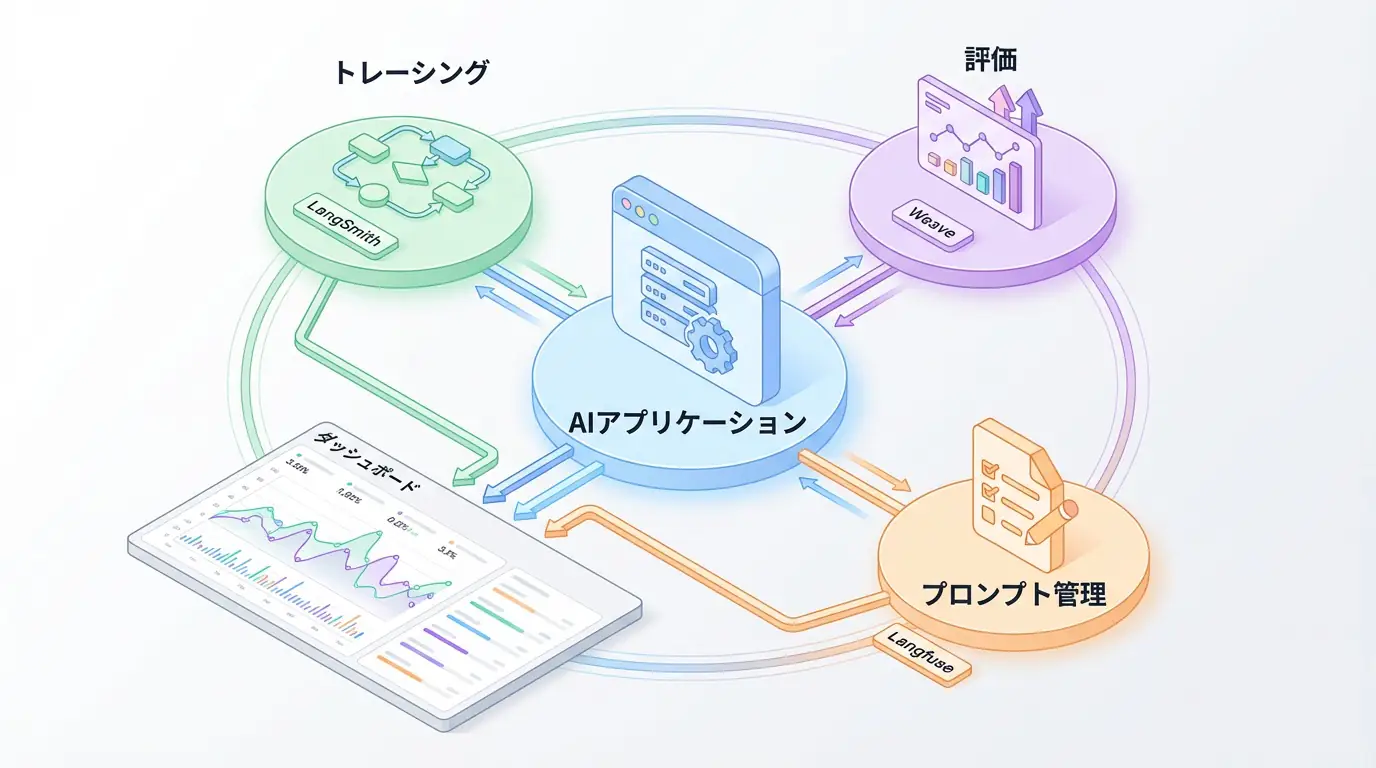

LLMOps & AI Observability Complete Guide - Production Monitoring and Debugging

A comprehensive comparison of major LLMOps and AI observability tools, including LangSmith, Weights & Biases Weave, and Langfuse, with practical guidance on optimizing production LLM applications through tracing, evaluation, and prompt management.