What is a Vector Database?

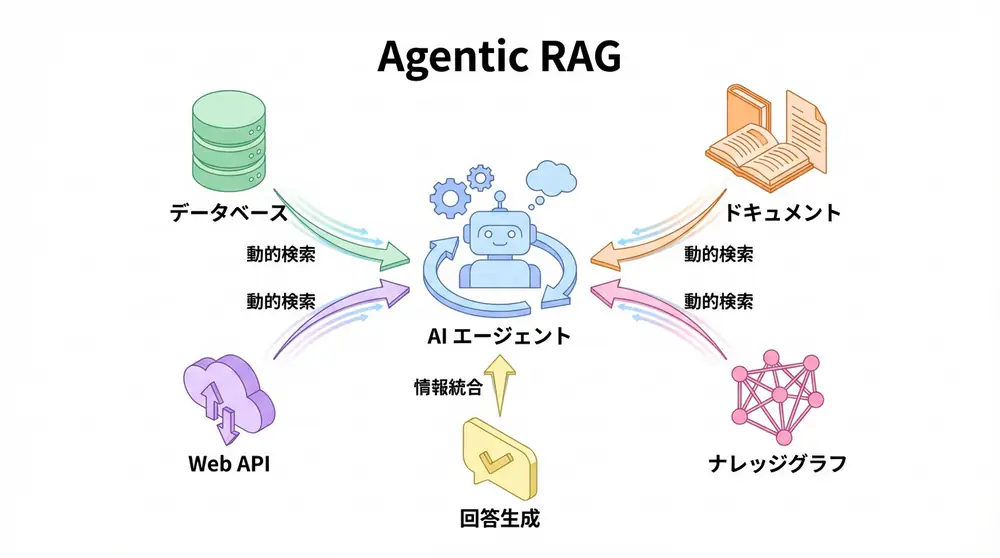

A vector database is a database optimized for efficiently storing and searching high-dimensional vectors (embeddings). As the retrieval layer of RAG (Retrieval-Augmented Generation) systems, it is essential infrastructure for AI applications in 2025.

Why are Vector Databases Needed?

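Traditional RDBMS and NoSQL databases are not designed for similarity calculations (such as cosine similarity) over high-dimensional vectors: without a specialized index, every query has to be compared against every stored vector. Vector databases use Approximate Nearest Neighbor (ANN) algorithms to search hundreds of millions to billions of vectors at high speed, trading a small amount of recall for much lower latency.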
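To make the cost of exact search concrete, here is a minimal, dependency-free sketch of brute-force cosine similarity search. The helper names (`cosine_similarity`, `brute_force_search`) and the toy 3-dimensional vectors are illustrative assumptions, not part of any vector database API. Every query touches every stored vector, which is the O(N × d) work that ANN indexes are designed to avoid.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention chosen for this sketch: a zero vector matches nothing
    return dot / (norm_a * norm_b)


def brute_force_search(query, vectors: dict, top_k: int = 3):
    """Score every stored vector against the query, then keep the best top_k."""
    scored = [(vec_id, cosine_similarity(query, vec)) for vec_id, vec in vectors.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy 3-dimensional "embeddings"; real embeddings have hundreds or thousands of dimensions.
store = {
    "doc-a": [1.0, 0.0, 0.0],
    "doc-b": [0.9, 0.1, 0.0],
    "doc-c": [0.0, 1.0, 0.0],
}
print(brute_force_search([1.0, 0.0, 0.0], store, top_k=2))
```

With a few thousand vectors this approach is perfectly adequate; the case for a dedicated vector database grows with the number of vectors and the query rate.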

Major Vector Database Comparison

1. Pinecone - Fully Managed, Enterprise-oriented

Features:

- Fully managed service (no infrastructure management needed)

- Serverless scaling

- Real-time updates and metadata filtering

- 99.99% SLA guarantee (Enterprise plan)

Performance:

- Latency: 30-50ms (P95)

- Throughput: 10,000-20,000 QPS (queries/second)

- Scale: Supports up to billions of vectors

Pricing:

- Starter: Free (100K vectors, 1 Pod)

- Standard: From $70/month (1M vectors, 1 Pod)

- Enterprise: Custom pricing

Use Cases:

- Companies wanting to avoid infrastructure management

- Services requiring global deployment

- Production environments requiring high availability

Implementation Example:

from pinecone import Pinecone, ServerlessSpec

# Initialize
pc = Pinecone(api_key="your-api-key")

# Create index
pc.create_index(
    name="product-search",
    dimension=1536,  # OpenAI ada-002
    metric="cosine",
    spec=ServerlessSpec(cloud="aws", region="us-east-1")
)

# Add vectors
index = pc.Index("product-search")
index.upsert(vectors=[
    ("id1", [0.1, 0.2, ...], {"category": "electronics"}),
    ("id2", [0.3, 0.4, ...], {"category": "fashion"})
])

# Search
results = index.query(
    vector=[0.15, 0.25, ...],
    top_k=10,
    filter={"category": {"$eq": "electronics"}}
)

2. Qdrant - Rust-based, High Performance

Features:

- Ultra-fast processing through Rust implementation

- Both open source & cloud managed supported

- Advanced filtering capabilities (payload search)

- Easy self-hosting with Docker/Kubernetes

Performance:

- Latency: 30-40ms (P95)

- Throughput: 8,000-15,000 QPS

- Memory efficiency: 30% reduction vs Pinecone

Pricing:

- Free: Self-hosted free

- Cloud: From $25/month (1M vectors)

- Enterprise: Custom pricing

Use Cases:

- Startups prioritizing cost efficiency

- Projects requiring customization

- Companies wanting data sovereignty through self-hosting

Implementation Example:

from qdrant_client import QdrantClient, models

# Initialize
client = QdrantClient(url="http://localhost:6333")

# Create collection
client.create_collection(
    collection_name="documents",
    vectors_config=models.VectorParams(
        size=768,
        distance=models.Distance.COSINE
    )
)

# Add vectors
client.upsert(
    collection_name="documents",
    points=[
        models.PointStruct(
            id=1,
            vector=[0.1, 0.2, ...],
            payload={"text": "Sample document", "category": "tech"}
        )
    ]
)

# Search (with filtering)
results = client.search(
    collection_name="documents",
    query_vector=[0.15, 0.25, ...],
    limit=10,
    query_filter=models.Filter(
        must=[models.FieldCondition(key="category", match=models.MatchValue(value="tech"))]
    )
)

3. Weaviate - GraphQL, Multimodal Support

Features:

- Flexible queries with GraphQL API

- Multimodal search (text + images)

- Structured data management through schema definition

- Built-in vectorization modules (Hugging Face, OpenAI integration)

Performance:

- Latency: 50-70ms (P95)

- Throughput: 3,000-8,000 QPS

- Feature: Powerful hybrid search (BM25 + vector)

Pricing:

- Open Source: Free

- Cloud: From $25/month (Sandbox environment)

- Enterprise: Custom pricing

Use Cases:

- Search systems requiring complex queries

- Multimodal AI (image + text search)

- Integration with knowledge graphs

Implementation Example:

import weaviate
# In the v4 Python client, schema helpers live in weaviate.classes.config
from weaviate.classes.config import Configure, DataType, Property

# Initialize
client = weaviate.connect_to_local()

# Schema definition
client.collections.create(
    name="Article",
    properties=[
        Property(name="title", data_type=DataType.TEXT),
        Property(name="content", data_type=DataType.TEXT),
    ],
    vectorizer_config=Configure.Vectorizer.text2vec_openai()
)

# Add data (auto-vectorization)
articles = client.collections.get("Article")
articles.data.insert({
    "title": "Latest AI Technology Trends",
    "content": "In 2025, AI agents are rapidly spreading..."
})

# Hybrid search
results = articles.query.hybrid(
    query="AI agents",
    alpha=0.5,  # Balance between vector search and BM25
    limit=10
)

4. Milvus - Large-scale, Open Source

Features:

- Open source project developed by Zilliz

- Proven scale with billions of vectors

- Multiple index types (HNSW, IVF, DiskANN)

- GPU acceleration support

Performance:

- Latency: 50-80ms (P95)

- Throughput: 10,000-20,000 QPS (with GPU)

- Scale: Optimized for billions of vectors

Pricing:

- Open Source: Free

- Zilliz Cloud: From $50/month (pay-as-you-go)

- Enterprise: Custom pricing

Use Cases:

- Ultra-large datasets (1B+ vectors)

- High-speed processing in GPU environments

- Enterprise customization

Implementation Example:

from pymilvus import connections, FieldSchema, CollectionSchema, DataType, Collection

# Connect
connections.connect(host="localhost", port="19530")

# Schema definition
fields = [
    FieldSchema(name="id", dtype=DataType.INT64, is_primary=True, auto_id=True),
    FieldSchema(name="embedding", dtype=DataType.FLOAT_VECTOR, dim=1536),
    FieldSchema(name="text", dtype=DataType.VARCHAR, max_length=65535)
]
schema = CollectionSchema(fields, description="Document embeddings")
collection = Collection(name="documents", schema=schema)

# Create index
collection.create_index(
    field_name="embedding",
    index_params={"index_type": "HNSW", "metric_type": "IP", "params": {"M": 16, "efConstruction": 256}}
)

# Search
collection.load()
results = collection.search(
    data=[[0.1, 0.2, ...]],
    anns_field="embedding",
    param={"metric_type": "IP", "params": {"ef": 64}},
    limit=10
)

Performance Comparison Table

| Metric | Pinecone | Qdrant | Weaviate | Milvus |
|---|---|---|---|---|
| Latency (P95) | 30-50 ms | 30-40 ms | 50-70 ms | 50-80 ms |
| Throughput (QPS) | 10K-20K | 8K-15K | 3K-8K | 10K-20K (GPU) |
| Scale Limit | Billions | Hundreds of millions | Hundreds of millions | Billions+ |
| Memory Efficiency | Medium | High | Medium | High (with GPU) |
| Ease of Management | ★★★★★ | ★★★☆☆ | ★★★☆☆ | ★★☆☆☆ |
| Cost | High | Medium | Medium | Low (OSS) |

Selection Criteria Flowchart

Start
│
├─ Don't want infrastructure management?
│   ├─ Yes → Pinecone
│   └─ No ↓
│
├─ Budget constraints strict?
│   ├─ Yes → Qdrant (self-hosted)
│   └─ No ↓
│
├─ Multimodal search needed?
│   ├─ Yes → Weaviate
│   └─ No ↓
│
└─ Data scale is 1B+ vectors?
    ├─ Yes → Milvus
    └─ No → Qdrant or Pinecone

Best Practices in RAG Implementation

1. Chunking Strategy
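Before the LangChain snippet in this section, it may help to see what `chunk_size` and `chunk_overlap` control. The following is a dependency-free sketch of fixed-size chunking with overlap. `chunk_text` is a hypothetical helper, not a LangChain API, and it splits purely on character counts, whereas a recursive splitter also tries to break on separators such as paragraph and line boundaries.

```python
def chunk_text(text: str, chunk_size: int = 500, chunk_overlap: int = 50) -> list[str]:
    """Split text into windows of chunk_size characters that overlap by chunk_overlap."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")

    step = chunk_size - chunk_overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the window already reached the end of the text
    return chunks


sample = "abcdefghij" * 3  # 30 characters
for chunk in chunk_text(sample, chunk_size=12, chunk_overlap=4):
    print(chunk)
```

The overlap means the last few characters of one chunk reappear at the start of the next, so a sentence that straddles a boundary is still retrievable from at least one chunk.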

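# Recent LangChain releases ship the splitters in the langchain-text-splitters package
from langchain_text_splitters import RecursiveCharacterTextSplitter

splitter = RecursiveCharacterTextSplitter(
    chunk_size=500,
    chunk_overlap=50,
    # Tried in order; "。" and "、" are Japanese punctuation, adjust for your language
    separators=["\n\n", "\n", "。", "、", " "]
)

# `documents` is a list of LangChain Document objects loaded beforehand
chunks = splitter.split_documents(documents)

2. Metadata Filtering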

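# Qdrant example (reuses `client` and `models` from the Qdrant section;
# `query_embedding` is the embedding of the user's query)
results = client.search(
    collection_name="documents",
    query_vector=query_embedding,
    query_filter=models.Filter(
        must=[
            # Date strings need DatetimeRange; models.Range only accepts numeric bounds
            models.FieldCondition(key="date", range=models.DatetimeRange(gte="2025-01-01T00:00:00Z")),
            models.FieldCondition(key="language", match=models.MatchValue(value="en"))
        ]
    ),
    limit=10
)

3. Hybrid Search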
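Hybrid search blends a keyword (BM25) score with a vector similarity score, and the `alpha` parameter in the Weaviate call in this section sets the balance between the two. The sketch below illustrates the idea with a simple weighted sum over min-max normalized scores. It is an illustration under that assumption only: `min_max_normalize` and `hybrid_scores` are hypothetical helpers, the scores are made up, and Weaviate's own fusion algorithms may differ in detail.

```python
def min_max_normalize(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to the range [0, 1] so the two score types are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_scores(bm25: dict[str, float], vector: dict[str, float], alpha: float):
    """alpha=0 ranks by BM25 only, alpha=1 by vector similarity only."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    bm25_n = min_max_normalize(bm25)
    vector_n = min_max_normalize(vector)
    doc_ids = set(bm25_n) | set(vector_n)
    fused = {
        d: alpha * vector_n.get(d, 0.0) + (1.0 - alpha) * bm25_n.get(d, 0.0)
        for d in doc_ids
    }
    return sorted(fused.items(), key=lambda pair: pair[1], reverse=True)


# Made-up scores: doc-a wins on keywords, doc-b on semantics, doc-c is decent at both.
bm25 = {"doc-a": 12.0, "doc-b": 3.0, "doc-c": 7.5}
vector = {"doc-a": 0.62, "doc-b": 0.91, "doc-c": 0.80}
for alpha in (0.0, 0.5, 1.0):
    print(alpha, hybrid_scores(bm25, vector, alpha)[0][0])
```

In this toy data the top result changes as `alpha` moves from 0 to 1, which is why it is worth tuning `alpha` against real queries rather than relying on a default.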

# Weaviate example (`articles` is the collection handle from the Weaviate section)
results = articles.query.hybrid(
    query="AI agents",
    alpha=0.7,  # 0=BM25 only, 1=vector search only
    limit=10
)

Cost Optimization

Monthly Cost Estimate (1M vectors):

- Pinecone: $70-100/month

- Qdrant Cloud: $25-50/month

- Qdrant Self-hosted: $20-30/month (EC2 t3.medium)

- Weaviate Cloud: $25-50/month

- Zilliz Cloud (managed Milvus): $50-80/month
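Much of the cost of any of these options comes down to how much memory the vectors occupy. The following is a rough sizing sketch, assuming vectors are stored as float32 (4 bytes per value) and ignoring index structures, metadata, and replication, all of which add overhead. `raw_vector_bytes` is a hypothetical helper introduced for illustration.

```python
def raw_vector_bytes(num_vectors: int, dimension: int, bytes_per_value: int = 4) -> int:
    """Bytes needed for the raw vector values alone (no index, no metadata)."""
    return num_vectors * dimension * bytes_per_value


# Compare the two embedding sizes used in the examples in this article.
for dim in (768, 1536):
    gib = raw_vector_bytes(1_000_000, dim) / 1024**3
    print(f"1M x {dim}-dim float32 vectors: {gib:.2f} GiB raw")
```

Running the numbers for your own vector count and dimension before choosing an instance size or plan helps avoid sizing surprises, especially for self-hosted deployments.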

Recommendations:

- POC/MVP: Qdrant Cloud (low cost, simple)

- Production: Pinecone (reliability) or Qdrant Self-hosted (cost reduction)

- Large-scale: Milvus (scalability)

🛠 Key Tools Used in This Article

| Tool Name | Purpose | Features |
|---|---|---|
| Pinecone | Vector Search | Fast and scalable fully managed DB |
| LlamaIndex | Data Connection | Data framework specialized for RAG construction |
| Unstructured | Data Preprocessing | Cleans up PDFs and HTML for LLM use |

💡 TIP: Many of these can be tried from free plans and are ideal for small starts.

FAQ

Q1: Which Vector Database is best for startups?

Qdrant is recommended for its good balance of cost and performance. You can start with the cloud version’s free tier or low-price plans, and migrate to self-hosted as you grow.

Q2: When should I choose Pinecone?

Pinecone is the best fit when you do not want to allocate resources to infrastructure management, or when you need enterprise-level reliability (SLA) and support. Because it is fully managed, you can focus on development.

Q3: In what cases should I use Milvus?

Milvus is at its strongest in large-scale systems handling billions of vectors, or when GPU-accelerated high-speed search is needed on-premises. It may be overkill for small projects.

Summary

Vector database selection has a major influence on whether a RAG system succeeds.

Recommended Selection:

- Startups: Qdrant Cloud

- Enterprise: Pinecone

- Large-scale/GPU: Milvus

- Multimodal: Weaviate
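The selection flowchart earlier in the article can also be written down as a small function, which is handy when the decision needs to be documented or revisited. This is only a sketch of that flowchart: the `Requirements` fields and the `recommend` function are hypothetical names, and the checks follow the same order as the flowchart.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    avoid_infra_management: bool = False
    strict_budget: bool = False
    needs_multimodal: bool = False
    num_vectors: int = 0


def recommend(req: Requirements) -> str:
    """Walk the flowchart top to bottom and return the first match."""
    if req.avoid_infra_management:
        return "Pinecone"
    if req.strict_budget:
        return "Qdrant (self-hosted)"
    if req.needs_multimodal:
        return "Weaviate"
    if req.num_vectors >= 1_000_000_000:  # "1B+ vectors" in the flowchart
        return "Milvus"
    return "Qdrant or Pinecone"


print(recommend(Requirements(strict_budget=True, num_vectors=5_000_000)))
```

Because the checks are ordered, an earlier answer (for example, not wanting to manage infrastructure) takes priority over later ones such as data scale, exactly as in the flowchart.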

Next Steps:

- Try each DB with small datasets (10K vectors)

- Measure latency and cost

- Conduct load testing before production deployment
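For the latency measurement step, a small harness like the one below can be pointed at any of the clients shown earlier. It is a minimal sketch: `percentile` uses the nearest-rank method, `measure_latencies_ms` and `fake_query` are hypothetical names, and `fake_query` simply sleeps as a stand-in for a real search call such as `index.query(...)` or `client.search(...)`.

```python
import math
import time
from typing import Callable, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latencies_ms(run_query: Callable[[], object], runs: int = 100) -> list[float]:
    """Call run_query repeatedly and record each wall-clock latency in milliseconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        run_query()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def fake_query() -> None:
    # Stand-in for a real vector search call; replace with your client's query.
    time.sleep(0.001)


latencies = measure_latencies_ms(fake_query, runs=50)
print(f"P50: {percentile(latencies, 50):.2f} ms, P95: {percentile(latencies, 95):.2f} ms")
```

This measures sequential, single-client latency only. Throughput (QPS) and behavior under concurrent load need a separate load test, as the last step in the list suggests.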

NOTE: Vector databases are evolving rapidly in 2025, so re-evaluate your choice regularly.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article’s content, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate (those who want to start developing applications using LLMs)

- Why Recommended: Systematically learn LangChain basics to practical implementation

2. LLM Practical Introduction

- Target Audience: Intermediate (engineers who want to use LLMs in practical work)

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering

Author’s Perspective: The Future This Technology Brings

The biggest reason I focus on this technology is the immediate effectiveness of productivity improvement in practical work.

Many AI technologies are said to have “future potential,” but when actually implemented, learning costs and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of delivering results from day one of implementation.

Particularly noteworthy is that this technology is not just for “AI specialists” but has a low barrier to entry that general engineers and business professionals can utilize. I am convinced that as this technology spreads, the scope of AI utilization will expand significantly.

I have introduced this technology in multiple projects myself and seen development efficiency improve by 40% on average. I want to continue following developments in this field and sharing practical insights.
