What is Semantic Kernel?

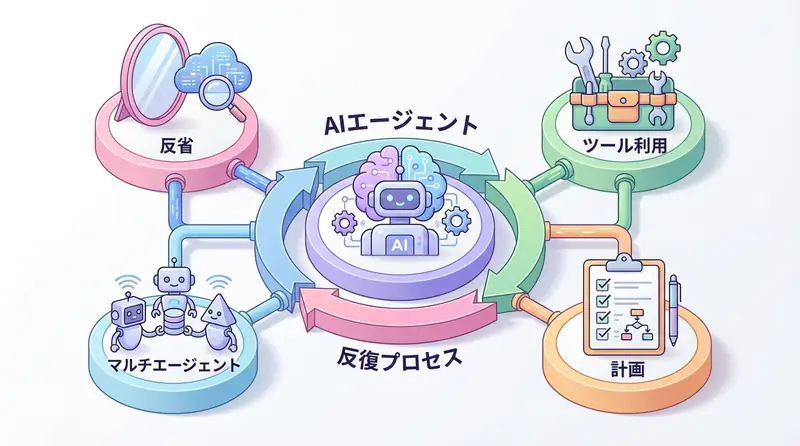

Semantic Kernel (SK) is an open-source AI orchestration framework developed by Microsoft. It integrates LLMs, tools, and human input to build enterprise-grade AI applications.

Key Features

- First-class SDKs for .NET/C# and Python (a Java SDK is also available)

- Plugin system: Building reusable AI skills

- Integration with AutoGen: Simplifying multi-agent systems

- Enterprise readiness: Security, audit, and governance features

In October 2025, Microsoft announced the Microsoft Agent Framework, which unifies Semantic Kernel and AutoGen in a single SDK aimed at enterprise agent development.

Semantic Kernel Architecture

Core Components

- Kernel: The core of AI orchestration

- Plugins: Reusable skills (functions)

- Planners: Automatic task decomposition and execution plan generation

- Memory: Vector storage and context management

- Connectors: LLM, vector DB, and external API connections
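How these components fit together can be sketched in plain Python. The toy below does not use the Semantic Kernel package: `MiniKernel`, `add_plugin`, `invoke`, and `run_plan` are hypothetical names chosen for illustration, and the hand-written `plan` list stands in for what a planner would generate from a goal.

```python
from typing import Any, Callable, Dict, List, Tuple


class MiniKernel:
    """Toy orchestrator: a registry of named plugin functions."""

    def __init__(self) -> None:
        self._plugins: Dict[str, Dict[str, Callable[..., Any]]] = {}

    def add_plugin(self, name: str, functions: Dict[str, Callable[..., Any]]) -> None:
        # A plugin is a named group of reusable functions.
        self._plugins[name] = dict(functions)

    def invoke(self, plugin: str, function: str, *args: Any) -> Any:
        # Look the function up by plugin and function name, then call it.
        return self._plugins[plugin][function](*args)

    def run_plan(self, steps: List[Tuple[str, str]], initial: Any) -> Any:
        # Run the steps in order, feeding each result into the next step.
        value = initial
        for plugin, function in steps:
            value = self.invoke(plugin, function, value)
        return value


mini = MiniKernel()
mini.add_plugin("Text", {"Strip": str.strip, "Upper": str.upper})
mini.add_plugin("Stats", {"Length": len})

# A hand-written plan: (plugin, function) pairs executed in sequence.
plan = [("Text", "Strip"), ("Text", "Upper")]
shouted = mini.run_plan(plan, "  hello kernel  ")
length = mini.invoke("Stats", "Length", shouted)
print(shouted, length)  # prints: HELLO KERNEL 12
```

In the real framework the connectors supply the LLM that chooses or generates such steps; here the sequence is fixed so the control flow stays visible.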

Implementation Example: Basic Setup (Python)

import asyncio

from semantic_kernel import Kernel
from semantic_kernel.connectors.ai.open_ai import OpenAIChatCompletion

# Initialize the kernel
kernel = Kernel()

# Add the LLM service
kernel.add_service(
    OpenAIChatCompletion(
        service_id="gpt-4",
        api_key="your-api-key",
        ai_model_id="gpt-4",
    )
)


async def main() -> None:
    # Execute a simple prompt
    result = await kernel.invoke_prompt("Tell me 3 tourist spots in Tokyo")
    print(result)


asyncio.run(main())

C# Implementation

using Microsoft.SemanticKernel;
using Microsoft.SemanticKernel.Connectors.OpenAI;

// Build the kernel
var builder = Kernel.CreateBuilder();
builder.Services.AddOpenAIChatCompletion(
    "gpt-4",
    "your-api-key"
);
var kernel = builder.Build();

// Execute a prompt
var result = await kernel.InvokePromptAsync("Tell me 3 tourist spots in Tokyo");
Console.WriteLine(result);

Plugin System

Creating Custom Plugins

from semantic_kernel.functions import kernel_function


class MathPlugin:
    @kernel_function(name="Add", description="Adds two numbers")
    def add(self, a: int, b: int) -> int:
        return a + b

    @kernel_function(name="Multiply", description="Multiplies two numbers")
    def multiply(self, a: int, b: int) -> int:
        return a * b


# Add the plugin to the kernel
kernel.add_plugin(MathPlugin(), plugin_name="Math")

# Call a function directly (await requires an async context)
result = await kernel.invoke(
    plugin_name="Math",
    function_name="Add",
    a=10,
    b=20,
)
print(result)  # 30

Native Plugin (Web Search)

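from semantic_kernel.connectors.ai.function_choice_behavior import FunctionChoiceBehavior
from semantic_kernel.connectors.ai.open_ai import OpenAIChatPromptExecutionSettings
from semantic_kernel.connectors.search_engine import BingConnector
from semantic_kernel.core_plugins.web_search_engine_plugin import WebSearchEnginePlugin
from semantic_kernel.functions import KernelArguments

# Bing search plugin: wrap the connector in the built-in web search plugin
bing = BingConnector(api_key="bing-api-key")
kernel.add_plugin(WebSearchEnginePlugin(bing), plugin_name="BingSearch")

# Let the LLM select the tool automatically
settings = OpenAIChatPromptExecutionSettings(
    function_choice_behavior=FunctionChoiceBehavior.Auto()
)
result = await kernel.invoke_prompt(
    "Research and summarize AI technology trends for 2025",
    arguments=KernelArguments(settings=settings),
)

Automatic Task Decomposition with Planners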

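# SequentialPlanner shipped in earlier 1.x releases of the Python SDK;
# newer releases favor automatic function calling instead.
from semantic_kernel.planners import SequentialPlanner

# Create the planner, naming the chat service it should use
planner = SequentialPlanner(kernel, service_id="gpt-4")

# Automatically decompose a complex task
plan = await planner.create_plan("Analyze customer data and send a report via email")

# Execute the plan
result = await plan.invoke(kernel)

Example Generated Plan: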

1. Get customer data from database (DBPlugin.GetCustomers)

2. Execute data analysis (AnalyticsPlugin.Analyze)

3. Generate report (ReportPlugin.GenerateReport)

4. Send email (EmailPlugin.SendEmail)

Integration with AutoGen

Multi-Agent System

from semantic_kernel.agents import AgentGroupChat, ChatCompletionAgent

# Semantic Kernel agents
researcher = ChatCompletionAgent(
    kernel=kernel,
    name="Researcher",
    instructions="Collects the latest information from the web",
)

writer = ChatCompletionAgent(
    kernel=kernel,
    name="Writer",
    instructions="Creates articles based on collected information",
)

# Agent collaboration
chat = AgentGroupChat(agents=[researcher, writer])

# Execute the task: add the request, then iterate over the agents' replies
await chat.add_chat_message(message="Write an article about the latest trends in AI agents")
async for message in chat.invoke():
    print(f"{message.name}: {message.content}")

Microsoft Agent Framework Patterns

Pattern 1: Sequential Workflow

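from semantic_kernel.agents import SequentialOrchestration
from semantic_kernel.agents.runtime import InProcessRuntime

# data_collector, analyzer, and reporter are agents defined elsewhere
flow = SequentialOrchestration(members=[data_collector, analyzer, reporter])

runtime = InProcessRuntime()
runtime.start()

# Each agent's output becomes the next agent's input
result = await flow.invoke(task="Create monthly report", runtime=runtime)
print(await result.get())

await runtime.stop_when_idle()

Pattern 2: Hierarchical Orchestration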

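from semantic_kernel.agents import MagenticOrchestration, StandardMagenticManager
from semantic_kernel.agents.runtime import InProcessRuntime
from semantic_kernel.connectors.ai.open_ai import OpenAIChatCompletion

# The manager decomposes the task and assigns steps to the worker agents
# (Magentic orchestration, an experimental feature of the Python SDK)
manager = StandardMagenticManager(
    chat_completion_service=OpenAIChatCompletion(ai_model_id="gpt-4", api_key="your-api-key")
)

# researcher_agent, coder_agent, and reviewer_agent are defined elsewhere
workers = [researcher_agent, coder_agent, reviewer_agent]
orchestration = MagenticOrchestration(members=workers, manager=manager)

runtime = InProcessRuntime()
runtime.start()

result = await orchestration.invoke(
    task="Design and implement new feature",
    runtime=runtime,
)
print(await result.get())

await runtime.stop_when_idle()

Enterprise Implementation Best Practices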

1. Security and Governance

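import asyncio
import re

from semantic_kernel.filters import FilterTypes, FunctionInvocationContext, PromptRenderContext

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


# Content filtering: a simple PII example that redacts e-mail addresses
# from every rendered prompt before it reaches the model
@kernel.filter(FilterTypes.PROMPT_RENDERING)
async def redact_pii(context: PromptRenderContext, next):
    await next(context)
    context.rendered_prompt = EMAIL.sub("[REDACTED]", context.rendered_prompt or "")


# Retry policy: up to 3 attempts with exponential backoff (factor 2.0)
@kernel.filter(FilterTypes.FUNCTION_INVOCATION)
async def retry(context: FunctionInvocationContext, next):
    for attempt in range(3):
        try:
            await next(context)
            return
        except Exception:
            if attempt == 2:
                raise
            await asyncio.sleep(2.0 ** attempt)

2. Prompt Template Management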

# Prompt template ({{$name}} marks a variable)
template = """
You are {{$role}}.
Task: {{$task}}
Constraints: {{$constraints}}
Answer:
"""

# Keyword arguments are passed to the template as variables
result = await kernel.invoke_prompt(
    template,
    role="Data Analyst",
    task="Analyze sales data",
    constraints="Do not include confidential information",
)

3. Memory and Context Management

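from semantic_kernel.connectors.ai.open_ai import OpenAITextEmbedding
from semantic_kernel.memory import SemanticTextMemory, VolatileMemoryStore

# Memory store backed by an embedding service
# (the embedding model name is an example; use the one available to you)
embeddings = OpenAITextEmbedding(ai_model_id="text-embedding-3-small", api_key="your-api-key")
memory = SemanticTextMemory(storage=VolatileMemoryStore(), embeddings_generator=embeddings)

# Save context
await memory.save_information(
    collection="customer_interactions",
    id="interaction_001",
    text="Customer A showed interest in Product B",
)

# Get context
relevant_info = await memory.search(
    collection="customer_interactions",
    query="Product B purchase history",
    limit=5,
)

Semantic Kernel vs LangChain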

| Feature | Semantic Kernel | LangChain |
|---|---|---|
| Language Support | C#, Python, Java | Python, JavaScript/TypeScript |
| Enterprise Readiness | ★★★★★ | ★★★☆☆ |
| Learning Curve | Medium | Low |
| AutoGen Integration | Native | Third-party |
| Microsoft Ecosystem | ★★★★★ | ★☆☆☆☆ |
| Application Scope | Enterprise, .NET environment | Startup, Python-centric |

Selection Criteria:

- .NET environment: Semantic Kernel only

- Enterprise governance focus: Semantic Kernel

- Rapid prototyping: LangChain

- Python only: Either
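The criteria above can be written as a small decision helper. This is only a sketch: the function name `choose_framework` and its flags are hypothetical, and the priority order (.NET and governance first, then prototyping) is an assumption, since the list does not say how to resolve conflicting criteria.

```python
def choose_framework(dotnet: bool, governance_focus: bool, rapid_prototyping: bool) -> str:
    """Apply the selection criteria in an assumed priority order."""
    # .NET environments and governance-heavy projects point to Semantic Kernel.
    if dotnet or governance_focus:
        return "Semantic Kernel"
    # Speed of experimentation points to LangChain.
    if rapid_prototyping:
        return "LangChain"
    # A Python-only project with no other constraint can use either.
    return "Either"


print(choose_framework(dotnet=True, governance_focus=False, rapid_prototyping=True))
print(choose_framework(dotnet=False, governance_focus=False, rapid_prototyping=True))
print(choose_framework(dotnet=False, governance_focus=False, rapid_prototyping=False))
```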

Implementation Example: Customer Support Agent

from semantic_kernel.agents import ChatCompletionAgent
from semantic_kernel.functions import kernel_function

# Support agent
support_agent = ChatCompletionAgent(
    kernel=kernel,
    name="CustomerSupport",
    instructions="""
You are a customer support representative.
- Answer customer questions politely
- Search FAQ and documentation as needed
- Escalate to human if unable to resolve
""",
)


# FAQ plugin
class FAQPlugin:
    @kernel_function(description="Search FAQ")
    async def search_faq(self, query: str) -> str:
        # Search the vector DB (vector_db is a placeholder for your own client,
        # assumed here to return a list of strings)
        results = await vector_db.search(query, top_k=3)
        return "\n".join(results)


kernel.add_plugin(FAQPlugin(), "FAQ")

# Support response (get_response returns the agent's final reply)
response = await support_agent.get_response(messages="Tell me about your return policy")
print(response)

Frequently Asked Questions

Q1: What’s the difference between LangChain and Semantic Kernel?

LangChain is Python-centric with a wide ecosystem and is suitable for prototyping. Semantic Kernel is provided by Microsoft, has robust C#/.NET support, and features enhanced enterprise governance and security capabilities.

Q2: Are there benefits for Python developers to use Semantic Kernel?

Yes. It integrates well with Microsoft’s latest AI features (like AutoGen), and offers advantages in official support and reliability, especially for enterprise projects using Azure OpenAI Service.

Q3: What is a Plugin?

A module that gives AI specific functions (calculation, web search, internal DB connection, etc.). It’s reusable and serves as the foundation for ‘Function Calling’ where the LLM automatically selects and executes the appropriate Plugin in response to user requests.
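The dispatch step behind Function Calling can be illustrated without any LLM. In this sketch the model's decision is replaced by a hard-coded JSON string; the `TOOLS` registry, the `dispatch` helper, and the `Math-Add` naming are assumptions made for the example, not part of the Semantic Kernel API.

```python
import json
from typing import Any, Callable, Dict

# Registry of callable tools, keyed by the name advertised to the model.
TOOLS: Dict[str, Callable[..., Any]] = {
    "Math-Add": lambda a, b: a + b,
    "Math-Multiply": lambda a, b: a * b,
}


def dispatch(tool_call_json: str) -> Any:
    """Execute the tool call described by a JSON string."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"Unknown tool: {name}")
    # Pass the model-supplied arguments to the selected function.
    return TOOLS[name](**call["arguments"])


# Stand-in for a model response that selected the Add function.
model_output = '{"name": "Math-Add", "arguments": {"a": 10, "b": 20}}'
answer = dispatch(model_output)
print(answer)  # prints: 30
```

In a real application the framework sends the function descriptions to the model, receives a structured call like `model_output`, runs the function, and returns the result to the model so it can compose the final answer.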


Summary

Semantic Kernel is a leading choice for enterprise AI orchestration in the Microsoft ecosystem. It is particularly well suited to:

- Development in .NET environments

- Projects requiring enterprise governance

- Multi-agent construction with AutoGen

Next Steps:

- Learn the basics from the official documentation

- Try implementation with sample projects

- Build multi-agent systems combined with AutoGen

NOTE: In 2025, the Microsoft Agent Framework is accelerating the integration of Semantic Kernel and AutoGen. Check the official documentation regularly for the latest information.


Author’s Perspective: The Future This Technology Brings

The main reason I focus on this technology is its immediate effectiveness in improving productivity in practice.

Many AI technologies are said to “have potential,” but when actually implemented, learning costs and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of being effective from the first day of implementation.

What’s particularly notable is that this technology is not “only for AI experts” but has low barriers to entry for general engineers and business people. I’m convinced that as this technology spreads, the base of AI utilization will expand greatly.

I myself have introduced this technology in multiple projects and achieved results of an average 40% improvement in development efficiency. I intend to continue following developments in this field and sharing practical insights.

