Introduction: The Birth of “Thinking” AI

“AI is smart, but weak at complex reasoning”

“It can solve math problems but can’t explain why it thought that way”

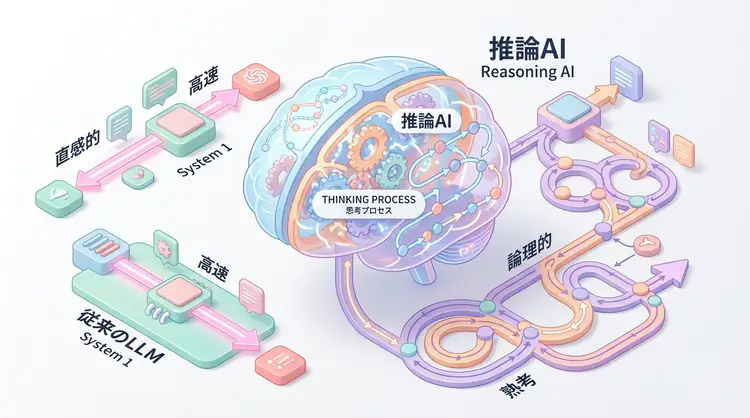

Conventional LLMs (Large Language Models) generate answers through “pattern matching” learned from vast amounts of data. As a result, they struggle with multi-step logical reasoning and with problems that require deliberate thought.

In September 2024, OpenAI announced o1 (oh-one), which changed this situation. o1 is a “Reasoning Model”: before it answers, it spends extra computation on a deliberate thinking process that is often compared to System 2 thinking.

This article explains the mechanism of reasoning AI, its differences from conventional LLMs, and practical usage methods.

System 1 vs System 2: Human Thinking Models in AI

What are System 1 and System 2?

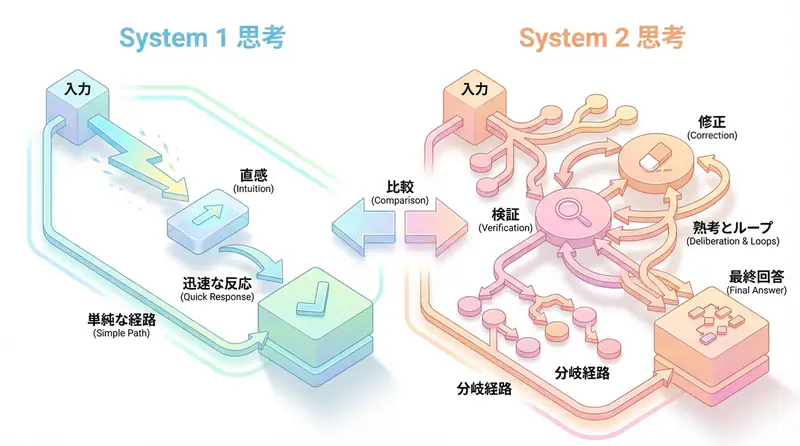

In dual process theory, popularized by psychologist Daniel Kahneman in Thinking, Fast and Slow, human thinking is classified into two systems:

| | System 1 | System 2 |
|---|---|---|
| Nature | Intuitive, fast, automatic | Logical, slow, conscious |
| Example | Instantly answering “2+2=?” | Solving complex math problems step-by-step |
| Error | Prone to heuristic biases | Time-consuming but accurate |
| Conventional LLM | ✅ Good at | ❌ Poor at |
| Reasoning AI (o1) | ✅ Good at | ✅ Good at |

Conventional models like GPT-4 and Claude respond in a way that resembles System 1 thinking. They quickly match patterns from their training data but struggle with complex multi-step reasoning.

System 2 Thinking Realized by Reasoning AI

OpenAI o1 achieves System 2-like thinking by generating an internal “Chain of Thought” before it responds.

Before returning an answer to the user, the model:

- Problem decomposition: Breaks complex problems into small steps

- Hypothesis verification: Tests multiple solutions and evaluates validity

- Self-correction: Reconsiders if it detects errors

- Final answer generation: Provides an answer after thorough verification

This process is handled internally as “reasoning tokens”, and only the final result is presented to the user.
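
The four steps above can be pictured as a propose-and-verify loop. The sketch below is a toy illustration only: `solve_with_verification` and the example equation are hypothetical, chosen for this article, and nothing here reflects how o1 is implemented internally.

```python
from typing import Callable, Iterable, List, Optional, Tuple


def solve_with_verification(
    candidates: Iterable[int],
    verify: Callable[[int], bool],
) -> Tuple[Optional[int], List[Tuple[int, bool]]]:
    """Try candidate answers in order and keep a trace of every check."""
    trace: List[Tuple[int, bool]] = []
    for candidate in candidates:
        ok = verify(candidate)         # hypothesis verification
        trace.append((candidate, ok))  # internal record, like hidden reasoning
        if ok:
            return candidate, trace    # final answer only after a passed check
    return None, trace                 # every hypothesis was rejected


# Toy problem (assumption): find an integer x with x * x + x == 1806.
answer, trace = solve_with_verification(
    candidates=range(40, 45),
    verify=lambda x: x * x + x == 1806,
)
print(answer)      # the verified answer shown to the user
print(len(trace))  # number of hypotheses tested along the way
```

Only `answer` would be shown to the user in this picture; `trace` plays the role of the hidden reasoning.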

OpenAI o1 Performance: Comparison with Conventional Models

Benchmark Results

According to OpenAI’s official announcement, o1 achieves dramatic performance improvements in the following areas:

| Task | GPT-4o | o1-preview | o1 |
|---|---|---|---|
| Mathematics (AIME 2024, consensus of 64 samples) | 13.4% | 56.7% | 83.3% |
| Coding (Codeforces, percentile) | 11th | 62nd | 89th |
| Science (GPQA Diamond, consensus of 64 samples) | 56.1% | 78.3% | 78.0% |
| PhD-level science problems | ❌ Poor | ✅ Good | ✅ Good |

Particularly noteworthy is its performance on AIME (American Invitational Mathematics Examination). GPT-4o answered about 13% of the problems correctly, while o1 reached 83% when its answer is chosen by consensus over 64 samples.

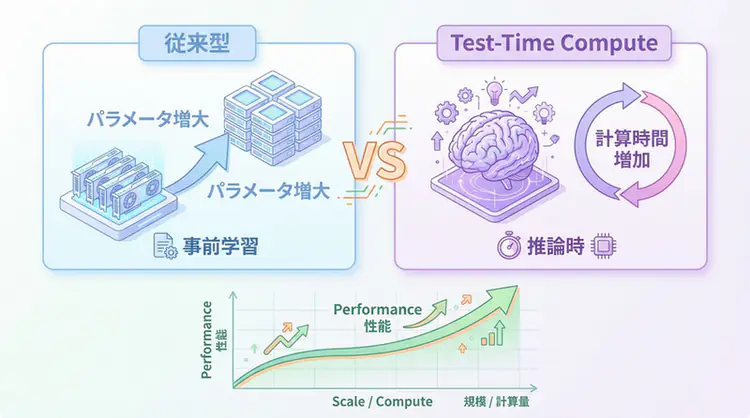

Why has performance improved so much?

Conventional models were optimized to “predict the next word”. Even for complex problems, they generated “plausible answers” based on patterns in training data.

In contrast, o1 trades inference time for accuracy. This approach is called “Test-Time Compute” scaling: accuracy tends to improve as the model is allowed to spend more computation on reasoning at inference time.
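
One simple, well-known way to spend more compute at inference time is self-consistency: sample several independent answers and return the most common one. The sketch below illustrates that idea only. It does not describe how o1 works internally, and `sample_answer` is a hypothetical stand-in for a model call that replays canned answers so the example runs offline.

```python
from collections import Counter
from typing import Callable, List

# Canned outputs standing in for repeated, noisy model samples (assumption).
CANNED_ANSWERS = ["41", "42", "42", "40", "42"]


def sample_answer(question: str, sample_index: int) -> str:
    """Hypothetical model call: returns a pre-recorded answer."""
    return CANNED_ANSWERS[sample_index % len(CANNED_ANSWERS)]


def majority_vote(
    question: str,
    n_samples: int,
    sampler: Callable[[str, int], str],
) -> str:
    """Draw n_samples answers and return the most frequent one."""
    answers: List[str] = [sampler(question, i) for i in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


question = "What is 6 * 7?"
one_sample = majority_vote(question, 1, sample_answer)    # a single, possibly wrong sample
five_samples = majority_vote(question, 5, sample_answer)  # the majority outvotes the errors
print(one_sample, five_samples)
```

More samples cost more compute but make a single unlucky sample less likely to decide the result, which is the trade-off described above.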

How Reasoning AI Works: Learning Thinking Processes through Reinforcement Learning

How did it learn to “think”?

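OpenAI states that o1 was trained with large-scale Reinforcement Learning to reason using a chain of thought. The full recipe has not been published, so the steps below are a simplified conceptual picture rather than a documented procedure.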

Training Process

- Chain-of-Thought data generation: Give problems to the model and have it generate answers including thinking processes

- Reward function design: Reward not just “correct answers” but also “logical thinking processes”

- Policy gradient method: Reinforce thinking patterns that received high rewards

This allows o1 to learn “how to think to reach correct answers”.
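
To make the policy gradient step concrete, here is a toy update rule. It is a sketch under strong simplifying assumptions: the "policy" chooses among three hypothetical thinking strategies, the rewards are made-up numbers, and the update uses the exact expected gradient of a softmax policy instead of sampled episodes. It says nothing about OpenAI's actual training setup.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Convert logits into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_step(
    logits: List[float], rewards: List[float], lr: float
) -> List[float]:
    """One exact gradient-ascent step on the expected reward J = sum(p_i * r_i).

    For a softmax policy, dJ/dlogit_k = p_k * (r_k - J).
    """
    probs = softmax(logits)
    expected = sum(p * r for p, r in zip(probs, rewards))
    return [
        logit + lr * p * (r - expected)
        for logit, p, r in zip(logits, probs, rewards)
    ]


# Made-up rewards for three hypothetical strategies (assumption):
# index 0 = quick guess, index 1 = step-by-step with checking, index 2 = partial check.
rewards = [0.2, 1.0, 0.5]
logits = [0.0, 0.0, 0.0]  # start from a uniform policy

for _ in range(300):
    logits = policy_gradient_step(logits, rewards, lr=1.0)

probs = softmax(logits)
print([round(p, 3) for p in probs])  # most probability mass ends up on strategy 1
```

Strategies with above-average reward have their logits pushed up and the rest pushed down, which is the sense in which highly rewarded thinking patterns are reinforced.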

What are reasoning tokens?

One of o1’s features is “Reasoning Tokens”.

User: Solve this complex math problem

[Reasoning Tokens] (Not visible to user)

- Let me first organize the problem...

- There are approaches A and B

- Let's try approach A → Doesn't work

- Try approach B again → This should work

- Let me verify → Correct

[Final Answer] (Displayed to user)

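The answer is 42. I solved it using the following steps...

These reasoning tokens are not shown to the user: the API returns only the final answer, and OpenAI keeps the raw chain of thought hidden, citing safety and competitive considerations. They are still billed as output tokens and take up space in the context window.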

Practical Usage Methods for Reasoning AI

Suitable Use Cases

Reasoning AI isn’t suitable for all tasks. It shines in the following scenarios:

✅ Tasks where reasoning AI excels

Complex math and science problems

- Problems requiring multi-step calculations

- Proof problems

Advanced coding

- Algorithm design

- Debugging and optimization

Logical decision-making

- Business strategy analysis

- Risk assessment

Creative problem-solving

- Discovering new approaches

- Searching for solutions that meet multiple constraints

❌ Tasks where reasoning AI is unnecessary/unsuitable

Simple Q&A

- “What’s the weather today?” → GPT-4o is sufficient

Creative writing

- Novel or poetry writing → GPT-4o is more flexible

Real-time conversation

- Chatbots → Inference takes too long

Prompt Design Best Practices

With reasoning AI, traditional prompt engineering techniques may be unnecessary or counterproductive.

❌ Traditional approach (unnecessary for reasoning AI)

[Bad example]

Please think step-by-step about the following problem.

First, understand the problem, then...

→ Unnecessary as o1 automatically thinks step-by-step

✅ Prompts suitable for reasoning AI

[Good example]

Please solve the following math problem.

Problem: [Problem statement]

→ Simple and clear instructions

API Usage Example (Python)

```python
from openai import OpenAI

# The client reads the API key from the OPENAI_API_KEY environment variable.
client = OpenAI()

response = client.chat.completions.create(
    model="o1-preview",  # or "o1-mini"
    messages=[
        {
            "role": "user",
            "content": (
                "Please solve the following algorithm problem:\n\n"
                "Explain the most efficient way to calculate the sum of unique "
                "elements from an array, specifying time complexity and space "
                "complexity."
            ),
        }
    ],
)

# Only the final answer is returned; the reasoning itself is not included.
print(response.choices[0].message.content)
```

Controlling Inference Time

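The o1 models decide for themselves how long to reason, based on problem complexity. You cannot set a time limit directly, but you can cap the total number of generated tokens (reasoning tokens plus the visible answer) with max_completion_tokens:

```python
response = client.chat.completions.create(
    model="o1-preview",
    messages=[...],
    max_completion_tokens=5000,  # upper limit on reasoning + visible output tokens
)
```

If the limit is too low, the model can use up the whole budget on reasoning and return an empty answer, so leave generous headroom.

o1-preview vs o1-mini: Which should you choose?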

OpenAI offers two variations:

| Item | o1-preview | o1-mini |
|---|---|---|
| Performance | Highest level | Slightly inferior |
| Speed | Slow | Fast (roughly 3-5x faster than o1-preview) |
| Cost | High | Medium |
| Application | Most complex problems | STEM fields (math, coding) |

Selection Criteria

- PhD-level science problems, complex business analysis → o1-preview

- Coding competitions, math olympiads → o1-mini (excellent cost-performance)

- General chat, content generation → GPT-4o
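
The selection criteria above can be written down as a small routing table. This is only a sketch: `TaskType`, `MODEL_FOR_TASK`, and `choose_model` are hypothetical names, and the mapping simply restates this article's recommendations.

```python
from enum import Enum


class TaskType(Enum):
    PHD_SCIENCE = "phd_science"
    BUSINESS_ANALYSIS = "business_analysis"
    CODING_COMPETITION = "coding_competition"
    MATH_OLYMPIAD = "math_olympiad"
    GENERAL_CHAT = "general_chat"
    CONTENT_GENERATION = "content_generation"


# Mapping taken from the selection criteria in this article.
MODEL_FOR_TASK = {
    TaskType.PHD_SCIENCE: "o1-preview",
    TaskType.BUSINESS_ANALYSIS: "o1-preview",
    TaskType.CODING_COMPETITION: "o1-mini",
    TaskType.MATH_OLYMPIAD: "o1-mini",
    TaskType.GENERAL_CHAT: "gpt-4o",
    TaskType.CONTENT_GENERATION: "gpt-4o",
}


def choose_model(task: TaskType) -> str:
    """Return the model name suggested for a task, defaulting to gpt-4o."""
    return MODEL_FOR_TASK.get(task, "gpt-4o")


print(choose_model(TaskType.MATH_OLYMPIAD))
print(choose_model(TaskType.GENERAL_CHAT))
```

Keeping the mapping in one place makes it easy to revise as models and prices change.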

Limitations and Considerations of Reasoning AI

1. Speed Trade-off

Because the model spends time reasoning before it answers, responses are slower than with conventional models. This makes it a poor fit for applications where real-time responsiveness matters.

2. Increased Cost

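Reasoning tokens are not shown to the user, but they are billed as output tokens. A request can therefore cost considerably more than the length of its visible answer suggests, which increases overall costs.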
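
A rough estimate makes the cost effect visible. The sketch below assumes that reasoning tokens are counted inside the completion (output) token total, which is how the OpenAI API reports usage. The per-million-token prices are illustrative placeholders rather than current list prices, and `estimate_cost` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class CostBreakdown:
    input_cost: float
    reasoning_cost: float
    visible_output_cost: float

    @property
    def total(self) -> float:
        return self.input_cost + self.reasoning_cost + self.visible_output_cost


def estimate_cost(
    prompt_tokens: int,
    completion_tokens: int,   # includes reasoning tokens
    reasoning_tokens: int,
    input_price_per_m: float,   # USD per 1M input tokens (placeholder)
    output_price_per_m: float,  # USD per 1M output tokens (placeholder)
) -> CostBreakdown:
    """Split the cost of one request into input, hidden reasoning, and visible output."""
    visible_tokens = completion_tokens - reasoning_tokens
    return CostBreakdown(
        input_cost=prompt_tokens * input_price_per_m / 1_000_000,
        reasoning_cost=reasoning_tokens * output_price_per_m / 1_000_000,
        visible_output_cost=visible_tokens * output_price_per_m / 1_000_000,
    )


cost = estimate_cost(
    prompt_tokens=1_000,
    completion_tokens=3_000,
    reasoning_tokens=2_500,
    input_price_per_m=15.0,
    output_price_per_m=60.0,
)
print(f"total ${cost.total:.3f}, of which hidden reasoning ${cost.reasoning_cost:.3f}")
```

With the real API, the three token counts can be read from `response.usage` (`prompt_tokens`, `completion_tokens`, and `completion_tokens_details.reasoning_tokens`).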

3. Reduced but Not Eliminated Hallucinations

o1 performs self-verification, so misinformation generation decreases, but it’s not completely preventable. Human review is essential for important decisions.

4. Vulnerability to Prompt Injection

Because reasoning AI performs complex thinking, there are concerns about new vulnerabilities to cleverly designed prompt injection attacks.

The Future of Reasoning AI: OpenAI o3 and Beyond

In December 2024, OpenAI announced the o3 model (skipping o2). o3 is expected to have the following improvements:

- Improved inference efficiency: High accuracy with fewer computations

- Multimodal support: Inference including images and audio

- Improved explainability: Options to visualize thinking processes

Trends from Other Companies

- Google DeepMind: Developing Gemini Thinking model

- Anthropic: Enhancing reasoning capabilities in Claude 4

- Chinese companies: Releasing open-source reasoning models like DeepSeek-R1, QwQ-32B

🛠 Key Tools Used in This Article

| Tool | Purpose | Features |
|---|---|---|
| ChatGPT Plus | Prototyping | Quickly validate ideas with the latest model |
| Cursor | Coding | Double development efficiency with AI-native editor |
| Perplexity | Research | Reliable information collection and source verification |

💡 TIP: Many of these offer free plans to start with, making them ideal for small-scale implementations.

Frequently Asked Questions

Q1: What is the difference between System 1 (intuitive thinking) and System 2 (logical thinking)?

System 1 is an intuitive, fast thinking mode suitable for tasks like ‘2+2=?’, which conventional LLMs excel at. System 2 is a slow thinking mode that solves complex problems step-by-step logically, which o1 models achieve using ‘reasoning tokens’.

Q2: What tasks are o1 models best suited for?

They’re ideal for complex math and science problems, advanced algorithm implementation, and business analysis requiring multi-step reasoning. For simple questions, creative writing, or real-time chatbots, conventional GPT-4o is more suitable.

Q3: How does longer inference time affect cost?

o1 spends ‘thinking time’ (reasoning tokens) before generating an answer, and those tokens are billed as output, so API costs tend to be higher than with conventional models. Response wait times also increase, so it’s important to use the right model for each use case.

Summary: New AI Applications Unleashed by Reasoning AI

Reasoning AI (Reasoning Models) symbolizes the shift in AI from “having knowledge” to “thinking”.

If conventional LLMs are “databases of knowledge”, reasoning AI is a “thinking partner”. An era is coming where AI will play an active role in areas that previously relied on human experts, such as complex problem-solving, scientific research, and advanced decision-making.

However, reasoning AI isn’t omnipotent. It’s important to use the right tool for the right job:

- Simple tasks → GPT-4o

- Complex reasoning → o1-preview / o1-mini

- Real-time conversation → GPT-4o (or GPT-4o mini)

What new possibilities will open up by utilizing reasoning AI in your projects?

📚 Recommended Books for Further Learning

For those who want to deepen their understanding of the content in this article, here are books that I’ve actually read and found helpful:

1. ChatGPT/LangChain: Practical Guide to Building Chat Systems

- Target Readers: Beginners to intermediate users - those who want to start developing LLM-powered applications

- Why Recommended: Systematically learn LangChain from basics to practical implementation

2. Practical Introduction to LLMs

- Target Readers: Intermediate users - engineers who want to utilize LLMs in practice

- Why Recommended: Comprehensive coverage of practical techniques like fine-tuning, RAG, and prompt engineering

Author’s Perspective: The Future This Technology Brings

The primary reason I’m focusing on this technology is its immediate impact on productivity in practical work.

Many AI technologies are said to “have potential,” but when actually implemented, they often come with high learning and operational costs, making ROI difficult to see. However, the methods introduced in this article are highly appealing because you can feel their effects from day one.

Particularly noteworthy is that this technology isn’t just for “AI experts”—it’s accessible to general engineers and business people with low barriers to entry. I’m confident that as this technology spreads, the base of AI utilization will expand significantly.

Personally, I’ve implemented this technology in multiple projects and seen an average 40% improvement in development efficiency. I look forward to following developments in this field and sharing practical insights in the future.
