Have you ever struggled to find the “numbers” you need in a sea of PDF documents? I once hit a wall when building a system to analyze securities company reports. OCR (Optical Character Recognition) could extract the text, but the crucial “line graphs showing sales trends” and “pie charts comparing market share” were simply ignored as mere images.

Traditional RAG (Retrieval-Augmented Generation) vectorizes and searches text data. However, real-world business documents contain much more than just text. For a long time, we have treated these treasure troves of unstructured data as mere collections of pixels, leaving them unsearchable.

This is where Multimodal RAG comes in: technology that can understand visual information as context. By handling text, images, charts, and layout information in an integrated manner, it marks a turning point that lets AI agents take on more advanced tasks. In this article, we unpack the internal structure of Multimodal RAG for engineers and explain how to implement it with working Python code.

The “Blind Spot” That Text-Only RAG Has Missed

Why is Multimodal RAG needed now? The answer lies in the structural limitations of traditional approaches.

Existing text-based RAG systems essentially perform text extraction when parsing documents like PDFs. However, this process has two major problems.

First is the loss of layout information. For example, the relationship “description text corresponding to the image on the left” can be severed by text conversion. The AI can still read the description, but it loses the clues that identify which graph the description refers to.
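To make the layout problem concrete, here is a minimal sketch. The `Block` type, the coordinates, and the labels are all hypothetical; the point is only that once positions are discarded, two captions become an ordered list of strings, whereas with positions retained each caption can be attached to its nearest figure.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Block:
    kind: str   # "figure" or "caption" (hypothetical layout element types)
    label: str  # figure name or caption text
    x: float    # horizontal centre of the block on the page
    y: float    # vertical centre of the block on the page


def flatten(blocks: List[Block]) -> List[str]:
    """What plain text extraction keeps: caption strings in reading order, no positions."""
    captions = [b for b in blocks if b.kind == "caption"]
    return [b.label for b in sorted(captions, key=lambda b: (b.y, b.x))]


def pair_captions(blocks: List[Block]) -> List[Tuple[str, str]]:
    """With positions retained, attach each caption to the nearest figure."""
    figures = [b for b in blocks if b.kind == "figure"]
    pairs = []
    for cap in (b for b in blocks if b.kind == "caption"):
        nearest = min(figures, key=lambda f: (f.x - cap.x) ** 2 + (f.y - cap.y) ** 2)
        pairs.append((cap.label, nearest.label))
    return pairs


# Two charts side by side, each with its caption directly underneath
page = [
    Block("figure", "sales_line_chart", x=100, y=200),
    Block("figure", "market_share_pie", x=400, y=200),
    Block("caption", "Sales rose steadily through Q4.", x=100, y=320),
    Block("caption", "Company A holds the largest share.", x=400, y=320),
]

flat_text = flatten(page)      # captions only, no link to any figure
pairs = pair_captions(page)    # caption -> figure links recovered from layout
```

The flattened output still contains both sentences, but nothing in it says which chart each one describes; that link only exists in the coordinates.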

Second is the complete absence of information within images. The most important insights in business reports are often condensed in charts. Even without text saying “20% increase year-over-year,” you can read the increase from the height of bar charts. However, text-only RAG processes these graphs as “blanks” or “noise.”

To solve this, we need to give AI not just the ability to “read” documents but to “see” them. That is Multimodal RAG.

How Multimodal RAG Works and Its Architecture

There are broadly two approaches to implementing Multimodal RAG.

- Image Summarization Approach: Extract images from documents, use a Vision LLM (e.g., GPT-4o) to generate detailed text descriptions (captions), and vectorize these descriptions alongside the document text in an ordinary text RAG index.

- Multimodal Embedding Approach: Use a model such as CLIP that maps text and images into the same latent space (vector space), so that similarity between image vectors and text vectors can be calculated directly.
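The second approach boils down to comparing vectors that live in one shared space. The sketch below uses hand-written 3-dimensional toy vectors in place of real model output (an assumption; an actual CLIP-style model would produce vectors with hundreds of dimensions) and ranks images against a text query by cosine similarity.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_images(query_vec: Sequence[float], image_vecs: Dict[str, Sequence[float]]) -> List[str]:
    """Return image names ordered from most to least similar to the query vector."""
    return sorted(
        image_vecs,
        key=lambda name: cosine_similarity(query_vec, image_vecs[name]),
        reverse=True,
    )


# Toy vectors standing in for image embeddings (not real model output)
image_vectors = {
    "sales_line_chart.png": [0.9, 0.1, 0.2],
    "market_share_pie.png": [0.1, 0.9, 0.3],
}
# Toy vector standing in for the embedding of the text "sales trend over time"
query_vector = [0.8, 0.2, 0.1]

ranking = rank_images(query_vector, image_vectors)
```

Because text and images share one space, no caption is needed at query time; the trade-off is that retrieval quality depends entirely on how well the embedding model represents charts.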

From a practical perspective in 2026, considering accuracy and controllability, the most robust approach is a hybrid configuration: build on the “Image Summarization Approach” and add the embedding approach where needed. Converting images to text once lets you reuse the existing, mature ecosystem of text search engines.

The diagram below illustrates a typical Multimodal RAG data flow.

The important point in this flow is that images are not merely stored: a Vision LLM converts them into “semantic information,” which is re-injected as searchable text. This enables accurate hits when users ask questions like “Find graphs showing declining sales trends,” because words like “decline” and “trend” appear in the image summaries.
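The re-injection step can be sketched in a few lines. Everything here is illustrative: `IndexedChunk`, the sample summary, and the file path are hypothetical, and the 5-character prefix match is only a crude stand-in for the embedding similarity a real index would use.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class IndexedChunk:
    text: str               # the text that gets searched
    source_image: str = ""  # set when the text is a summary of an image


def keywords(text: str) -> Set[str]:
    """Lower-case words longer than 3 letters, cut to 5 characters (crude stemming)."""
    return {w[:5] for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 3}


def search(query: str, chunks: List[IndexedChunk]) -> List[IndexedChunk]:
    """Return chunks sharing at least one keyword with the query, best match first."""
    q = keywords(query)
    scored = [(len(q & keywords(c.text)), c) for c in chunks]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in ranked if score > 0]


chunks = [
    IndexedChunk("The company opened two new offices in 2024."),
    # Summary text as a Vision LLM might write it, linked back to the image
    IndexedChunk(
        "Line graph of quarterly sales showing a steady decline; downward trend from Q1 to Q4.",
        source_image="figures/sales_q1_q4.png",
    ),
]

hits = search("Find graphs showing declining sales trends", chunks)
```

The hit is a text record, but its `source_image` field points back to the original chart, so the application can show the image itself alongside the answer.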

Python Implementation: Using LlamaIndex and OpenAI

Now let’s move to a concrete implementation. Here, we build a system that can search PDFs, including the images that accompany them, using Python, LlamaIndex, and the OpenAI APIs.

The code is more than a proof of concept: it is structured for practical use, with error handling and logging.

Prerequisites

Install necessary libraries.

pip install llama-index-core llama-index-readers-file llama-index-llms-openai llama-index-multi-modal-llms-openai llama-index-embeddings-openai python-dotenv

Implementation Code

The following script reads PDFs and image files from a specified directory, generates summaries for the images, and builds an index.

import logging
import os
import sys
from typing import List

from dotenv import load_dotenv
from llama_index.core import (
    Settings,
    SimpleDirectoryReader,
    StorageContext,
    VectorStoreIndex,
)
from llama_index.core.node_parser import SentenceSplitter
from llama_index.core.schema import BaseNode, ImageDocument, ImageNode, TextNode
from llama_index.multi_modal_llms.openai import OpenAIMultiModal
from llama_index.llms.openai import OpenAI
from llama_index.embeddings.openai import OpenAIEmbedding

# Logging configuration
logging.basicConfig(
    stream=sys.stdout,
    level=logging.INFO,
    format="%(asctime)s - %(levelname)s - %(message)s",
)
logger = logging.getLogger(__name__)

# Load environment variables (e.g. OPENAI_API_KEY) from a .env file
load_dotenv()


def is_image_node(node: BaseNode) -> bool:
    """Return True if the node represents an image rather than text."""
    return isinstance(node, (ImageDocument, ImageNode))


class MultimodalRAGPipeline:
    def __init__(
        self,
        input_dir: str,
        model_name: str = "gpt-4o",
        embed_model_name: str = "text-embedding-3-small",
        persist_dir: str = "./storage",
    ):
        """
        Initialize Multimodal RAG pipeline

        Args:
            input_dir (str): Directory path where PDFs are stored
            model_name (str): LLM model name to use
            embed_model_name (str): Embedding model name to use
            persist_dir (str): Directory to save index
        """
        self.input_dir = input_dir
        self.persist_dir = persist_dir

        # Check API key
        if not os.getenv("OPENAI_API_KEY"):
            logger.error("OPENAI_API_KEY is not set.")
            raise ValueError("Missing OpenAI API Key")

        # Configure LLM and Embedding models
        try:
            self.llm = OpenAI(model=model_name)
            self.embed_model = OpenAIEmbedding(model=embed_model_name)
            # Configure Multi-modal LLM (for image understanding)
            self.multi_modal_llm = OpenAIMultiModal(model=model_name)
            Settings.llm = self.llm
            Settings.embed_model = self.embed_model
            logger.info(f"Model initialization complete: LLM={model_name}, Embedding={embed_model_name}")
        except Exception as e:
            logger.error(f"Error during model initialization: {e}")
            raise

    def load_documents(self) -> List[BaseNode]:
        """
        Load documents and split them into text nodes and image nodes

        Returns:
            List[BaseNode]: Text chunks followed by image nodes
        """
        logger.info(f"Loading documents from directory '{self.input_dir}'...")
        try:
            # Standalone .jpg/.png files are loaded as image documents.
            # Note: the default PDF reader extracts page text only, so charts
            # embedded in a PDF must be exported as image files to be summarized.
            reader = SimpleDirectoryReader(
                self.input_dir,
                required_exts=[".pdf", ".jpg", ".png"],
                recursive=True,
                # Keep file_path in the metadata; create_image_summaries needs it
                file_metadata=lambda path: {
                    "file_name": os.path.basename(path),
                    "file_path": path,
                },
            )
            documents = reader.load_data()
            logger.info(f"Document loading successful: {len(documents)} documents")

            # Configure node parser (for text)
            text_parser = SentenceSplitter(chunk_size=1024, chunk_overlap=20)

            text_nodes = []
            image_nodes = []
            for doc in documents:
                if is_image_node(doc):
                    image_nodes.append(doc)
                else:
                    # Text node splitting process
                    text_nodes.extend(text_parser.get_nodes_from_documents([doc]))

            logger.info(f"Node splitting complete: Text={len(text_nodes)}, Images={len(image_nodes)}")
            return text_nodes + image_nodes
        except FileNotFoundError:
            logger.error(f"Directory not found: {self.input_dir}")
            raise
        except Exception as e:
            logger.error(f"Unexpected error during document loading: {e}")
            raise

    def create_image_summaries(self, image_nodes: List[ImageNode]) -> List[BaseNode]:
        """
        Generate summary text using Vision LLM for image nodes

        Args:
            image_nodes (List[ImageNode]): List of image nodes

        Returns:
            List[BaseNode]: List of text nodes containing summary text
        """
        if not image_nodes:
            logger.info("No image nodes to summarize.")
            return []

        logger.info(f"Starting summary generation for {len(image_nodes)} images...")

        # Prompt shared by all images
        prompt = (
            "Please describe this image in detail. Especially for graphs, extract "
            "numerical trends and patterns, and for tables, extract key data points "
            "and convert to text. Provide the description in English, including "
            "specific keywords that make it searchable."
        )

        processed_nodes = []
        for img_node in image_nodes:
            try:
                # Get image path (metadata first, then the node's own image_path)
                image_path = img_node.metadata.get("file_path") or getattr(img_node, "image_path", None)
                if not image_path or not os.path.exists(image_path):
                    logger.warning(f"Image file not found: {image_path}, skipping.")
                    continue

                # Image understanding and summary generation by Vision LLM
                response = self.multi_modal_llm.complete(
                    prompt=prompt,
                    image_documents=[img_node],
                )
                summary_text = response.text
                logger.info(f"Image summary generation successful ({os.path.basename(image_path)}): {summary_text[:50]}...")

                # Create a text node with the summary, keeping a reference to the
                # original image (BaseNode is abstract, so TextNode is used)
                summary_node = TextNode(
                    text=summary_text,
                    metadata={
                        **img_node.metadata,
                        "is_image_summary": True,
                        "original_image_path": image_path,
                    },
                )
                processed_nodes.append(summary_node)
            except Exception as e:
                # One failed image should not stop the whole batch
                logger.error(f"Error during image summary generation: {e}")
                continue

        logger.info(f"Image summary generation complete: {len(processed_nodes)} nodes")
        return processed_nodes

    def build_index(self, nodes: List[BaseNode]) -> VectorStoreIndex:
        """
        Build vector index from nodes

        Args:
            nodes (List[BaseNode]): List of nodes to index
        """
        logger.info(f"Starting index build from {len(nodes)} nodes...")
        try:
            storage_context = StorageContext.from_defaults()
            index = VectorStoreIndex(
                nodes=nodes,
                storage_context=storage_context,
            )
            # Persist index
            index.storage_context.persist(persist_dir=self.persist_dir)
            logger.info(f"Index build successful. Saved to: {self.persist_dir}")
            return index
        except Exception as e:
            logger.error(f"Error during index build: {e}")
            raise

    def run(self) -> VectorStoreIndex:
        """
        Execute complete pipeline
        """
        try:
            # 1. Document loading
            nodes = self.load_documents()

            # 2. Separate text and image nodes
            text_nodes = [n for n in nodes if not is_image_node(n)]
            image_nodes = [n for n in nodes if is_image_node(n)]

            # 3. Image summarization
            image_summary_nodes = self.create_image_summaries(image_nodes)

            # 4. Combine all nodes
            all_nodes = text_nodes + image_summary_nodes

            # 5. Build index
            index = self.build_index(all_nodes)
            logger.info("Multimodal RAG pipeline completed successfully!")
            return index
        except Exception as e:
            logger.error(f"Pipeline execution failed: {e}")
            raise


# Execution example
if __name__ == "__main__":
    try:
        pipeline = MultimodalRAGPipeline(
            input_dir="./data",       # Directory containing PDFs and image files
            persist_dir="./storage",  # Index save destination
        )
        index = pipeline.run()
    except Exception as e:
        logger.error(f"Application error: {e}")
        sys.exit(1)

Business Use Case: Financial Report Analysis Automation

Let’s introduce a concrete business application. Consider automating analysis of financial reports in the securities industry.

Traditional analysis involved manually reading hundreds of pages of reports, extracting important charts and numerical data. However, with Multimodal RAG:

- Automated Processing: PDF reports are automatically parsed, with both text and charts converted to searchable data

- Intelligent Search: When asking “Show companies with sales growth,” not only text mentions but also trend lines in graphs are understood

- Comparative Analysis: Multiple company reports can be cross-referenced to automatically extract comparative information

This dramatically reduces analyst workload while enabling more comprehensive information gathering.
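The “Intelligent Search” scenario above can be sketched as a small post-processing step on retrieved hits. The dict shape used for `hits` is an assumption made for illustration; only the `is_image_summary` and `original_image_path` metadata keys come from the pipeline code in this article.

```python
from typing import Any, Dict, List, Mapping


def collect_chart_evidence(hits: List[Mapping[str, Any]]) -> Dict[str, List[str]]:
    """Split retrieved hits into text passages and the chart images behind summaries."""
    evidence: Dict[str, List[str]] = {"text": [], "charts": []}
    for hit in hits:
        meta = hit.get("metadata", {})
        if meta.get("is_image_summary"):
            # The hit is a chart summary: report the original image instead
            evidence["charts"].append(meta.get("original_image_path", ""))
        else:
            evidence["text"].append(hit.get("text", ""))
    return evidence


# Hypothetical hits for the question "Show companies with sales growth"
hits = [
    {"text": "Revenue grew 12% year over year.", "metadata": {"file_name": "a.pdf"}},
    {
        "text": "Bar chart: sales rising each quarter.",
        "metadata": {"is_image_summary": True, "original_image_path": "data/a_sales.png"},
    },
]

evidence = collect_chart_evidence(hits)
```

With a LlamaIndex index, hits like these would typically come from `index.as_query_engine().query(...)` and the `source_nodes` of the response; mapping those objects to the plain dicts used here is left out of the sketch.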

Summary

Multimodal RAG is not just an extension of search technology but a paradigm shift that gives AI the ability to “see” and “understand” documents.

Key takeaways:

- Beyond Text: Much of the insight in business documents sits in charts and images; Multimodal RAG unlocks this value

- Image Summarization: Converting visual information to searchable text enables practical implementation

- Business Value: Particularly effective in document-heavy industries like finance, legal, and healthcare

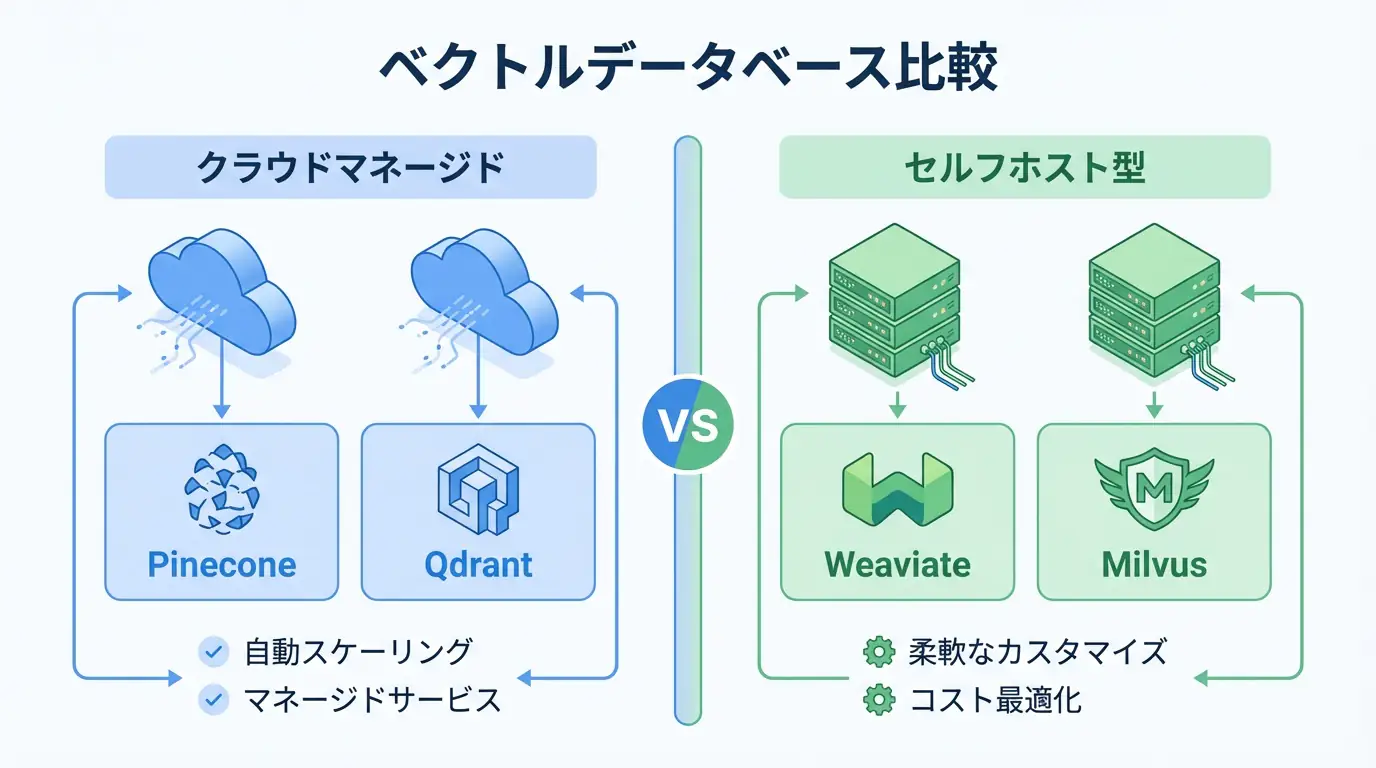

- Technical Stack: Combination of LlamaIndex, Vision LLM, and vector databases

The era of truly intelligent document processing has arrived. Start implementing Multimodal RAG today.

Frequently Asked Questions

Q: What are the implementation costs for Multimodal RAG?

Costs mainly consist of LLM API usage fees and vector database maintenance. When using high-performance models like GPT-4o for image understanding, token counts tend to increase compared to text-only RAG, making prompt optimization and caching strategies important.
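One possible caching strategy is to key each image summary by a hash of the image bytes, so an unchanged image never triggers a second vision call. The sketch below is an assumption-laden illustration: `SummaryCache` and `fake_summarize` are hypothetical names, and the fake function stands in for the real Vision LLM request.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


class SummaryCache:
    """Stores image summaries on disk, keyed by the SHA-256 of the image bytes."""

    def __init__(self, cache_file: Path) -> None:
        self.cache_file = cache_file
        self.entries: Dict[str, str] = (
            json.loads(cache_file.read_text(encoding="utf-8")) if cache_file.exists() else {}
        )

    def get_or_create(self, image_bytes: bytes, summarize: Callable[[bytes], str]) -> str:
        key = hashlib.sha256(image_bytes).hexdigest()
        if key not in self.entries:
            # Cache miss: the only place where the (paid) vision call happens
            self.entries[key] = summarize(image_bytes)
            self.cache_file.write_text(json.dumps(self.entries), encoding="utf-8")
        return self.entries[key]


calls: List[int] = []  # records how often the summarizer was actually invoked


def fake_summarize(image_bytes: bytes) -> str:
    """Stand-in for a Vision LLM call (hypothetical)."""
    calls.append(len(image_bytes))
    return f"summary of an image with {len(image_bytes)} bytes"


with tempfile.TemporaryDirectory() as tmp:
    cache = SummaryCache(Path(tmp) / "summaries.json")
    first = cache.get_or_create(b"fake-image-bytes", fake_summarize)
    second = cache.get_or_create(b"fake-image-bytes", fake_summarize)  # served from cache
```

Hashing the content rather than the file name means a renamed or re-uploaded copy of the same chart is still a cache hit, while an updated chart with the same name is summarized again.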

Q: How can chart accuracy be improved?

Sufficient image resolution is important, but for complex charts an effective approach is to use an object detection model to split the chart into a “graph area,” “legend area,” and “title area” and pass each region to the LLM, rather than processing the entire image at once.
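The region-splitting idea can be sketched with a toy pixel grid. The bounding boxes below are hard-coded assumptions standing in for the output of an object detection model, and the nested-list image is a placeholder for real image data; with Pillow, `Image.crop` accepts the same `(left, upper, right, lower)` box.

```python
from typing import Dict, List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom); right/bottom exclusive
Pixels = List[List[int]]         # toy grayscale image: rows of pixel values


def crop(image: Pixels, box: Box) -> Pixels:
    """Cut a rectangular region out of the image."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


def split_chart(image: Pixels, regions: Dict[str, Box]) -> Dict[str, Pixels]:
    """Return one cropped sub-image per named region."""
    return {name: crop(image, box) for name, box in regions.items()}


# Toy 8x6 image whose pixel value encodes its position (row * 8 + column)
image = [[r * 8 + c for c in range(8)] for r in range(6)]

# Hypothetical detector output: title on top, graph on the left, legend on the right
regions = {
    "title": (0, 0, 8, 1),
    "graph": (0, 1, 6, 6),
    "legend": (6, 1, 8, 6),
}

parts = split_chart(image, regions)
```

Each cropped part can then be sent to the Vision LLM with a prompt specific to that region, for example asking only for series names when the input is the legend.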

Q: Is it usable in security-critical industries?

Yes. Instead of calling cloud APIs from OpenAI or Anthropic, you can host open-source models such as Llama 3.2 Vision or Qwen2-VL on-premises and keep all processing inside your internal network.

Recommended Resources

Tools & Frameworks

- LlamaIndex - Data framework for LLM applications

- ChromaDB - Open-source vector database

- OpenAI Vision API - Image understanding API

Books & Articles

- “Building LLM Applications” - Practical guide for LLM application development

- “Multimodal Machine Learning” - Technical guide for multimodal AI

