Introduction: Why Does LLM Development Fail?

While LLM (Large Language Model) development is accelerating in 2025, many projects are struggling. According to Gartner research, 85% of AI projects fail to deliver expected results. Why does this happen?

In this article, we explain 7 common failure patterns in LLM development and specific solutions based on practical experience.

7 Common Failure Patterns and Solutions

1. Unclear Requirements Definition

Problem: Starting development without clarifying what problem the LLM is supposed to solve

Solution:

- Clearly define success metrics (KPIs) before starting

- Quantify expected effects (e.g., “reduce customer support response time by 30%”)

- Create a simple prototype to validate assumptions
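A KPI such as "reduce customer support response time by 30%" is easier to validate when the baseline and target are written down explicitly. Below is a minimal sketch. The `Kpi` class, its field names, and the example numbers are hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    """A success metric agreed on before development starts (hypothetical structure)."""
    name: str
    baseline: float          # value measured before the LLM system is introduced
    target_reduction: float  # e.g. 0.30 means "reduce by 30%"

    def reduction(self, measured: float) -> float:
        """Relative reduction achieved, as a fraction of the baseline."""
        return (self.baseline - measured) / self.baseline

    def is_met(self, measured: float) -> bool:
        """True if the measured value meets or beats the agreed target."""
        return self.reduction(measured) >= self.target_reduction


# Hypothetical numbers: average first-response time in minutes.
response_time = Kpi("avg_first_response_minutes", baseline=40.0, target_reduction=0.30)

prototype_result = 26.0  # measured during a small prototype trial
print(f"{response_time.reduction(prototype_result):.0%} reduction, "
      f"target met: {response_time.is_met(prototype_result)}")
```

Running the same check against each prototype iteration turns "validate assumptions" into a yes/no answer rather than a judgment call.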

2. Inadequate Data Preprocessing

Problem: Poor quality training data or retrieval documents leading to degraded output quality

Solution:

- Implement data cleaning pipelines

- Remove duplicates and noise

- Add appropriate metadata

- Validate data quality before training
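The four steps above can be combined into one small pipeline. The sketch below makes several assumptions. Input is a list of `(source, raw_text)` pairs. Noise is limited to HTML tags and very short fragments. Duplicates are detected by hashing normalized, lowercased text. `min_chars` is a hypothetical threshold. Near-duplicate detection (e.g. MinHash) is out of scope.

```python
import hashlib
import re
from dataclasses import dataclass, field


@dataclass
class Document:
    text: str
    metadata: dict = field(default_factory=dict)


def normalize(text: str) -> str:
    """Strip simple HTML tags, collapse whitespace, and trim."""
    text = re.sub(r"<[^>]+>", " ", text)  # crude tag removal (noise)
    text = re.sub(r"\s+", " ", text)
    return text.strip()


def clean_corpus(raw_docs: list[tuple[str, str]], min_chars: int = 20) -> list[Document]:
    """Clean, deduplicate, filter, and annotate (source, text) pairs."""
    seen_hashes: set[str] = set()
    cleaned: list[Document] = []
    for source, raw in raw_docs:
        text = normalize(raw)
        if len(text) < min_chars:
            continue  # drop noise such as empty pages or navigation fragments
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen_hashes:
            continue  # duplicate after normalization and lowercasing
        seen_hashes.add(digest)
        # Metadata makes it possible to filter and cite documents later.
        cleaned.append(Document(text, {"source": source, "chars": len(text), "sha256": digest}))
    return cleaned


def validate(docs: list[Document]) -> None:
    """Basic quality gate to run before indexing or training."""
    if not docs:
        raise ValueError("no documents survived cleaning")
    for doc in docs:
        if not doc.metadata.get("source"):
            raise ValueError("every document needs a source")


raw = [
    ("faq.html", "<p>Refunds are processed   within 5 business days.</p>"),
    ("faq_copy.html", "Refunds are processed within 5 business days."),
    ("nav.html", "<nav>Home</nav>"),
]
docs = clean_corpus(raw)
validate(docs)
print(len(docs), docs[0].metadata["source"])
```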

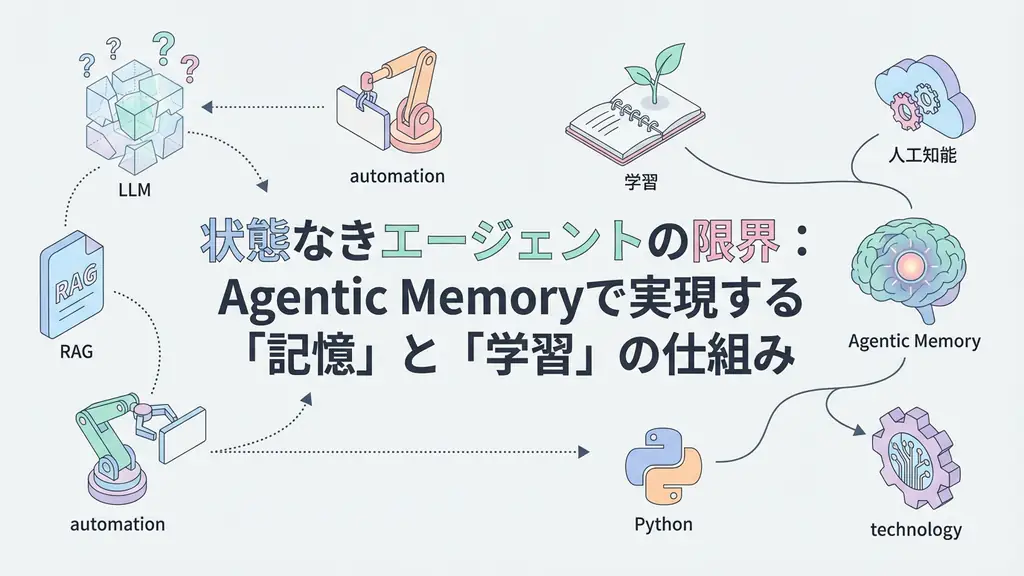

3. Hallucination Issues

Problem: LLM generating plausible but incorrect information

Solution:

- Implement RAG (retrieval-augmented generation) to ground answers in retrieved documents

- Add fact-checking layers

- Use lower temperature for factual tasks

- Include source citations in outputs
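Here is a minimal sketch of how three of these measures fit together: retrieval, a prompt that demands citations, and a citation check as a simple fact-checking layer. Several pieces are assumptions for illustration only. The in-memory `KNOWLEDGE_BASE` stands in for a real vector database. The keyword-overlap ranking replaces embedding similarity. The `GENERATION_SETTINGS` dict shows where a low temperature would be passed. The LLM call itself is omitted.

```python
import re

# Hypothetical in-memory knowledge base; in practice this would be a vector database.
KNOWLEDGE_BASE = {
    "doc-1": "Our support desk is open Monday to Friday, 9:00 to 18:00.",
    "doc-2": "Refund requests must be submitted within 30 days of purchase.",
    "doc-3": "Premium plans include priority email support.",
}

# Hypothetical settings for the (omitted) model call: low temperature for factual Q&A.
GENERATION_SETTINGS = {"temperature": 0.0}


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by keyword overlap (a stand-in for embedding similarity search)."""
    q = tokenize(query)
    scored = sorted(
        KNOWLEDGE_BASE.items(),
        key=lambda item: len(q & tokenize(item[1])),
        reverse=True,
    )
    return [(doc_id, text) for doc_id, text in scored[:k] if q & tokenize(text)]


def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Ground the model in retrieved context and require [doc-id] citations."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using ONLY the context below. Cite sources like [doc-1]. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


def check_citations(answer: str, passages: list[tuple[str, str]]) -> bool:
    """Reject answers that cite nothing, or cite documents that were not retrieved."""
    cited = set(re.findall(r"\[(doc-\d+)\]", answer))
    allowed = {doc_id for doc_id, _ in passages}
    return bool(cited) and cited <= allowed


query = "How many days do I have to request a refund?"
passages = retrieve(query)
prompt = build_prompt(query, passages)
# Assume the model returned this answer for the prompt above.
answer = "Refund requests must be submitted within 30 days [doc-2]."
print(check_citations(answer, passages))
```

A citation check only confirms that the answer points at retrieved sources. It does not prove that the cited text supports the claim, so it complements rather than replaces other fact-checking.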

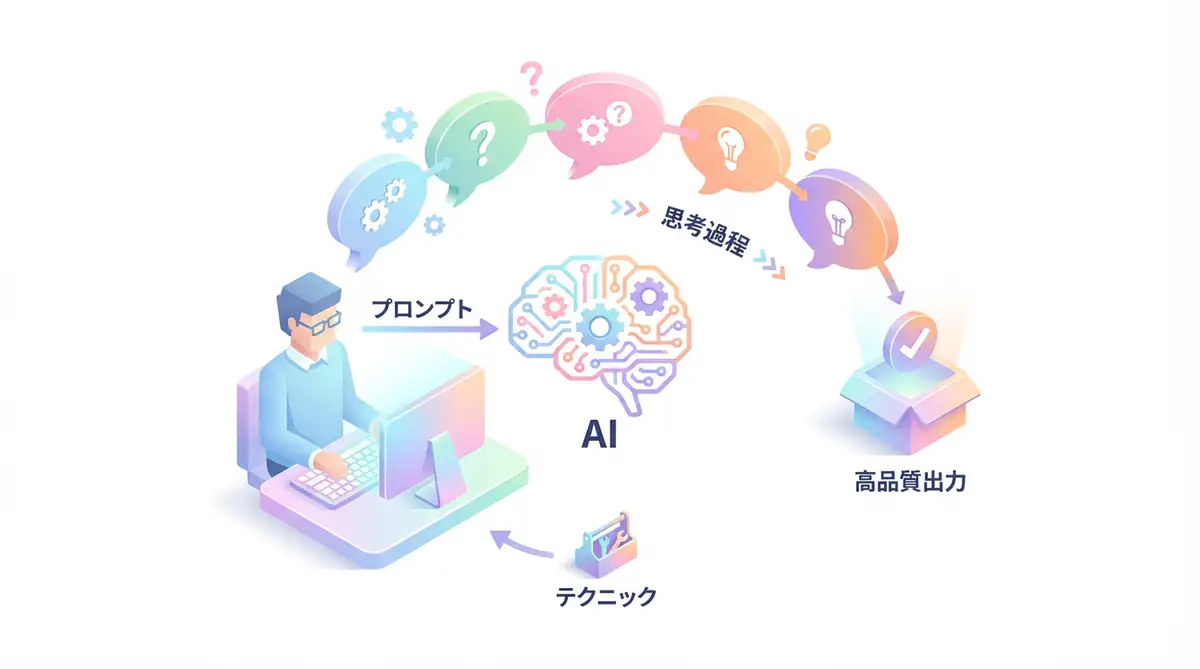

4. Poor Prompt Engineering

Problem: Vague prompts leading to inconsistent outputs

Solution:

- Use structured prompts with clear instructions

- Include few-shot examples

- Implement prompt versioning

- A/B test different prompt variations
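One way to combine structured instructions, few-shot examples, versioning, and A/B testing is sketched below. The `PromptTemplate` class, the version ids, and the ticket labels are hypothetical. Users are assigned to variants by hashing their id, so each user consistently sees the same prompt version.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    version: str
    instructions: str
    examples: tuple[tuple[str, str], ...]  # few-shot (input, output) pairs

    def render(self, user_input: str) -> str:
        shots = "\n\n".join(f"Input: {i}\nOutput: {o}" for i, o in self.examples)
        return f"{self.instructions}\n\n{shots}\n\nInput: {user_input}\nOutput:"


# Hypothetical registry: every change to a prompt gets a new version id.
PROMPTS = {
    "classify-v1": PromptTemplate(
        "classify-v1",
        "Classify the ticket as BILLING, TECHNICAL, or OTHER. Reply with one word.",
        (("I was charged twice.", "BILLING"),),
    ),
    "classify-v2": PromptTemplate(
        "classify-v2",
        "You are a support triage assistant. Classify the ticket as exactly one of: "
        "BILLING, TECHNICAL, OTHER. Reply with the label only.",
        (("I was charged twice.", "BILLING"), ("The app crashes on login.", "TECHNICAL")),
    ),
}


def assign_variant(user_id: str, variants: list[str], experiment: str = "classify-ab") -> str:
    """Deterministically bucket a user so they always see the same prompt version."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


version = assign_variant("user-42", ["classify-v1", "classify-v2"])
prompt = PROMPTS[version].render("My invoice shows the wrong amount.")
print(version)
print(prompt)
```

Logging `version` alongside each model output lets you compare the variants' quality later with the evaluation pipeline.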

5. Inadequate Evaluation

Problem: No proper evaluation framework to measure quality

Solution:

- Define evaluation metrics (accuracy, relevance, safety)

- Create test datasets

- Implement automated evaluation pipelines

- Include human evaluation for critical tasks
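A minimal automated evaluation loop over a test dataset might look like the sketch below. Several parts are assumptions. Accuracy here is normalized exact match, which only suits short factual answers. Relevance and safety would need other scorers, such as rubric-based or human grading. `stub_model` replaces a real LLM call so the pipeline runs offline. Failed and critical cases are routed to a human review queue.

```python
import re
from typing import Callable

# Hypothetical test dataset: (question, expected answer, is_critical)
TEST_SET = [
    ("What is the refund window?", "30 days", False),
    ("Can I share my password with support?", "No", True),
    ("What are support hours?", "Monday to Friday, 9:00 to 18:00", False),
]


def normalize(text: str) -> str:
    """Lowercase, drop a trailing period, and collapse whitespace before comparing."""
    return re.sub(r"\s+", " ", text.strip().lower().rstrip("."))


def evaluate(model: Callable[[str], str]) -> dict:
    """Run every test case, compute exact-match accuracy, and queue cases for human review."""
    correct = 0
    needs_review = []
    for question, expected, is_critical in TEST_SET:
        output = model(question)
        ok = normalize(output) == normalize(expected)
        correct += int(ok)
        # Failures and all critical cases go to a human reviewer.
        if not ok or is_critical:
            needs_review.append({"question": question, "expected": expected, "output": output})
    return {"accuracy": correct / len(TEST_SET), "needs_review": needs_review}


def stub_model(question: str) -> str:
    """Stand-in for a real LLM call."""
    canned = {
        "What is the refund window?": "30 days.",
        "Can I share my password with support?": "No",
    }
    return canned.get(question, "I don't know")


report = evaluate(stub_model)
print(f"accuracy={report['accuracy']:.2f}, review queue={len(report['needs_review'])}")
```

Running this on every prompt or model change, for example in CI, makes regressions visible before they reach users.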

6. Scalability Issues

Problem: Architecture that works for prototypes fails in production

Solution:

- Design for horizontal scaling from the start

- Implement caching strategies

- Use efficient vector databases

- Monitor resource usage
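The caching and monitoring points can be illustrated with a small response cache, sketched below. The assumptions: an in-process LRU cache keyed by model name plus normalized prompt, and hit/miss counters as a simple usage metric. In a horizontally scaled deployment, the cache would live in a shared store such as Redis so that all instances benefit. The `slow_llm` function is a hypothetical stand-in for a model call.

```python
import hashlib
import time
from collections import OrderedDict
from typing import Callable


class ResponseCache:
    """In-process LRU cache for LLM responses, with hit-rate counters for monitoring."""

    def __init__(self, max_entries: int = 1000):
        self.max_entries = max_entries
        self._store: OrderedDict[str, str] = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        normalized = " ".join(prompt.split()).lower()
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_compute(self, model: str, prompt: str, compute: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            self._store.move_to_end(key)  # mark as recently used
            return self._store[key]
        self.misses += 1
        result = compute(prompt)
        self._store[key] = result
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)  # evict least recently used
        return result

    @property
    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def slow_llm(prompt: str) -> str:
    """Hypothetical stand-in for an expensive model call."""
    time.sleep(0.01)
    return f"answer to: {prompt}"


cache = ResponseCache(max_entries=2)
for p in ["What is RAG?", "what is  RAG?", "Define KPI."]:
    cache.get_or_compute("model-a", p, slow_llm)
print(f"hits={cache.hits} misses={cache.misses} hit_rate={cache.hit_rate:.2f}")
```

Caching is safest for deterministic, low-temperature requests. For creative or user-specific outputs, returning a cached answer may not be appropriate.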

7. Security and Privacy Risks

Problem: Sensitive data leakage or prompt injection attacks

Solution:

- Implement input sanitization

- Use data masking for PII

- Add rate limiting

- Conduct regular security audits
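The sketch below shows three of these measures in code: input checks, PII masking, and rate limiting. It is illustrative only. The injection patterns are simple heuristics that attackers can easily rephrase around, so they are no substitute for layered defenses such as privilege separation and output filtering. The email and phone regexes are rough approximations. The token-bucket parameters are hypothetical.

```python
import re
import time

# Heuristic patterns only; they catch obvious injection attempts, not all of them.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?system prompt", re.IGNORECASE),
]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def sanitize(user_input: str, max_chars: int = 2000) -> str:
    """Reject obviously suspicious input, strip control characters, and cap length."""
    if any(p.search(user_input) for p in INJECTION_PATTERNS):
        raise ValueError("possible prompt injection")
    cleaned = re.sub(r"[\x00-\x08\x0b\x0c\x0e-\x1f]", "", user_input)
    return cleaned[:max_chars]


def mask_pii(text: str) -> str:
    """Replace emails and phone-number-like sequences before logging or sending to a model."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


class RateLimiter:
    """Token bucket: refills `rate` tokens per second, holding at most `capacity`."""

    def __init__(self, rate: float, capacity: int):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.updated = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


limiter = RateLimiter(rate=1.0, capacity=2)
message = sanitize("My email is jane@example.com, call +1 555-123-4567.")
masked = mask_pii(message)
decisions = [limiter.allow() for _ in range(3)]
print(masked)
print(decisions)
```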

Best Practices Summary

- Start with clear requirements and KPIs

- Invest in data quality and preprocessing

- Use RAG before considering fine-tuning

- Implement proper evaluation frameworks

- Design for production scalability

- Prioritize security and privacy

🛠 Useful Tools for LLM Development

| Tool | Purpose | Description |
|---|---|---|
| LangChain | LLM development | Framework for building LLM applications |
| Pinecone | Vector search | Scalable vector database for RAG |
| Weights & Biases | Experiment tracking | Monitor and compare LLM experiments |

FAQ

Q1: What is the most common cause of LLM development failure?

The most common cause is unclear requirements. Many projects start without defining what problem the LLM should solve or how success will be measured, which leads to wasted investment.

Q2: How can we reduce hallucinations in LLM?

Key measures include RAG, prompt engineering, temperature adjustment, and post-processing fact-checking. Combining multiple approaches is most effective.

Q3: What is the difference between fine-tuning and RAG?

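Fine-tuning updates the model's weights with additional training data. RAG leaves the model unchanged: it retrieves relevant documents from an external knowledge source at query time and adds them to the prompt. As a rule of thumb, start with RAG and consider fine-tuning only when RAG and prompt engineering cannot reach the required quality.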

Summary

LLM development requires more than just calling APIs. Success comes from systematic approaches covering requirements definition, data preparation, prompt engineering, evaluation, and production deployment.

📚 Recommended Books

1. LLM Practical Introduction

- Target Audience: Intermediate engineers

- Why Recommended: Covers fine-tuning, RAG, and prompt engineering