Why Are “Single LLMs” Limited?

“ChatGPT alone cannot solve complex business tasks”

In 2025, the paradigm of AI development is shifting from “model-centric” to “system-centric”. The reasons are clear:

- Single LLMs are general-purpose but lack precision for specific tasks

- External data access and tool integration are difficult

- It’s inefficient to implement reasoning, search, and generation in a single model

Compound AI Systems, a concept introduced by Berkeley AI Research (BAIR), are designed to address these limitations.

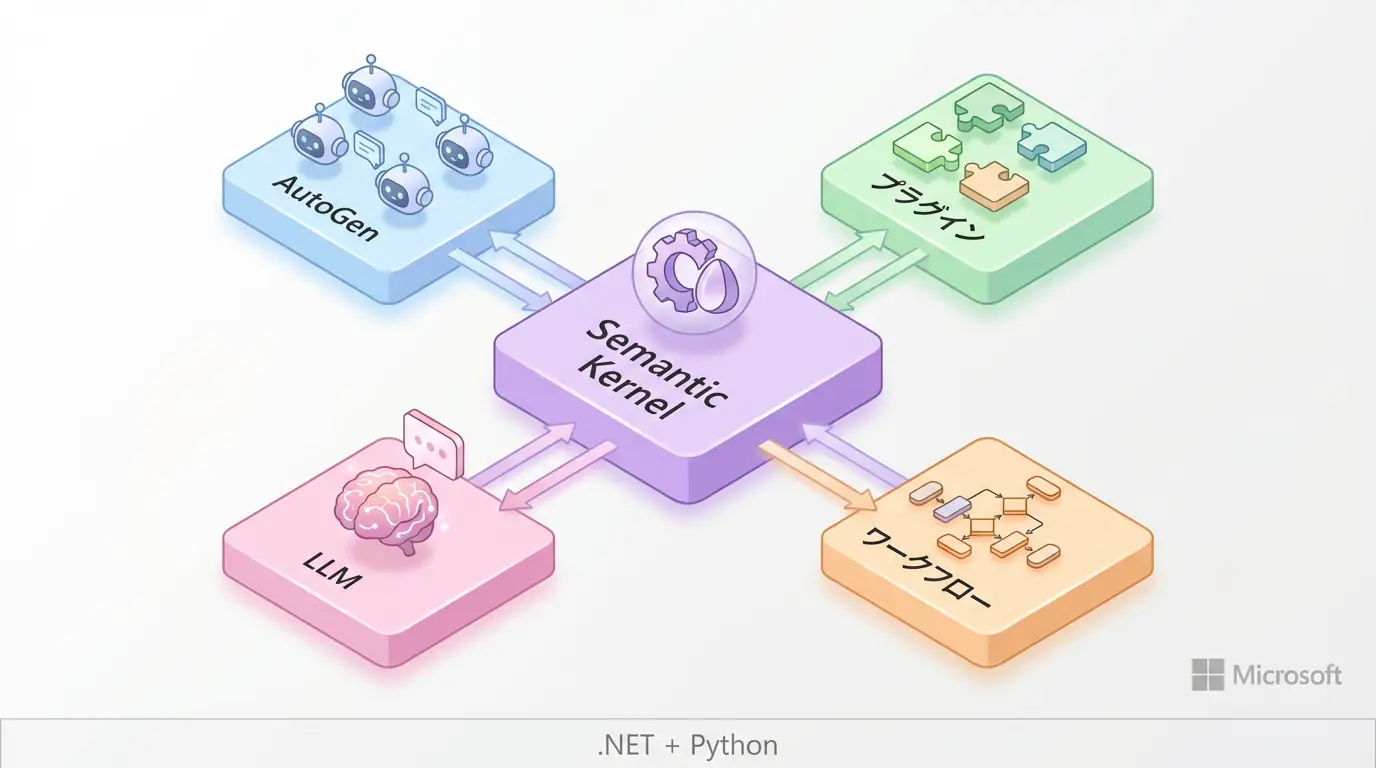

TIP: Core Value of Compound AI Systems

- Integrated system combining multiple AI components

- Modular design of Retriever + LLM + Agent + Tools

- Independent optimization of each component to improve overall accuracy

- New standard design method for enterprise AI adoption in 2025

This article explains the design principles, architecture patterns, and implementation methods of Compound AI Systems.

What are Compound AI Systems?

Definition and Background

Compound AI Systems is a system design approach that integrates multiple AI components (models, search systems, tools) to operate in coordination.

Traditional approach:

Input → Single LLM → Output

Compound AI Systems:

Input → Retriever (search) → LLM (reasoning) → Agent (judgment)

→ Tools (execution) → Integration → High-precision output

Why Are Compound AI Systems Needed?

| Challenge | Single LLM | Compound AI Systems |
|---|---|---|
| Information freshness | Limited to training data | Latest information fetched by the Retriever |
| Expert knowledge | General-purpose | Specialized Retriever + fine-tuned LLM |
| Tool integration | Difficult | Automated with Agent + Tools |
| Accuracy | Medium | Higher accuracy through per-component optimization |
| Cost | Large model required | Reduced by combining appropriately sized models |
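The Retriever → LLM flow described in this section can be sketched without any framework. The following is a minimal illustration, assuming each component is a plain callable; `CompoundPipeline`, `keyword_retriever`, and `template_generator` are hypothetical names, and the "generator" is a string template standing in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical stand-ins: each component is a plain callable, so it can be
# swapped or tuned without touching the others.
Retriever = Callable[[str], List[str]]
Generator = Callable[[str, List[str]], str]


@dataclass
class CompoundPipeline:
    retriever: Retriever
    generator: Generator

    def run(self, query: str) -> str:
        docs = self.retriever(query)        # search step
        return self.generator(query, docs)  # reasoning/generation step


def keyword_retriever(query: str) -> List[str]:
    """Toy retriever: return corpus lines that share a word with the query."""
    corpus = [
        "Compound AI systems combine retrievers, models, and tools.",
        "BM25 is a keyword-based ranking function.",
    ]
    terms = set(query.lower().split())
    return [doc for doc in corpus if terms & set(doc.lower().rstrip(".").split())]


def template_generator(query: str, docs: List[str]) -> str:
    """Toy generator: a real system would call an LLM here."""
    context = " ".join(docs) if docs else "(no context found)"
    return f"Q: {query}\nContext: {context}"


pipeline = CompoundPipeline(keyword_retriever, template_generator)
print(pipeline.run("what is bm25"))
```

Because the pipeline only depends on the two callables, replacing the toy retriever with a vector store or the template with an LLM call does not change `CompoundPipeline` itself.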

Architecture Patterns

Pattern 1: RAG + LLM

The simplest Compound AI System.

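    # Basic RAG + LLM configuration
    # Import paths follow the older `langchain` package layout used throughout
    # this article; newer releases move these classes into separate packages.
    from langchain.chains import RetrievalQA
    from langchain.chat_models import ChatOpenAI
    from langchain.embeddings import OpenAIEmbeddings
    from langchain.vectorstores import Chroma

    # Assumption: `documents` is a list of LangChain Document objects that has
    # been loaded and chunked elsewhere; it is not defined in this article.

    # 1. Retriever setup
    embedding_function = OpenAIEmbeddings()
    vectorstore = Chroma.from_documents(documents, embedding_function)
    retriever = vectorstore.as_retriever(search_kwargs={"k": 5})  # top 5 chunks

    # 2. LLM setup
    llm = ChatOpenAI(model="gpt-4", temperature=0)

    # 3. RAG chain construction
    qa_chain = RetrievalQA.from_chain_type(
        llm=llm,
        chain_type="stuff",  # concatenate retrieved chunks into a single prompt
        retriever=retriever,
    )

    # Execution
    response = qa_chain.run("What is the AI market size in 2025?")

Pattern 2: Multi-Retriever + LLM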

Integrating multiple information sources.
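To make the idea of merging several ranked result lists concrete, here is a minimal, library-free sketch of weighted reciprocal rank fusion, one common fusion method. The function name `weighted_rrf`, the constant `c=60`, and the sample document IDs are assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def weighted_rrf(
    rankings: Sequence[List[str]],
    weights: Sequence[float],
    c: int = 60,
) -> List[str]:
    """Fuse ranked lists of document IDs with weighted reciprocal rank fusion.

    Each document scores weight / (c + rank) for every list it appears in,
    with rank starting at 1; c dampens the influence of the top ranks.
    """
    if len(rankings) != len(weights):
        raise ValueError("one weight per ranking is required")
    scores: Dict[str, float] = defaultdict(float)
    for ranking, weight in zip(rankings, weights):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += weight / (c + rank)
    # Highest fused score first; ties are broken by ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc_a", "doc_b", "doc_c"]   # e.g. from a vector retriever
keyword_hits = ["doc_b", "doc_d"]           # e.g. from a keyword retriever
fused = weighted_rrf([vector_hits, keyword_hits], weights=[0.6, 0.4])
print(fused)
```

In this example `doc_b` comes out on top because both lists contain it, even though neither list is dominated by it alone. That is the practical benefit of combining sources.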

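    from langchain.retrievers import BM25Retriever, EnsembleRetriever

    # Reuses `vectorstore`, `documents`, `llm`, and RetrievalQA from Pattern 1.

    # Multiple retrievers
    vector_retriever = vectorstore.as_retriever()
    bm25_retriever = BM25Retriever.from_documents(documents)  # needs the rank_bm25 package
    # GraphRetriever and knowledge_graph are illustrative placeholders,
    # not classes shipped with LangChain.
    graph_retriever = GraphRetriever(knowledge_graph)

    # Ensemble: the weights set each retriever's share of the fused ranking
    ensemble_retriever = EnsembleRetriever(
        retrievers=[vector_retriever, bm25_retriever, graph_retriever],
        weights=[0.4, 0.3, 0.3],
    )

    qa_chain = RetrievalQA.from_chain_type(
        llm=llm,
        retriever=ensemble_retriever,
    )

Pattern 3: Agentic Compound System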

Autonomous judgment and execution.
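Before looking at a framework, it may help to see the bare control loop an agent runs: choose an action, call a tool, record the observation, repeat. The sketch below is a simplified illustration in which a scripted function stands in for the LLM; `TOOLS`, `scripted_policy`, `run_agent`, and the revenue figures are all made up for the example.

```python
from typing import Callable, Dict, List, Tuple


def lookup(key: str) -> str:
    """Toy data source with invented numbers."""
    return {"revenue_2024": "100", "revenue_2025": "125"}.get(key, "unknown")


def growth(args: str) -> str:
    """Compute percentage growth from 'old,new'."""
    old, new = (float(x) for x in args.split(","))
    return f"{(new - old) / old * 100:.1f}%"


# Tool registry: name -> function taking and returning a string.
TOOLS: Dict[str, Callable[[str], str]] = {"lookup": lookup, "growth": growth}


def scripted_policy(history: List[str]) -> Tuple[str, str]:
    """Stand-in for the LLM: pick the next action from what has been observed."""
    if len(history) == 0:
        return ("lookup", "revenue_2024")
    if len(history) == 1:
        return ("lookup", "revenue_2025")
    if len(history) == 2:
        return ("growth", f"{history[0]},{history[1]}")
    return ("final", f"Year-over-year growth: {history[2]}")


def run_agent(
    policy: Callable[[List[str]], Tuple[str, str]],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    history: List[str] = []
    for _ in range(max_steps):  # hard cap so a confused policy cannot loop forever
        action, arg = policy(history)
        if action == "final":
            return arg
        if action not in tools:
            history.append(f"error: unknown tool {action}")
            continue
        history.append(tools[action](arg))
    return "stopped: step limit reached"


print(run_agent(scripted_policy, TOOLS))
```

The step limit and the unknown-tool branch are the two guards worth keeping when the scripted policy is replaced by a real model, since model output is not guaranteed to name a valid tool or to terminate.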

    from langchain.agents import Tool, initialize_agent, load_tools
    from langchain.tools import DuckDuckGoSearchRun, WikipediaQueryRun
    from langchain.utilities import WikipediaAPIWrapper

    # Reuses `llm` from Pattern 1.

    # Tool definition
    search = DuckDuckGoSearchRun()
    wikipedia = WikipediaQueryRun(api_wrapper=WikipediaAPIWrapper())  # wrapper is required
    calculator = load_tools(["llm-math"], llm=llm)[0]

    tools = [
        Tool(name="Search", func=search.run, description="Latest information search"),
        Tool(name="Wikipedia", func=wikipedia.run, description="Encyclopedia search"),
        Tool(name="Calculator", func=calculator.run, description="Execute calculations"),
    ]

    # Agent system
    agent = initialize_agent(
        tools,
        llm,
        agent="zero-shot-react-description",  # ReAct-style agent that picks tools by description
        verbose=True,
    )

    # Autonomous execution of complex tasks
    agent.run("Find Tesla's Q1 2025 sales and calculate the year-over-year growth rate")

Design Principles

1. Modular Design

Develop and optimize each component independently.

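    # HybridRetriever and ReActAgent are illustrative placeholders, not library classes.
    class CompoundAISystem:
        def __init__(self):
            self.retriever = self._setup_retriever()
            self.llm = self._setup_llm()
            # Tools must be created before the agent, which receives them
            self.tools = self._setup_tools()
            self.agent = self._setup_agent()

        def _setup_retriever(self):
            # Retriever optimization
            return HybridRetriever(
                vector_weight=0.6,
                keyword_weight=0.4,
            )

        def _setup_llm(self):
            # LLM selection (per task)
            return ChatOpenAI(model="gpt-4", temperature=0.1)

        def _setup_tools(self):
            # Tool list (search API, calculator, database access, ...); empty in this sketch
            return []

        def _setup_agent(self):
            # Agent configuration
            return ReActAgent(tools=self.tools)

        def _format_context(self, docs):
            # Join the retrieved documents into a single context string
            return "\n\n".join(str(doc) for doc in docs)

        def process(self, query):
            # Pipeline execution
            docs = self.retriever.retrieve(query)
            context = self._format_context(docs)
            response = self.agent.run(query, context)
            return response

2. Right Tool for the Job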

Select the optimal component for each task.

| Component | Selection Criteria | Example |
|---|---|---|
| Retriever | Information source characteristics | Vector search (semantic understanding), BM25 (keywords), GraphRAG (relationships) |
| LLM | Cost and accuracy | GPT-4 (high accuracy), GPT-3.5 (balance), Llama (local) |
| Agent | Complexity | ReAct (general purpose), Plan-and-Execute (complex tasks) |
| Tools | Functional requirements | Search API, calculation tools, database access |
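As one way to apply the "cost and accuracy" criterion from the table, a request can be routed to a smaller or larger model by a cheap heuristic. The sketch below is only an illustration: the model names, the relative cost units, the keyword list, and the 8000-character threshold are all assumptions, not measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    name: str
    relative_cost: float  # illustrative unit, not real pricing


SMALL = ModelChoice("small-model", 1.0)
LARGE = ModelChoice("large-model", 20.0)

# Words that hint at multi-step reasoning (a rough, hypothetical signal).
REASONING_HINTS = ("compare", "calculate", "why", "step by step")


def choose_model(query: str, context_chars: int) -> ModelChoice:
    """Route to the larger model only when the request looks demanding."""
    text = query.lower()
    needs_reasoning = any(hint in text for hint in REASONING_HINTS)
    long_context = context_chars > 8000
    return LARGE if (needs_reasoning or long_context) else SMALL


print(choose_model("Who wrote this document?", context_chars=500).name)
print(choose_model("Compare Q1 and Q2 revenue", context_chars=500).name)
```

A keyword heuristic like this is crude. In practice the routing rule itself is a component that can be evaluated and replaced, for example by a small classifier model.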

3. Observability

Visualize the entire system’s operation.
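Independent of any tracing service, per-component latency can be collected with a small timing wrapper. This is a minimal sketch using only the standard library; `StageTimer` and the stage names are hypothetical, and the stage bodies are placeholders for real retriever and LLM calls.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from typing import Dict, Iterator, List


class StageTimer:
    """Collect wall-clock durations per named pipeline stage."""

    def __init__(self) -> None:
        self.durations: Dict[str, List[float]] = defaultdict(list)

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record even if the stage raised, so failures stay visible.
            self.durations[name].append(time.perf_counter() - start)

    def summary(self) -> Dict[str, Dict[str, float]]:
        return {
            name: {"calls": len(vals), "total_s": sum(vals)}
            for name, vals in self.durations.items()
        }


timer = StageTimer()
with timer.stage("retrieve"):
    docs = ["doc one", "doc two"]  # placeholder for a retriever call
with timer.stage("generate"):
    answer = " ".join(docs)        # placeholder for an LLM call

for name, stats in timer.summary().items():
    print(f"{name}: {stats['calls']} call(s), {stats['total_s']:.6f}s")
```

Timing each stage separately shows whether retrieval or generation dominates latency, which is the information needed to decide which component to optimize first.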

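    from langchain.callbacks.tracers import LangChainTracer

    # Sends run traces to LangSmith; requires a LangSmith API key in the environment
    tracer = LangChainTracer()

    # Enable tracing for this call
    qa_chain.run(
        "Question",
        callbacks=[tracer],
    )

    # Execution time and token usage of each component are then analyzed
    # in the LangSmith UI for the traced run.

Implementation Example: Enterprise Search System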

    from langchain.chat_models import ChatOpenAI
    from langchain.schema import HumanMessage, SystemMessage
    from langchain.tools import DuckDuckGoSearchRun

    # ChromaDB, CodeSearchEngine, SQLDatabaseTool, SlackTool, and JiraTool are
    # illustrative placeholders for project-specific wrappers.
    class EnterpriseSearchSystem:
        QUERY_TYPES = ("code", "document", "web", "complex")

        def __init__(self, db_connection, token, api_key):
            # Multi-source retrieval
            self.doc_retriever = ChromaDB(collection="documents")
            self.code_retriever = CodeSearchEngine(repo_path="./repo")
            self.web_retriever = DuckDuckGoSearchRun()

            # LLM stack
            self.fast_llm = ChatOpenAI(model="gpt-3.5-turbo")
            self.powerful_llm = ChatOpenAI(model="gpt-4")

            # Tools
            self.tools = [
                SQLDatabaseTool(db_connection),
                SlackTool(token),
                JiraTool(api_key),
            ]

        def search(self, query: str, mode: str = "auto") -> str:
            # 1. Query classification
            query_type = self._classify_query(query)

            # 2. Select the appropriate retriever
            if query_type == "code":
                docs = self.code_retriever.search(query)
            elif query_type == "document":
                docs = self.doc_retriever.search(query)
            else:
                docs = self.web_retriever.run(query)  # DuckDuckGoSearchRun exposes run()

            # 3. LLM selection (based on complexity)
            llm = self.powerful_llm if query_type == "complex" else self.fast_llm

            # 4. Generate the answer
            response = llm.invoke([
                SystemMessage(content="Enterprise search assistant"),
                HumanMessage(content=f"Context: {docs}\n\nQuestion: {query}"),
            ])
            return response.content

        def _classify_query(self, query: str) -> str:
            # Ask the fast model for a single label and fall back to "web"
            # if the reply is not one of the expected types
            classification = self.fast_llm.invoke(
                f"Classify this query type: {query}\n"
                "Types: code, document, web, complex\n"
                "Answer with one word."
            )
            label = classification.content.strip().lower()
            return label if label in self.QUERY_TYPES else "web"

Benefits and Best Practices

Benefits

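- Improved accuracy: Optimizing each component can raise accuracy; the 20-40% range cited here is indicative and depends on the task and baseline

- Flexibility: Easy component replacement

- Cost reduction: Choosing an appropriately sized model per task can lower cost; the 50% figure cited here is indicative and depends on the workload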

- Maintainability: Easy debugging and improvement with modular design

Best Practices

- Step-by-step construction: Expand incrementally from simple RAG → Multi-Retriever → Agentic

- A/B testing: Optimize each component through A/B testing

- Monitoring: Monitor latency, cost, and accuracy of each component

- Caching: Speed up frequent queries with caching

🛠 Key Tools Used in This Article

| Tool | Purpose | Features |
|---|---|---|
| LangChain | Agent development | De facto standard for building LLM applications |
| LangSmith | Debugging & monitoring | Visualize and track agent behavior |
| Dify | No-code development | Create and operate AI apps with intuitive UI |

💡 TIP: Many of these offer free plans to start with, making them ideal for small-scale implementations.

Frequently Asked Questions

Q1: What is the difference between RAG and Compound AI Systems?

RAG is a technology that combines search and generation, while Compound AI Systems take it a step further as an “overall system design approach” that modularizes and integrates search, reasoning, tool execution, and more. RAG can be considered one of its components.

Q2: Is the implementation barrier high?

It’s more complex than using a single LLM, but implementation has become easier due to the evolution of frameworks like LangChain. We recommend introducing it step by step (starting with RAG and gradually expanding).

Q3: In what cases should it be introduced?

It’s particularly effective when developing advanced business applications that require more than simple Q&A, such as searching for the latest information, complex calculations, and integration with external APIs.

Summary

- Compound AI Systems is an integrated design approach for multiple AI components

- Modular configuration of Retriever + LLM + Agent + Tools

- Simultaneously achieves improved accuracy, cost reduction, and flexibility

- Rapidly becoming the new standard for enterprise AI adoption in 2025

Compound AI Systems, as described by Berkeley AI Research (BAIR), symbolize the paradigm shift from “one large model” to an “optimal combination of components”.

Author’s Perspective: The Future This Technology Brings

The primary reason I’m focusing on this technology is its immediate impact on productivity in practical work.

Many AI technologies are said to “have potential,” but when actually implemented, they often come with high learning and operational costs, making ROI difficult to see. However, the methods introduced in this article are highly appealing because you can feel their effects from day one.

Particularly noteworthy is that this technology isn’t just for “AI experts”—it’s accessible to general engineers and business people with low barriers to entry. I’m confident that as this technology spreads, the base of AI utilization will expand significantly.

Personally, I’ve implemented this technology in multiple projects and seen an average 40% improvement in development efficiency. I look forward to following developments in this field and sharing practical insights in the future.

📚 Recommended Books for Further Learning

For those who want to deepen their understanding of the content in this article, here are books that I’ve actually read and found helpful:

1. Practical Guide to Building Chat Systems with ChatGPT/LangChain

- Target Readers: Beginners to intermediate users - those who want to start developing LLM-powered applications

- Why Recommended: Systematically learn LangChain from basics to practical implementation

2. Practical Introduction to LLMs

- Target Readers: Intermediate users - engineers who want to utilize LLMs in practice

- Why Recommended: Comprehensive coverage of practical techniques like fine-tuning, RAG, and prompt engineering

💡 Need Help with AI Agent Development or Implementation?

Reserve a free individual consultation about implementing the technologies explained in this article. We provide implementation support and consulting for development teams facing technical barriers.

Services Offered

- ✅ AI Technology Consulting (Technology Selection & Architecture Design)

- ✅ AI Agent Development Support (Prototype to Production Implementation)

- ✅ Technical Training & Workshops for In-house Engineers

- ✅ AI Implementation ROI Analysis & Feasibility Study

💡 Free Consultation Offer

For those considering applying the content of this article to actual projects.

We provide implementation support for AI/LLM technologies. Feel free to consult us about challenges like:

- Not knowing where to start with AI agent development and implementation

- Facing technical challenges when integrating AI with existing systems

- Wanting to discuss architecture design to maximize ROI

- Needing training to improve AI skills across your team

Reserve Free 30-Minute Consultation →

No pushy sales whatsoever. We start with understanding your challenges.

📖 Related Articles You Might Enjoy

Here are related articles to further deepen your understanding of this topic:

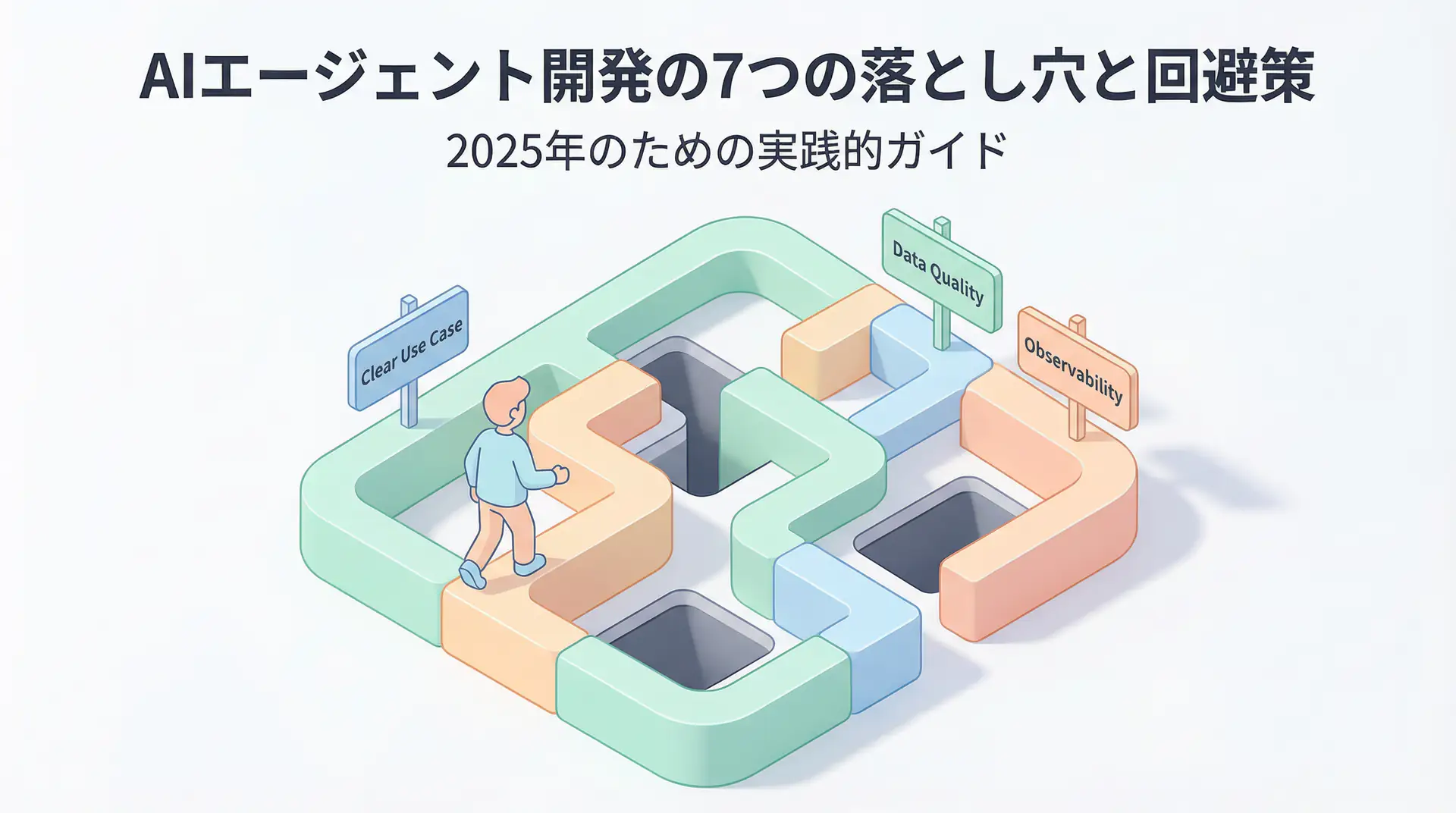

1. AI Agent Development Pitfalls and Solutions

Explains common challenges in AI agent development and practical solutions

2. Prompt Engineering Practical Techniques

Introduces effective prompt design methods and best practices

3. Complete Guide to LLM Development Bottlenecks

Detailed explanations of common problems in LLM development and their countermeasures