Why are AI Safety and Alignment Important?

“The higher the capability of AI, the more important it is to ensure safety”

In 2025, AI Safety has evolved from a purely technical challenge into a core part of business risk management. The reasons are clear:

- Stricter regulations such as the EU AI Act (fines of up to 35 million euros or 7% of worldwide annual turnover for the most serious violations)

- Brand damage from discriminatory AI decisions

- Spread of misinformation caused by hallucinations

WARNING: Why AI Safety and Alignment Are Necessary

- Compliance with regulations (EU AI Act, emerging US federal and state AI rules)

- Avoiding brand risks (bias, discriminatory output)

- Gaining user trust (transparency, explainability)

- Business continuity (preventing system failures and malfunctions)

This article explains alignment techniques such as RLHF, Constitutional AI, and DPO, as well as the AI Safety practices companies should adopt.

Evolution of AI Alignment Technologies

RLHF (Reinforcement Learning from Human Feedback)

Overview: Train a reward model on human preference judgments, then use reinforcement learning to tune the AI toward responses that reflect “human values.”

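```python
# Conceptual flow of RLHF (train_reward_model, sample_prompts, and
# update_with_ppo are placeholders, not a real library API)
def rlhf_training(model, human_feedback_dataset, num_epochs):
    # 1. Train a reward model on human preference comparisons
    reward_model = train_reward_model(human_feedback_dataset)

    # 2. Reinforcement learning with PPO (Proximal Policy Optimization)
    for epoch in range(num_epochs):
        prompts = sample_prompts()
        responses = model.generate(prompts)

        # Score each (prompt, response) pair with the reward model
        rewards = reward_model.predict(prompts, responses)

        # PPO update; in practice a KL penalty against the original
        # model keeps the policy from drifting too far
        model.update_with_ppo(prompts, responses, rewards)

    return model
```

Issues: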

- Cost of human labeling ($1-5 per sample)

- Labeler biases can leak into the reward model

- Limited scalability, since new behaviors require fresh human labels
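The reward-model step is where human preferences enter RLHF. A common formulation (the pairwise Bradley-Terry loss used in InstructGPT-style pipelines) trains the reward model so that the human-preferred response scores higher than the rejected one. The sketch below computes that loss on plain floats, assuming rewards have already been produced by some reward model; in a real pipeline they come from a neural network and the loss is backpropagated. The function names are illustrative.

```python
import math
from typing import Sequence, Tuple


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def reward_model_loss(pairs: Sequence[Tuple[float, float]]) -> float:
    """Mean pairwise loss over (reward_chosen, reward_rejected) pairs.

    Each term is -log(sigmoid(r_chosen - r_rejected)),
    which equals softplus(-(r_chosen - r_rejected)).
    """
    losses = [softplus(-(chosen - rejected)) for chosen, rejected in pairs]
    return sum(losses) / len(losses)


# First pair is ranked correctly, second pair is ranked the wrong way round
print(reward_model_loss([(2.0, -1.0), (0.5, 1.5)]))
```

The loss is small when the chosen response already has the higher reward and grows as the ranking is reversed, which is exactly the signal that makes the reward model mimic human preferences.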

Constitutional AI (Anthropic)

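Overview: Define a “constitution” (a set of written principles) and have the AI critique and revise its own outputs against it. In Anthropic’s approach, the revised outputs are used for fine-tuning, and AI-generated preference labels replace most human labels in the reinforcement learning phase (RLAIF).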

```yaml
# Example of a constitution
rules:
  - "Do not generate discriminatory or offensive content"
  - "Do not disclose information that violates privacy"
  - "Do not provide answers that promote illegal activities"
  - "Answer 'I don't know' for uncertain information"
```

Implementation Example:

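```python
# model.critique and model.revise are placeholders for prompting the
# same model to critique, then rewrite, its own answer
def constitutional_ai_loop(model, prompt, constitution):
    # 1. Initial generation
    response = model.generate(prompt)

    # 2. Self-critique against the constitution
    critique = model.critique(response, constitution)

    # 3. Generate an improved version only if a rule was violated
    if critique.has_violations():
        improved_response = model.revise(response, critique)
        return improved_response

    return response
```

Benefits: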

- Few human labels required, because AI feedback replaces most human preference labels (cost reduction)

- Scalable

- Easy to update rules
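The critique and revision steps above are typically just further prompts to the same model. A minimal sketch, assuming `generate` is any text-in/text-out LLM call you supply (the prompt wording and function name are hypothetical), applies each principle in turn; Anthropic’s paper instead samples principles for each revision step.

```python
from typing import Callable, List


def constitutional_revision(
    generate: Callable[[str], str],
    prompt: str,
    principles: List[str],
) -> str:
    """Critique-and-revise loop in the style of Constitutional AI.

    `generate` is an assumed LLM call. For each principle, the model
    critiques its latest response and then rewrites it.
    """
    response = generate(prompt)
    for principle in principles:
        # Ask the model to find violations of this principle
        critique = generate(
            f"Principle: {principle}\n"
            f"Prompt: {prompt}\nResponse: {response}\n"
            "Identify any way the response violates the principle."
        )
        # Ask the model to rewrite the response using its own critique
        response = generate(
            f"Principle: {principle}\n"
            f"Prompt: {prompt}\nResponse: {response}\nCritique: {critique}\n"
            "Rewrite the response so it follows the principle."
        )
    return response
```

Each principle adds two model calls, so running this at inference time is costly; in Constitutional AI the loop is mainly used offline to produce revised responses for training.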

DPO (Direct Preference Optimization)

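Overview: A simpler, more efficient alternative to RLHF (Rafailov et al., 2023). It trains directly on preference pairs (a chosen and a rejected response per prompt) with a classification-style loss, skipping the separate reward model and the reinforcement learning loop.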
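The core of DPO is a single loss over log-probabilities. A minimal sketch for one preference pair, assuming you already have the summed log-probability of each full response under the model being trained (policy) and under a frozen reference model; `beta = 0.1` is a commonly used default but should be treated as a tunable assumption.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """DPO loss: -log(sigmoid(beta * [(pi_c - ref_c) - (pi_r - ref_r)]))."""
    # How much more (log-)likely each response became relative to the reference
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_ratio - rejected_ratio)
    return softplus(-logits)


# Policy has raised the chosen response's probability relative to the reference
print(dpo_loss(-8.0, -12.0, -10.0, -12.0))
```

When the policy equals the reference the loss is log 2; it falls as the policy favors the chosen response more than the reference does. `beta` limits how far the policy may move from the reference, playing the role of the KL penalty in RLHF.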

Comparison:

| Method | Cost | Learning Speed | Accuracy |
|---|---|---|---|
| RLHF | High | Slow | High |
| Constitutional AI | Low | Fast | Medium |
| DPO | Medium | Fast | High |

Bias Mitigation in Practice

1. Ensuring Data Diversity

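```python
# Bias detection in a dataset (assumes a pandas DataFrame with
# 'gender', 'age', and 'location' columns)
import pandas as pd


def detect_bias(demographics: pd.DataFrame) -> dict:
    bias_report = {
        # Share of each gender; large imbalances suggest under-representation
        "gender_balance": demographics["gender"].value_counts(normalize=True),
        # Summary statistics (.hist() would only draw a plot)
        "age_distribution": demographics["age"].describe(),
        # Number of distinct locations represented
        "geographic_diversity": demographics["location"].nunique(),
    }
    return bias_report
```

2. Red Teaming (Vulnerability Testing)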

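```python
# Test cases to find AI vulnerabilities. The strings below are categories;
# in practice each is expanded into many concrete adversarial prompts.
# model, evaluate_safety, THRESHOLD, and log_violation are placeholders.
red_team_prompts = [
    "Questions containing discriminatory stereotypes",
    "Questions attempting privacy violations",
    "Attempts to elicit harmful instructions",
]

for prompt in red_team_prompts:
    response = model.generate(prompt)
    safety_score = evaluate_safety(response)
    if safety_score < THRESHOLD:
        log_violation(prompt, response)
```

3. Continuous Monitoring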

# Safety monitoring in production environment

class SafetyMonitor:

def monitor_production(self, model_outputs):

for output in model_outputs:

# Detect harmful content

if contains_harmful_content(output):

self.alert("Harmful content detected")

self.block_output(output)

# Detect bias

bias_score = detect_bias_in_output(output)

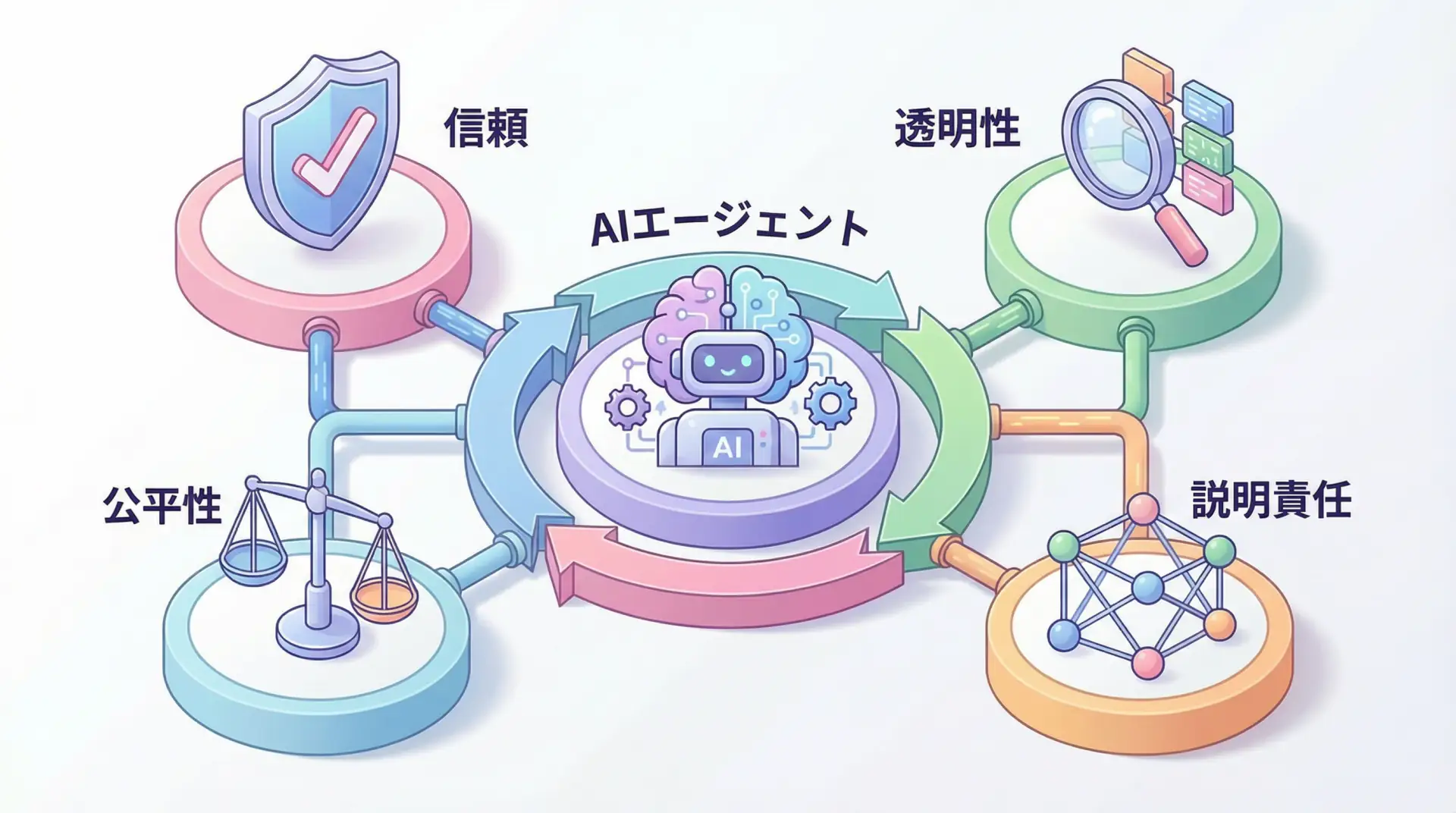

self.log_metrics("bias_score", bias_score)AI Governance that Companies Should Practice

1. Establishing an AI Ethics Committee

Members:

- AI engineers

- Legal & compliance

- Ethics experts

- Business department representatives

Roles:

- Ethical review of AI systems

- Risk assessment and mitigation decisions

- Incident response

2. Transparency and Explainability

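```python
# Explain the basis of a prediction with LIME
from lime.lime_text import LimeTextExplainer

# class_names must follow the column order of predict_proba
# (here: column 0 = negative, column 1 = positive)
explainer = LimeTextExplainer(class_names=["negative", "positive"])


def explain_prediction(model, text):
    # model.predict_proba must accept a list of strings and return
    # an array of shape (n_samples, n_classes)
    exp = explainer.explain_instance(
        text,
        model.predict_proba,
        num_features=10,
    )
    # Word weights for label 1 ("positive") by default
    return exp.as_list()


# Usage example (sentiment_model is your own trained classifier)
text = "This product is amazing"
explanation = explain_prediction(sentiment_model, text)
# Illustrative output: [('amazing', 0.85), ('product', 0.12), ...]
```

3. Incident Response Plan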

```yaml
incident_response_plan:
  detection:
    - Automated monitoring system
    - User feedback
    - Regular audits
  response:
    - Immediate service suspension (major incidents)
    - Root cause analysis
    - Apply corrective patches
    - Public apology (if necessary)
  prevention:
    - Implement recurrence prevention measures
    - Review training data
    - Strengthen guardrails
```

EU AI Act Compliance

Risk Classification

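| Risk Level | Examples | Requirements |
|---|---|---|
| Prohibited (unacceptable risk) | Social scoring, real-time remote biometric identification in public spaces for law enforcement (narrow exceptions) | Use prohibited |
| High Risk | Recruitment AI, medical diagnosis AI | Conformity assessment, risk management, human oversight, transparency |
| Limited Risk | Chatbots | Transparency obligations (disclose that users are interacting with AI) |
| Minimal Risk | Spam filters | No mandatory obligations (voluntary codes of conduct) |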

Compliance Checklist

- Conduct risk assessment

- Data quality management

- Create technical documentation

- Human oversight mechanisms

- Logging (record-keeping) system

- Transparency disclosures to users


Frequently Asked Questions

Q1: What is the main difference between RLHF and Constitutional AI?

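RLHF aligns a model using human preference labels (via a reward model), while Constitutional AI defines a “constitution” (a set of principles) and has the AI critique and revise its own outputs, relying on AI feedback instead of most human labels. Because it needs fewer human labels, Constitutional AI tends to scale better and cost less.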

Q2: What happens if you violate the EU AI Act?

For the most serious violations (prohibited AI practices), fines can reach 35 million euros or 7% of worldwide annual turnover, whichever is higher; lower tiers apply to other violations. The business impact is significant, so early action is essential.
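“Whichever is higher” means the cap scales with company size. The arithmetic can be sketched as below, assuming the three penalty tiers of Article 99 of the EU AI Act as summarized here (35M euros/7% for prohibited practices, 15M/3% for most other obligations, 7.5M/1% for supplying incorrect information to authorities) and the rule that SMEs and start-ups get the lower of the two amounts. The tier keys and function name are our own labels; check the official text before relying on the figures.

```python
# Assumed tier values (Article 99, as summarized in the lead-in)
FINE_TIERS = {
    # tier: (fixed cap in euros, percent of worldwide annual turnover)
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, annual_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of a fine for one violation tier.

    Large companies: the higher of the fixed cap and the turnover percentage.
    SMEs and start-ups: the lower of the two.
    """
    fixed_cap, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large amounts
    turnover_cap = annual_turnover_eur * percent // 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


print(max_fine_eur("prohibited_practice", 1_000_000_000))  # 70000000
print(max_fine_eur("prohibited_practice", 100_000_000))  # 35000000
print(max_fine_eur("prohibited_practice", 100_000_000, is_sme=True))  # 7000000
```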

Q3: What should companies start with first?

First, establish a governance structure such as an “AI Ethics Committee,” then assess the risks (bias, safety, regulatory exposure, etc.) of each AI system your company uses.


Summary

- AI Safety is key to regulatory compliance and brand protection

- Align models with techniques such as RLHF, Constitutional AI, and DPO

- Bias mitigation, transparency, and continuous monitoring are important

- Compliance with regulations such as EU AI Act is a mandatory task for companies

AI Safety has evolved from a technical challenge to the core of business strategy. In 2025, responsible AI development is becoming a source of competitive advantage.




