AI Development Paradigm Shift: In 2026, the Focus Moves to “How to Use” Models

If AI development through 2024 was a competition over “size”, measured in ever-larger LLM parameter counts, 2026 is shifting to a competition over “intelligence” - that is, how wisely trained models are used. With the limits to growth now visible in rising training costs and a shrinking supply of high-quality data, the developer’s role is changing from simply calling huge models to designing architectures that get the most out of them.

This article covers the following four technologies that matter for staying at the forefront of AI development in 2026:

- Inference-Time Compute: A technique that improves accuracy by giving AI “time to think”

- Small Language Model (SLM): Running AI at the edge to reduce dependence on the cloud

- Model Context Protocol (MCP): A protocol that standardizes how AI agents connect to external tools

- Spec-Driven Development (SDD): A new development process for the AI era

Use the practical knowledge here to move beyond “having AI write code somehow” and turn AI into a tool you have truly mastered.

1. Inference-Time Compute: The New Norm of Designing “Thinking Time”

Traditional AI models excelled at the “reflex” of answering immediately but were weak at thinking carefully through complex problems. Inference-Time Compute addresses this. When generating an answer, the model deliberately spends more compute (= thinking time) to check its logic and self-correct, which can substantially improve answer quality.

This concept gained attention with OpenAI’s “o1” model, and GPT-5 now includes a “real-time router” that automatically switches between a fast mode and a high-precision reasoning mode according to question difficulty, without the user having to be aware of it.

How Should Developers Approach This?

To be honest, when I first tried this technology, I failed spectacularly on cost. I naively used the high reasoning mode everywhere, thinking “as long as accuracy improves,” and turned pale when I saw the API bill at month-end. The lesson is stark: inference depth is a trade-off between accuracy and cost.

Developers in 2026 are expected to design with this trade-off in mind. Since GPT-5’s API provides parameters to control inference depth, managing “how much thinking time to allow for which process” at the code level will become standard.

- Low-cost, fast mode: Simple FAQ responses, text classification, etc.

- High-cost, high-precision mode: Contract reviews, medical diagnosis assistance, complex code generation, etc.

Whether you can design this distinction will determine the cost-effectiveness of AI applications.
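
The split above can be sketched as a small policy table. This is a minimal illustration, not any vendor's API: `TaskType`, `EFFORT_BY_TASK`, `choose_effort`, and the `"low"`/`"medium"`/`"high"` labels are all hypothetical names, and the result would need to be mapped onto whatever inference-depth parameter your provider actually exposes.

```python
from enum import Enum


class TaskType(Enum):
    FAQ = "faq"
    CLASSIFICATION = "classification"
    CONTRACT_REVIEW = "contract_review"
    DIAGNOSIS_ASSIST = "diagnosis_assist"
    CODE_GENERATION = "code_generation"


# Hypothetical policy table: which tasks justify paying for deep reasoning.
EFFORT_BY_TASK = {
    TaskType.FAQ: "low",
    TaskType.CLASSIFICATION: "low",
    TaskType.CONTRACT_REVIEW: "high",
    TaskType.DIAGNOSIS_ASSIST: "high",
    TaskType.CODE_GENERATION: "high",
}


def choose_effort(task: TaskType, budget_exhausted: bool = False) -> str:
    """Return the reasoning effort for a task, downgrading when over budget."""
    effort = EFFORT_BY_TASK.get(task, "low")
    if budget_exhausted and effort == "high":
        # Assumption: falling back to a middle tier is acceptable once the
        # monthly budget is spent; a real system might queue or reject instead.
        return "medium"
    return effort
```

Keeping the policy in one table makes the cost decision reviewable in a single place instead of being scattered across call sites.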

2. SLM: The Era When AI Running at the Edge Becomes Standard

AI is not just huge LLMs in the cloud. In 2026, Small Language Models (SLMs) that run directly on smartphones, PCs, and IoT devices will become a standard architectural choice. Microsoft’s Phi-3 and Google’s Gemma are representative examples.

The biggest advantage of SLMs is that they remove the dependency on cloud APIs, which enables low latency and strong privacy protection at the same time.

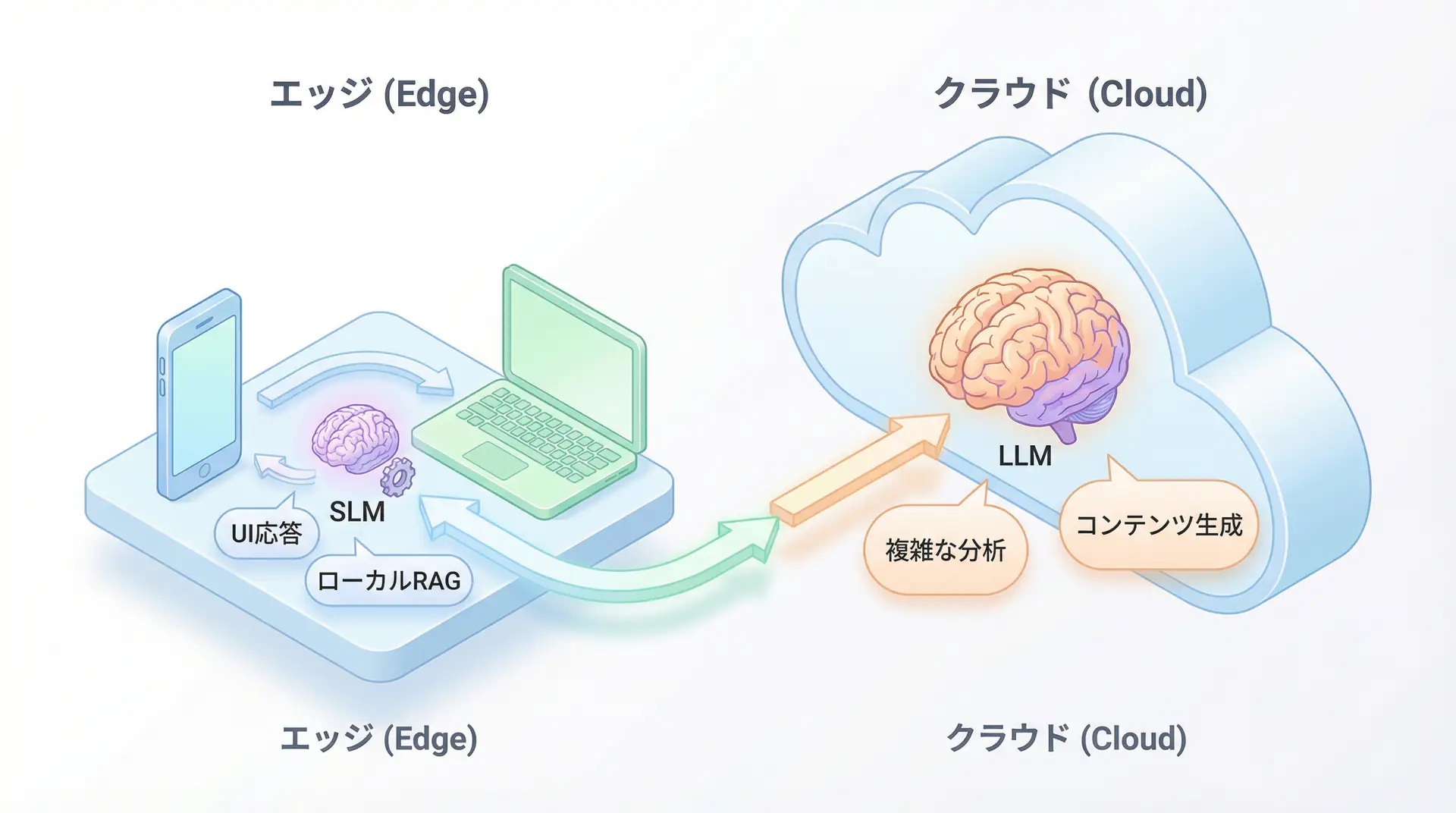

Hybrid Design with Cloud LLM

An SLM is not a degraded LLM. Thanks to advances in quantization and distillation, SLMs can perform comparably to LLMs on specific tasks. The standard architecture in 2026 will be a hybrid design of cloud and edge.

- Edge (SLM): Immediate UI responses, lightweight summarization, local RAG containing personal data

- Cloud (LLM): Complex analysis, creative content generation, large-scale data processing

This design can substantially reduce API costs without sacrificing user experience, and it can meet privacy requirements. Particularly in fields like healthcare and finance, where confidential data cannot be sent externally, on-premise and edge SLM deployment becomes a requirement.
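
A minimal sketch of the edge/cloud split described above. The `Request` fields, the `EDGE_TASKS` set, and the `route` function are hypothetical; the one rule taken from the text is that data that cannot leave the device is always handled at the edge.

```python
from dataclasses import dataclass


@dataclass
class Request:
    task: str                     # e.g. "ui_reply", "light_summary", "analysis"
    contains_personal_data: bool  # True if the payload must stay on the device


# Hypothetical set of tasks light enough for an on-device SLM.
EDGE_TASKS = {"ui_reply", "light_summary", "local_rag"}


def route(request: Request) -> str:
    """Return "edge" or "cloud" for a request."""
    if request.contains_personal_data:
        # Privacy wins over capability: confidential data never goes to the cloud.
        return "edge"
    if request.task in EDGE_TASKS:
        return "edge"
    return "cloud"
```

Checking the privacy flag first means a heavy task with personal data still stays local, even if the SLM handles it less well.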

3. MCP: Becoming the “HTTP” of AI Agent Coordination

Until now, to have AI agents use external tools like Google Drive, Slack, and databases, it was necessary to write individual API integration code for each tool, which was a major bottleneck in development. Model Context Protocol (MCP) solves this “coordination chaos”.

MCP is a protocol proposed by Anthropic and since adopted by OpenAI and Google, standardizing communication between AI models and external tools. In late 2025 it was moved under the Linux Foundation’s Agentic AI Foundation, and in 2026 it is on track to become as ubiquitous in AI agent development as “HTTP”.

Development Changes to “Configuration”

As MCP spreads, developers’ work will change significantly. Writing integration code for each API will be replaced by configuration on MCP servers: deciding which agent may use which tool for which operation. This makes adding new tools and building complex workflows that coordinate multiple agents far easier.

However, this also means that security and governance design becomes an important responsibility for developers. If the permissions given to agents are too broad, the result is a risk of unintended behavior and information leakage. Mastering MCP is synonymous with mastering this permission management.
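
The “which agent, which tool, which operation” idea can be sketched as a deny-by-default allowlist. This is an illustration of the permission concept only: the `PERMISSIONS` structure, the agent names, and `is_allowed` are hypothetical and are not the MCP configuration format or any MCP SDK API.

```python
# Hypothetical allowlist: agent -> tool -> permitted operations.
PERMISSIONS = {
    "support_agent": {"slack": {"read", "post"}, "drive": {"read"}},
    "report_agent": {"database": {"read"}},
}


def is_allowed(agent: str, tool: str, operation: str) -> bool:
    """Deny by default; allow only operations that were explicitly granted."""
    return operation in PERMISSIONS.get(agent, {}).get(tool, set())
```

Because unknown agents, tools, and operations all fall through to `False`, adding a new tool grants nothing until someone writes the permission down.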

4. Spec-Driven Development (SDD): New Development Process for the AI Era

Now that AI-generated code has become standard, defining “what to build” matters more than “how to write it”. Spec-Driven Development (SDD) is a new development method in which “specifications” written in natural language are the source of truth, from which code and tests are generated.

This means a shift to a development style of precisely describing “instruction manuals” for AI rather than writing code directly. In 2026, tools that manage the quality of these specifications themselves and synchronization between specifications and code will become important factors determining development productivity.
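
One simple way to picture “synchronization between specifications and code” is to stamp generated code with a fingerprint of the spec it came from and check that stamp later. This is a sketch under assumptions, not how any particular SDD tool works: the `# spec-fingerprint:` header and the three function names are hypothetical.

```python
import hashlib


def spec_fingerprint(spec_text: str) -> str:
    """Hash the spec after collapsing whitespace, so reflowing text is not a change."""
    normalized = " ".join(spec_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


def stamp(code: str, spec_text: str) -> str:
    """Prefix generated code with the fingerprint of the spec it was generated from."""
    return f"# spec-fingerprint: {spec_fingerprint(spec_text)}\n{code}"


def is_in_sync(stamped_code: str, spec_text: str) -> bool:
    """True if the fingerprint recorded in the code matches the current spec."""
    first_line = stamped_code.splitlines()[0] if stamped_code else ""
    return first_line == f"# spec-fingerprint: {spec_fingerprint(spec_text)}"
```

A check like this only detects that the spec changed after generation; deciding what to regenerate is still a separate step.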

🛠 Key Technologies & Tools Discussed in This Article

| Technology/Tool Name | Purpose | Features |
|---|---|---|
| GPT-5 API | Advanced AI feature implementation | Inference depth controllable by parameters |
| Microsoft Phi-3 | Edge AI, SLM | Lightweight model running on smartphones, etc. |
| MCP (Model Context Protocol) | AI agent coordination | Protocol standardizing tool coordination |

💡 TIP: MCP is still a new technology, but you can catch up with the latest specifications and implementation examples by following the Linux Foundation’s Agentic AI Foundation trends.

Author’s Verification: The Limits of “Smallness” and the Real Value Found in SLM Evaluation

SLMs (Small Language Models) are hyped as a 2026 trend, but when I actually tested Phi-3 (3.8B) and Gemma 2 (2B) in on-device environments, my first reaction was deep disappointment.

Given “the same prompts as a cloud LLM”, the SLMs readily hallucinated (produced plausible-sounding falsehoods) and ignored instructions. Japanese reasoning ability in particular dropped markedly as parameter count decreased.

On-Device Verification Data

SLM vs Cloud LLM Text Classification Accuracy Comparison:

| Model | Parameters | Accuracy | Cost per 1M tokens |
|---|---|---|---|
| GPT-4 | - | 98.2% | $10.00 |
| Phi-3 (Optimized) | 3.8B | 96.5% | $0.00 (Local) |
| Gemma 2 (Base) | 2B | 72.0% | $0.00 (Local) |

Finding: By “dividing tasks to the atomic limit” and carefully selecting few-shot prompts (multiple examples), we were able to reach accuracy comparable to GPT-4 at far better cost-performance, but only for specific text classification tasks.

To master SLMs, stop expecting “universal intelligence”. The skill of fitting an SLM to a job as a “specific tool” will be unavoidable training for engineers in 2026.
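
To make “atomic task plus few-shot examples” concrete, here is a minimal sketch of a prompt builder for a single narrow classification job. The labels and example messages are invented for illustration and are not the data behind the table above; `build_prompt` and `parse_label` are hypothetical helpers, and the actual call to a local model is left out.

```python
from typing import Optional

# Hypothetical labels and few-shot examples for one narrow task.
LABELS = ["bug", "feature_request", "question"]
FEW_SHOT_EXAMPLES = [
    ("The app crashes when I open settings.", "bug"),
    ("Please add a dark mode.", "feature_request"),
    ("How do I export my data?", "question"),
]


def build_prompt(text: str) -> str:
    """Build a prompt that asks for exactly one label and nothing else."""
    lines = [
        f"Classify the message as one of: {', '.join(LABELS)}.",
        "Answer with the label only.",
        "",
    ]
    for example, label in FEW_SHOT_EXAMPLES:
        lines += [f"Message: {example}", f"Label: {label}", ""]
    lines += [f"Message: {text}", "Label:"]
    return "\n".join(lines)


def parse_label(raw_output: str) -> Optional[str]:
    """Accept only a known label on the first line; reject anything else."""
    stripped = raw_output.strip()
    first = stripped.splitlines()[0].strip().lower() if stripped else ""
    return first if first in LABELS else None
```

Rejecting anything that is not an exact label gives the caller a clear signal to retry or escalate to a cloud LLM when the small model drifts from the instructions.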

Author’s Perspective: Developer Definition Shifts from “Code Writer” to “Flow Weaver”

In 2026, we live in a world where the relative value of “writing code” keeps declining. What the four technologies introduced here have in common is that they are meta-layer technologies for “how to accurately convey human intentions to non-deterministic AI systems”.

Inference-Time Compute extends “depth of thinking”, MCP extends “breadth of connection”, and Spec-Driven Development extends “correctness of creation”.

I believe the essential capability of future developers is converging not on typing speed but on “weaving stories” - the ability to organize complex business challenges into forms AI can solve and to connect them with MCP and SDD.

There’s no need to fear being “replaced by AI”. Where we used to write one function, we can now build one “organization” or “ecosystem”. 2026 will be the most creative era for engineers, and the era that tests “human-ness” the most.

Author’s (agenticai flow) Monologue

To be honest, when MCP first appeared, I thought “another new standard…” and felt discouraged. But the moment I connected my custom agent to Notion with MCP, the excitement changed my mind: “This is the finished form of the API we’ve always wanted.” This technology is not just a convenient tool; it has the potential to change the structure of the internet itself.

FAQ

Q1: I’m concerned about the cost of “Inference-Time Compute”. How should I manage it?

The basic approach is to control inference depth explicitly through API parameters. Use the low-cost fast mode for simple tasks and specify the high reasoning mode only for important tasks such as contract reviews.

Q2: Will SLM completely replace LLM?

Not at this point. Rather, as the verification results show, it is a matter of “the right tool for the right job”. SLMs handle UI responses and local data processing at the edge, while LLMs handle complex analysis and creative text generation in the cloud. This hybrid design will become mainstream in 2026.

🛠 Key Tools Used in This Article

Here are tools useful for actually trying the technologies explained in this article.

Python Environment

- Purpose: Environment for running code examples in this article

- Price: Free (open source)

- Recommended Points: Rich library ecosystem and community support

- Link: Python Official Site

Visual Studio Code

- Purpose: Coding, debugging, version control

- Price: Free

- Recommended Points: Rich extensions, optimal for AI development

- Link: VS Code Official Site

Summary

AI development in 2026 is not just about calling powerful models. It means controlling depth of thinking with Inference-Time Compute, designing the right division of roles between cloud and edge with SLMs, standardizing agent coordination with MCP, and systematizing collaboration with AI through Spec-Driven Development. Mastering these four technologies is the essential skill required of future developers. Start catching up today so you do not miss this wave of change.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article’s content, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate - Those who want to start developing applications using LLM

- Why Recommended: Systematically learn LangChain basics to practical implementation

- Link: View Details on Amazon

2. LLM Practical Introduction

- Target Audience: Intermediate - Engineers who want to utilize LLM in practical work

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering

- Link: View Details on Amazon
