Introduction: The Hype of AI Agent Implementation and the Serious Risks Lurking Beneath

In 2025, AI agents have moved from a technical trend to a tool that shapes enterprise competitiveness. According to McKinsey, 82% of companies plan to implement AI agents by 2026, and adoption is outpacing that of generative AI itself. Behind the hype, however, many companies are overlooking significant risks.

“80% of companies that have implemented AI agents have already encountered some kind of risk event.”

This McKinsey data point should sound an alarm. Unlike traditional IT systems, AI agents that think and act autonomously create entirely new attack surfaces. These “digital workers” behave like employees, and can sometimes become the greatest internal threat.

This article identifies five security risks specific to AI agents that many companies overlook, and explains practical governance and countermeasures that business leaders should implement immediately, along with a concrete framework.

Summary

- Current State of AI Agent Implementation: While 82% of companies plan implementation, 80% have already encountered risks.

- Five Critical Risks: In-depth analysis of “excessive permissions,” “agent hijacking,” “cascading failures,” “tool misuse,” and “autonomous vulnerability discovery.”

- Practical Governance: Four-step guide from pre-implementation risk assessment to building dynamic monitoring and audit systems.

- Success Cases: Introduction of specific initiatives by companies successfully governing AI agents.

Risk 1: Excessive Permissions - The Worst Consequences of “Good Intentions”

Have you given AI agents powerful permissions with “good intentions”? This is the most common and dangerous mistake. For example, if an agent with full access to customer databases falls victim to a malicious prompt injection attack, attackers could manipulate the agent to leak all customer information externally.

Challenge: Since AI agents execute tasks autonomously, developers often grant broad permissions “just in case.” However, this is like giving the keys to the company safe to an employee who could commit internal fraud.

Countermeasure: Strictly adhere to the Principle of Least Privilege. Grant agents only the minimal permissions necessary to execute their tasks. Additionally, implement Just-in-Time Access that dynamically grants permissions only when needed and revokes them immediately after task completion.
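As a rough illustration of how Least Privilege and Just-in-Time Access can fit together, here is a minimal Python sketch. The `JitAccessBroker` class, the agent name, and scope strings like `crm:read` are hypothetical and made up for this example; a real deployment would delegate this to your identity and access management platform.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class JitAccessBroker:
    """Grants short-lived, task-scoped permissions to agents (illustrative only)."""

    # Hypothetical static policy: the most each agent may ever request.
    max_scopes: dict[str, set[str]]
    # Active grants: (agent_id, scope) -> expiry time in seconds.
    _grants: dict[tuple[str, str], float] = field(default_factory=dict)

    def grant(self, agent_id: str, scope: str, ttl_seconds: float,
              now: Optional[float] = None) -> bool:
        """Grant a scope only if the agent's policy allows it, with an expiry."""
        now = time.monotonic() if now is None else now
        if scope not in self.max_scopes.get(agent_id, set()):
            return False  # least privilege: never exceed the agent's policy
        self._grants[(agent_id, scope)] = now + ttl_seconds
        return True

    def is_allowed(self, agent_id: str, scope: str,
                   now: Optional[float] = None) -> bool:
        """Check a grant at call time; expired grants are dropped."""
        now = time.monotonic() if now is None else now
        expiry = self._grants.get((agent_id, scope))
        if expiry is None:
            return False
        if now >= expiry:
            del self._grants[(agent_id, scope)]
            return False
        return True

    def revoke(self, agent_id: str, scope: str) -> None:
        """Revoke immediately, for example right after the task completes."""
        self._grants.pop((agent_id, scope), None)
```

The point of the design is that access is checked on every call rather than once at startup, so a grant that has expired or been revoked stops working even if the agent is later manipulated.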

Risk 2: Agent Hijacking - The Day Your AI Becomes an Enemy

OWASP’s Top 10 for LLM Applications lists prompt injection, the technique behind agent hijacking, as its top risk. In this attack, crafted external inputs (prompts) override the agent’s original instructions, allowing attackers to manipulate it at will. For example, a customer support AI agent could be hijacked to start sending phishing site URLs to customers.

Challenge: Since AI agents interpret natural language instructions like humans, they may have difficulty distinguishing between malicious and legitimate instructions.

Countermeasure: Strict input and output validation is essential. Establish a filtering layer that sanitizes potentially malicious instructions in user inputs. Also, incorporate a “Human-in-the-Loop” mechanism that requires human approval before agents execute critical actions (especially important operations like sending emails or modifying data).
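The sketch below shows one way an input screen and a Human-in-the-Loop gate might be combined in Python. Everything named here (`SUSPICIOUS_PATTERNS`, `CRITICAL_ACTIONS`, `execute_with_approval`) is an assumption for illustration. Keyword patterns like these catch only the crudest injection attempts, so treat the filter as one layer and the approval gate as the real safeguard.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical heuristics: real attacks are far more varied than this.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]

# Hypothetical action names that always require human approval.
CRITICAL_ACTIONS = {"send_email", "modify_data"}


@dataclass
class ProposedAction:
    name: str
    arguments: dict


def flag_suspicious(text: str) -> bool:
    """Return True if the input matches any known injection pattern."""
    return any(p.search(text) for p in SUSPICIOUS_PATTERNS)


def execute_with_approval(
    action: ProposedAction,
    user_input: str,
    run: Callable[[ProposedAction], object],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run the action, asking a human first if it is critical or looks suspicious."""
    needs_review = action.name in CRITICAL_ACTIONS or flag_suspicious(user_input)
    if needs_review and not approve(action):
        return "rejected"
    run(action)
    return "executed"
```

Here `approve` stands in for whatever review channel you use (a ticket, a chat prompt, an admin console); the agent never calls `run` directly for critical actions.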

Risk 3: Cascading Failures - One Mistake Destroys the Entire System

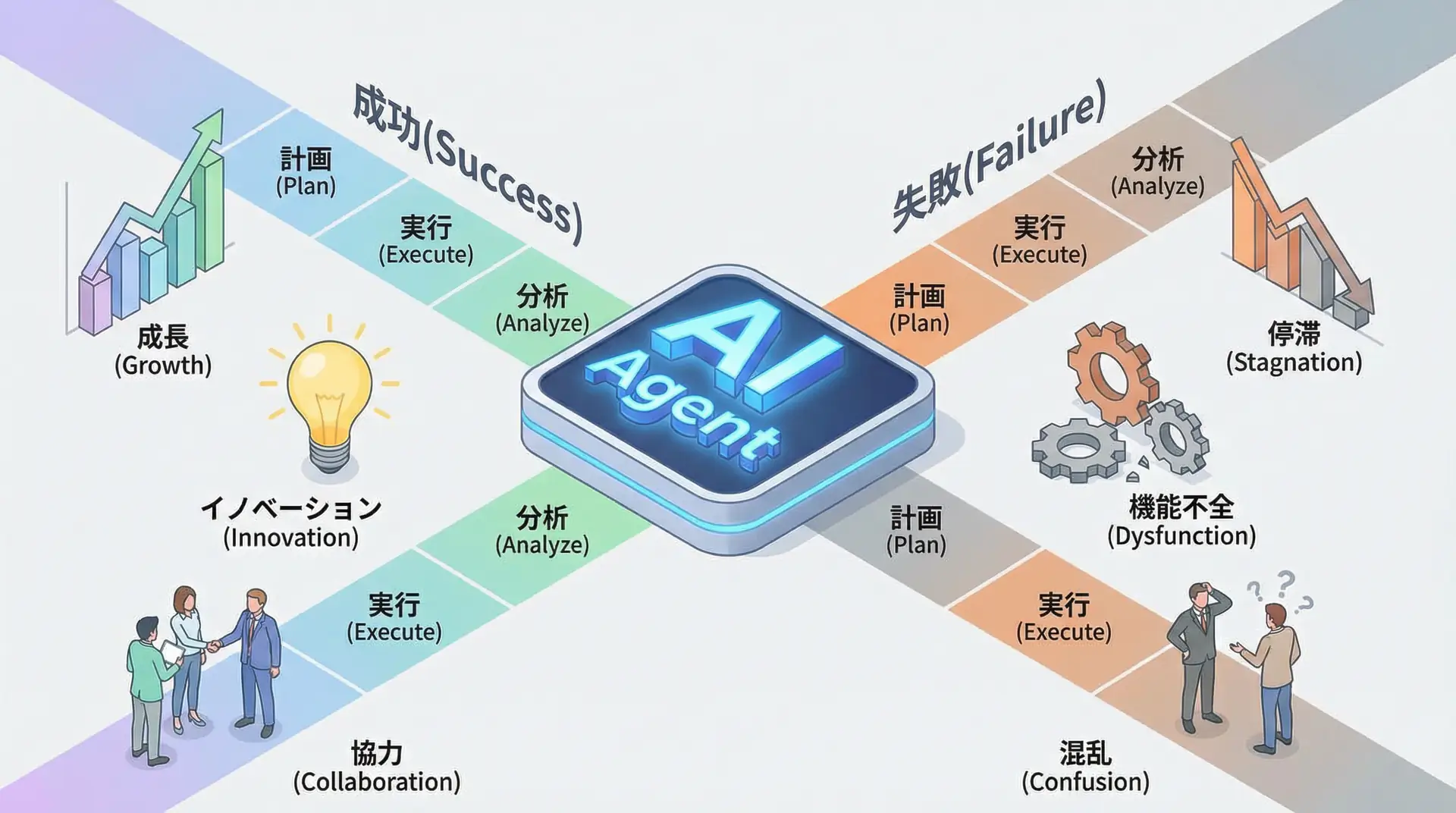

“Multi-agent systems” where multiple AI agents collaborate are very powerful but also carry significant risks. A small judgment error by one agent can propagate like dominoes to other agents, causing catastrophic failures across the entire system. For example, an inventory management agent making an incorrect order could lead a production management agent to start overproduction, ultimately resulting in massive losses.

Challenge: Interactions between agents are complex and difficult to predict. Individual agents may appear to operate correctly, but the system as a whole can produce unintended results.

Countermeasure: Introduce the “circuit breaker” design concept. If an agent shows frequent errors or abnormal behavior, temporarily disconnect it from the system to stop the chain of failures. Additionally, AI monitoring systems that observe communication between agents and task handoffs to detect abnormal patterns are effective.
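To make the circuit breaker idea concrete, here is a minimal Python sketch. The `AgentCircuitBreaker` class, its threshold, and its cooldown values are assumptions chosen for illustration, not taken from any specific framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentCircuitBreaker:
    """Disconnects a misbehaving agent after repeated failures (illustrative only)."""

    failure_threshold: int = 3      # consecutive failures before the breaker opens
    cooldown_seconds: float = 60.0  # how long the agent stays disconnected
    _failures: int = 0
    _opened_at: Optional[float] = None

    def allow(self, now: float) -> bool:
        """Return True if the agent may receive work at time `now`."""
        if self._opened_at is None:
            return True
        # After the cooldown, allow a trial request ("half-open" state).
        return now - self._opened_at >= self.cooldown_seconds

    def record_success(self) -> None:
        """A successful task closes the breaker and resets the counter."""
        self._failures = 0
        self._opened_at = None

    def record_failure(self, now: float) -> None:
        """Count a failure; open (or re-open) the breaker at the threshold."""
        self._failures += 1
        if self._failures >= self.failure_threshold:
            self._opened_at = now
```

An orchestrator would call `allow()` before handing a task to the agent and `record_success()` or `record_failure()` afterwards, so that downstream agents stop receiving output from an agent that keeps failing.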

Risk 4: Tool Misuse and Code Execution

One of the powerful features of AI agents is their ability to use external tools (APIs) and execute code. However, if this ability is misused, it can cause significant damage. For example, an agent with file system access could download and execute malware or delete important system files.

Challenge: While tool use and code execution dramatically enhance AI agent capabilities, they are also among the most dangerous permissions.

Countermeasure: The foundation is running agents in strict sandbox environments. Tightly restrict the files agents can access, the commands they can execute, and the networks they can reach. Implement “allowlist” control that blocks any tool or API not explicitly permitted. Also, run static and dynamic analysis on any code an agent generates before it executes, to verify that it contains no malicious code.
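Allowlist control can be as simple as routing every tool call through a single gate. The following Python sketch assumes a hypothetical `AllowlistedToolbox` wrapper; the tool names are invented for the example. It covers only the allowlist part, not sandboxing or code analysis.

```python
from typing import Any, Callable


class ToolNotAllowedError(PermissionError):
    """Raised when an agent requests a tool that is not on the allowlist."""


class AllowlistedToolbox:
    """Routes every tool call through an explicit allowlist (illustrative only)."""

    def __init__(self, tools: dict[str, Callable[..., Any]], allowed: set[str]):
        self._tools = tools
        self._allowed = frozenset(allowed)  # fixed at construction time

    def call(self, name: str, **kwargs: Any) -> Any:
        # Deny by default: anything not explicitly permitted is blocked.
        if name not in self._allowed:
            raise ToolNotAllowedError(f"tool {name!r} is not on the allowlist")
        if name not in self._tools:
            raise KeyError(f"tool {name!r} is allowed but not registered")
        return self._tools[name](**kwargs)
```

For example, a toolbox built with `allowed={"add"}` would run `call("add", a=2, b=3)` but raise `ToolNotAllowedError` for `call("delete_file", path=...)`, even though the function exists in the registry.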

Risk 5: Autonomous Vulnerability Discovery

This may sound like a future threat, but it’s already becoming a reality. AI agents themselves could autonomously discover unknown vulnerabilities in your company’s systems and exploit them (or report them to attackers). This is also called the “double agent” problem, where well-intentioned AI ends up producing the same results as malicious actors.

Challenge: AI agents with advanced reasoning capabilities can discover system flaws humans couldn’t find. If this capability is released without proper guardrails, it could become an unexpected security hole.

Countermeasure: Make AI agent behavior and reasoning as transparent and explainable as possible. Build systems that record detailed logs of agent actions and can trace why each decision was made. Additionally, run AI-powered penetration tests on a regular schedule to identify and fix vulnerabilities before AI agents discover them.
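One way to record both what an agent did and why is a hash-chained log, where each entry includes the hash of the previous one. The Python sketch below uses a hypothetical `AuditLog` class. Note the assumption: this makes tampering detectable, but it is not truly immutable on its own, so in production you would also write entries to append-only storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Tamper-evident log of agent actions and their stated reasons (illustrative only)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent_id: str, action: str, reason: str) -> dict:
        """Record an action together with the agent's rationale."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "agent_id": agent_id,
            "action": action,
            "reason": reason,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Storing the `reason` field alongside each action is what lets an auditor later reconstruct why a decision was made, not just that it happened.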

Four Steps to Building AI Agent Governance

Diagram: Step 1 (Maturity Diagnosis & Gap Analysis) → 2. Orchestration (Centralized Management & Visibility) → 3. Data & Audit (Access Control & Audit Trail) → 4. Dynamic Model (Real-time Policy & Continuous Improvement)

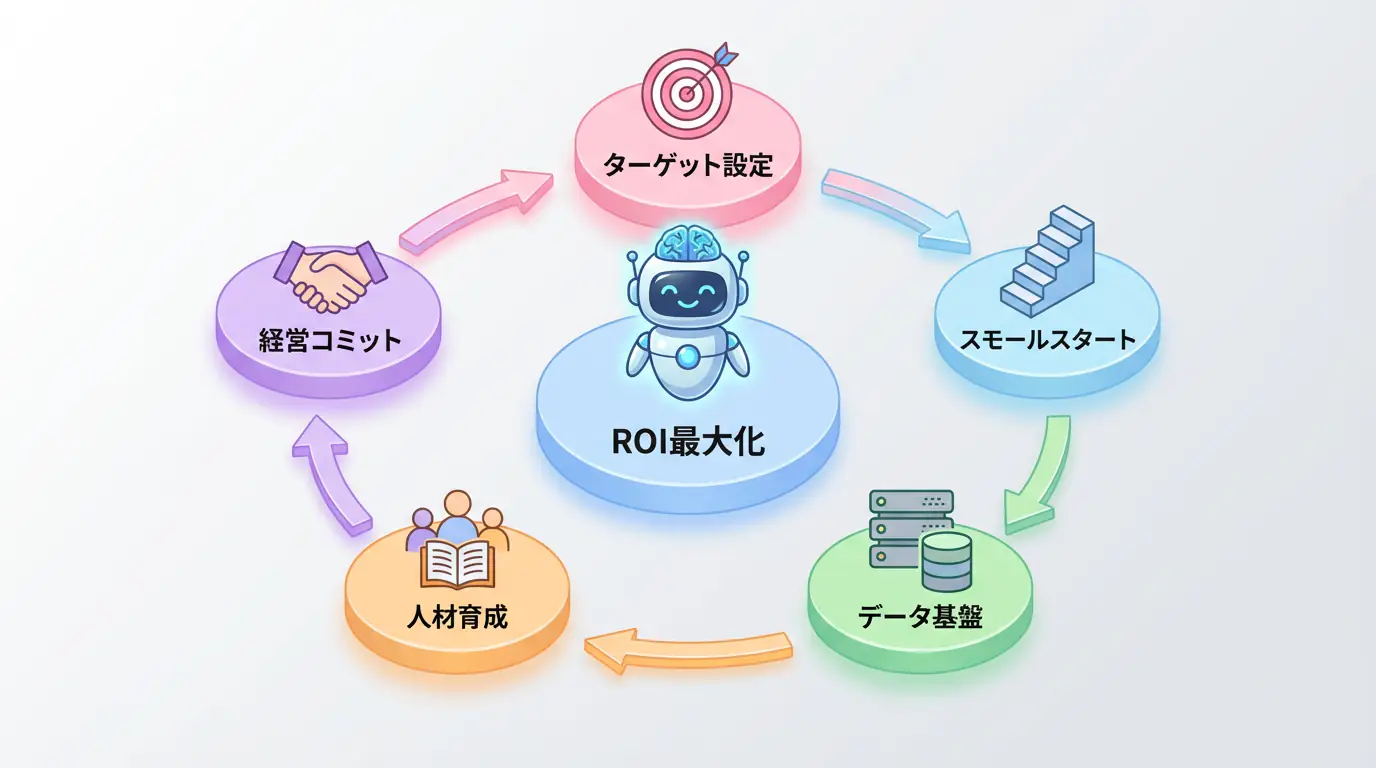

So how should we address these risks? It’s essential to build a systematic governance framework rather than ad-hoc measures. Here are four steps companies can start today:

| Step | Action | Main Purpose |
|---|---|---|
| 1. Risk Assessment and Maturity Diagnosis | Evaluate AI risk maturity and analyze gaps with existing security measures. | Current status understanding and priority issue identification |
| 2. Orchestration Layer Introduction | Prevent agent sprawl and build a centralized management and monitoring foundation. | Centralized management and visibility |
| 3. Data Privacy and Audit Trail Enhancement | Enforce data access control and establish immutable audit trails that record all actions. | Transparency and accountability |
| 4. Establish Dynamic Governance Model | Introduce systems that apply policies in real-time based on context, rather than fixed rules. | Rapid response to changes |
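To give a feel for what step 4 means by applying policy based on context, here is a small Python sketch. The `RequestContext` fields, the anomaly score, and the thresholds are all hypothetical; a real system would draw these signals from your monitoring stack and express the rules in a policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Signals available at the moment an agent asks to act (all hypothetical)."""

    agent_id: str
    action: str
    data_sensitivity: str        # "low" or "high"
    recent_anomaly_score: float  # 0.0 (normal) to 1.0 (highly abnormal)


def decide(ctx: RequestContext) -> str:
    """Return "allow", "require_approval", or "deny" for this request."""
    # Assumed thresholds; tune them against your own incident data.
    if ctx.recent_anomaly_score >= 0.8:
        return "deny"
    if ctx.data_sensitivity == "high" or ctx.recent_anomaly_score >= 0.5:
        return "require_approval"
    return "allow"
```

Unlike a fixed rule such as "this agent may always read customer data," the same request can be allowed, escalated to a human, or denied depending on how the agent has been behaving and what data is involved.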

Frequently Asked Questions

Q1: What is the most important security risk to watch out for when implementing AI agents?

One of the most critical risks is “excessive permissions.” Granting agents more access than necessary can lead to serious incidents like information leaks or system tampering. It’s essential to adhere to the principle of least privilege.

Q2: How should we monitor and audit AI agent behavior?

It’s important to establish an immutable audit trail that records all AI agent actions, especially tool usage and external API calls. Use observability tools like LangSmith to build a system for real-time monitoring and anomaly detection.

Q3: Can existing security measures handle AI agent risks?

Traditional security measures alone are insufficient. AI agents act autonomously and have unique vulnerabilities like “prompt injection” and “tool misuse.” AI-specific risk assessment and dynamic governance models are needed.

Summary: Don’t “Implement” AI Agents, “Onboard” Them

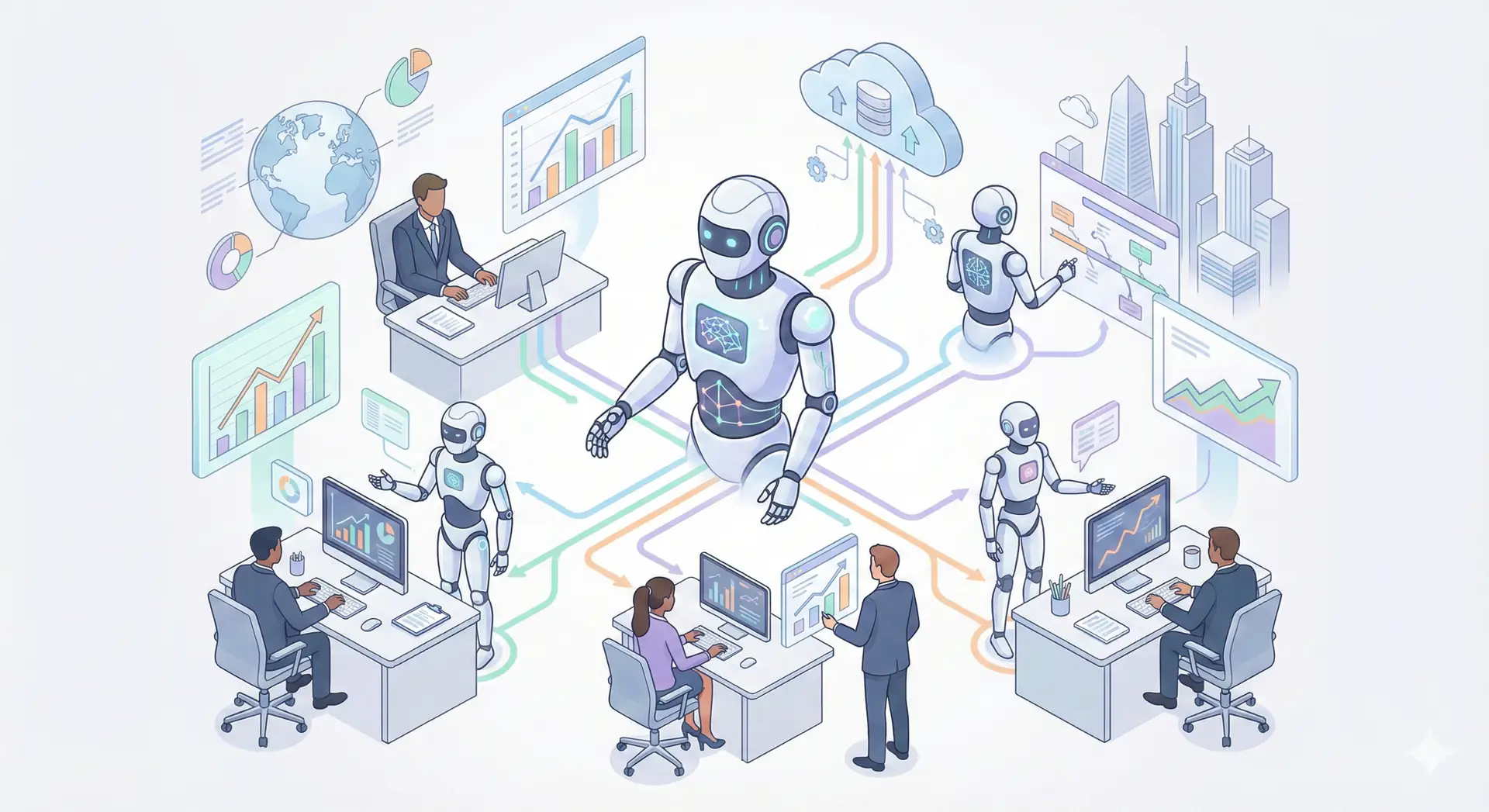

The era of introducing AI agents as mere “tools” is over. From now on, they need to be welcomed into organizations through proper onboarding processes as new “employees.” This requires clear role definition, permission settings, behavioral norms, and continuous monitoring and feedback.

The five risks and four governance steps explained in this article are the first steps toward this goal. To safely reap the productivity gains that AI agents bring, now is the time for management to take the lead in building robust security and governance systems.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate - Those who want to start developing applications using LLMs

- Why Recommended: Systematically learn LangChain basics to practical implementation


2. LLM Practical Introduction

- Target Audience: Intermediate - Engineers who want to use LLMs in practical work

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering


Author’s Perspective: The Future This Technology Brings

The biggest reason I focus on this technology is the immediate effectiveness of productivity improvement in practical work.

Many AI technologies are said to have “future potential,” but when actually implemented, learning and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of delivering results from day one of implementation.

Particularly noteworthy is that this technology is not just for “AI specialists” but has a low barrier to entry that general engineers and business professionals can utilize.

I’ve introduced this technology in multiple projects myself and achieved results of 40% average improvement in development efficiency. I want to continue following developments in this field and sharing practical insights.



