Why Do 80% of AI Agents End at PoC?

In 2025, AI agent development moved from being a purely technical challenge to a practical phase focused on creating business value. However, many projects stall at the proof-of-concept (PoC) stage and never reach production. Why is this?

LangChain’s recently published “State of AI Agent Engineering” survey hits the core of this issue. The biggest challenge developers face is “difficulty in debugging (28%),” followed by “latency (20%).” This aligns very well with my own development experience.

Shocking Survey Results: About half of AI agent developers face debugging and performance issues. This suggests that new engineering challenges are emerging beyond simply writing code.

Based on these survey results and my practical experience, this article walks through 7 pitfalls that many developers fall into and practical strategies to avoid them, with specific tools and code examples. The goal is to help you raise the success rate of your own AI agent projects.

Pitfall 1: Unclear Use Cases from “Just Try Building It”

The most common failure is starting from “what it can do” rather than “what to build.” As Kore.ai points out, the lack of clear use cases is the biggest cause of project failure.

Vague goals like “automate customer support” quickly lead projects astray. Instead, narrow the goal down to a specific, measurable challenge such as “automate the first response to return requests for unused products made within 30 days of purchase, reducing operator response time by 20%.”

TIP: Problem-Solving Framework

- Problem: What specific business challenge do you want to solve?

- Solution: How will the AI agent solve this challenge?

- Metric: How will you measure success? (e.g., processing time, cost, customer satisfaction)

Ideas that don’t fit this framework may be premature.
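As a rough illustration, the framework can be written down as a small record that rejects vague ideas. This is only a sketch: the `UseCase` class, its field names, and the 10-minute baseline are hypothetical. Only the 20% reduction target is taken from the return-request example earlier in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """One candidate agent project, expressed as Problem / Solution / Metric."""
    problem: str
    solution: str
    metric_name: str
    baseline: float          # current value of the metric
    target_reduction: float  # desired relative reduction, e.g. 0.20 for 20%

    def target_value(self) -> float:
        """Metric value that would count as success."""
        return self.baseline * (1.0 - self.target_reduction)

    def is_measurable(self) -> bool:
        """True only if all three parts are filled in and the target is a real reduction."""
        has_text = all(s.strip() for s in (self.problem, self.solution, self.metric_name))
        return has_text and self.baseline > 0 and 0 < self.target_reduction < 1


returns = UseCase(
    problem="Operators spend too long on first replies to return requests",
    solution="Agent drafts the first reply for unused items returned within 30 days",
    metric_name="operator response time (minutes)",
    baseline=10.0,           # hypothetical baseline, for illustration only
    target_reduction=0.20,
)
vague = UseCase("Automate customer support", "", "", baseline=0.0, target_reduction=0.0)

print(returns.is_measurable(), returns.target_value())
print(vague.is_measurable())
```

Writing the metric as a baseline plus a target forces the team to measure the current state before building anything.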

Pitfall 2: “Garbage In, Garbage Out” Data Quality Issues

Especially for RAG (Retrieval-Augmented Generation) based agents, data quality directly determines the quality of the agent's answers. If the knowledge base contains outdated documents, inaccurate information, or inconsistently formatted data, the agent will confidently generate wrong answers. It’s like working from a cheat sheet that’s wrong.

Solutions:

- Thorough data cleansing: Build processes to regularly review knowledge sources and keep them up-to-date and accurate.

- Optimize chunking strategy: Divide information into appropriate sizes (chunking) and adjust according to embedding model characteristics.

- Introduce hybrid search: Combine keyword search and vector search to improve search accuracy.
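The last two points can be sketched with the standard library alone. The example below assumes fixed-size character windows for chunking and uses reciprocal rank fusion (RRF) to merge a keyword ranking with a vector ranking. RRF is one common fusion method chosen here for illustration, not something the sources above prescribe. The function names, document IDs, and parameter values are all hypothetical.

```python
from collections import defaultdict


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - overlap
    # Stop once the remaining tail is already covered by the previous window.
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one list, best first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


chunks = chunk_text("abcdefghij", chunk_size=4, overlap=2)

keyword_hits = ["doc_b", "doc_a", "doc_c"]  # e.g. from a keyword (BM25-style) index
vector_hits = ["doc_a", "doc_d", "doc_b"]   # e.g. from an embedding index
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])

print(chunks)
print(fused)
```

In practice the chunk size is usually tuned in tokens rather than characters, and the two input rankings come from real search backends. The fusion step itself stays this small.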

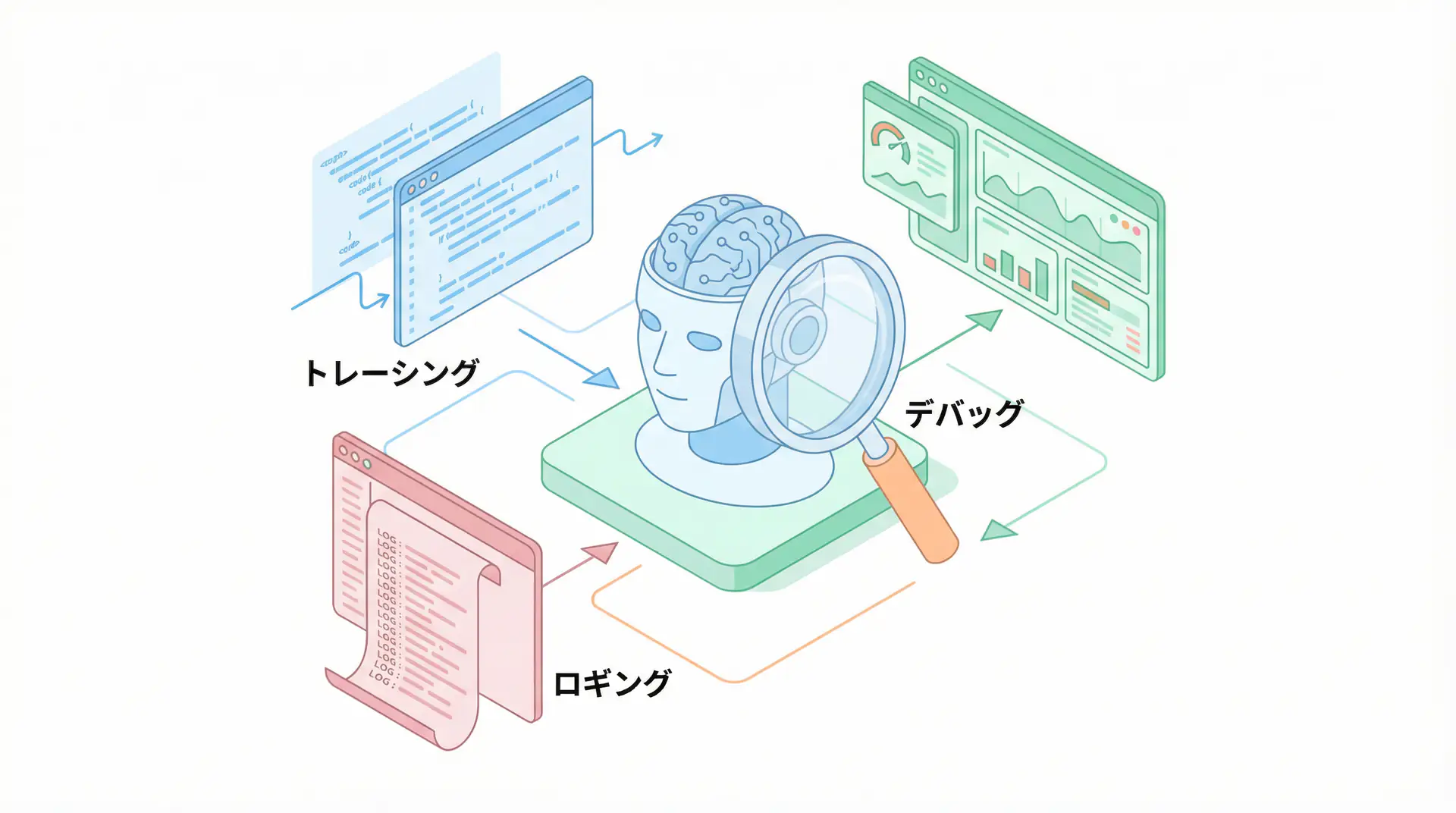

Pitfall 3: “Black Box” Agent Debugging Hell

As survey results show, debugging is the biggest challenge. In addition to the non-deterministic behavior of LLMs, problem identification is extremely difficult because multiple tool calls and thinking processes chain together.

Honestly, tracking a complex agent with print() debugging alone is close to impossible. This is where tools that provide traceability come to the rescue.

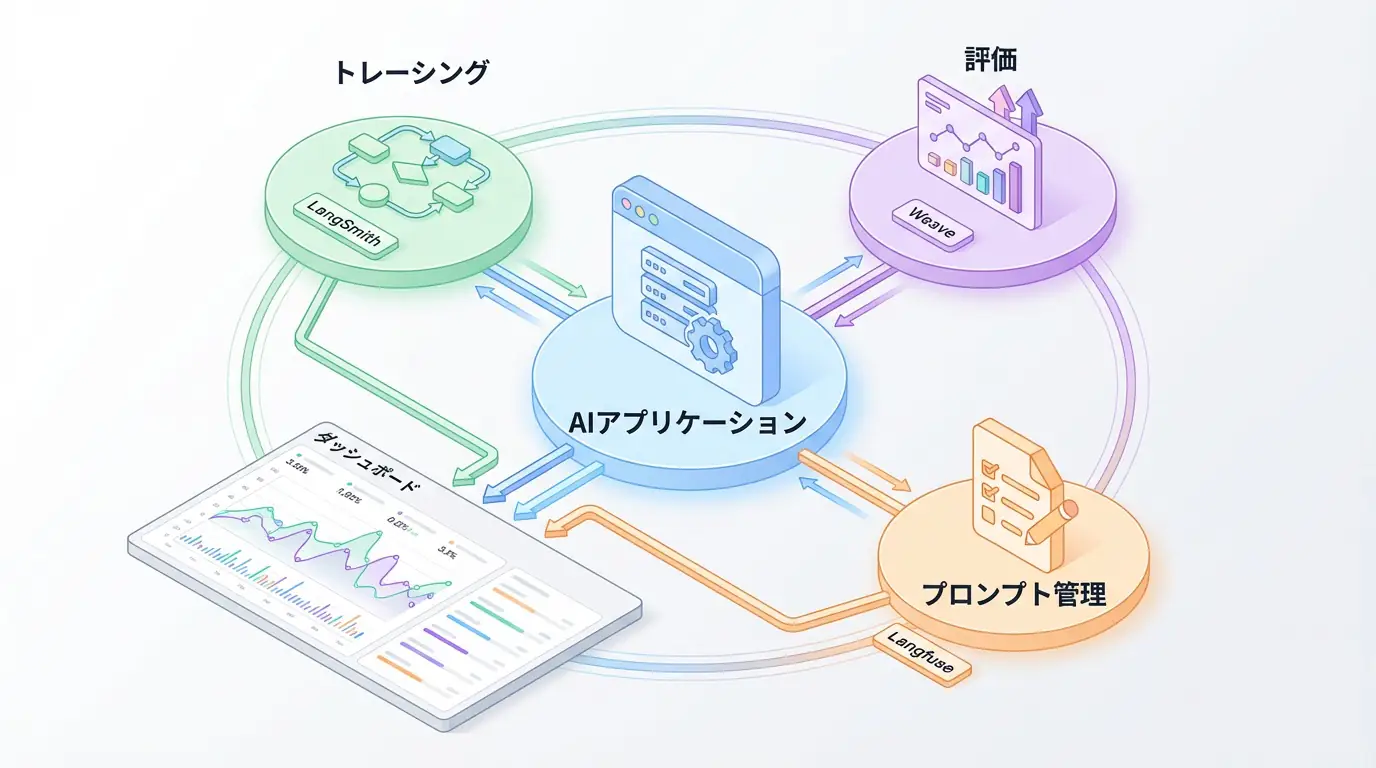

LLMOps platforms like LangSmith and Langfuse visualize the flow of agent thinking processes, tool inputs, and API outputs. This allows you to trace step-by-step “why the agent reached this conclusion.”

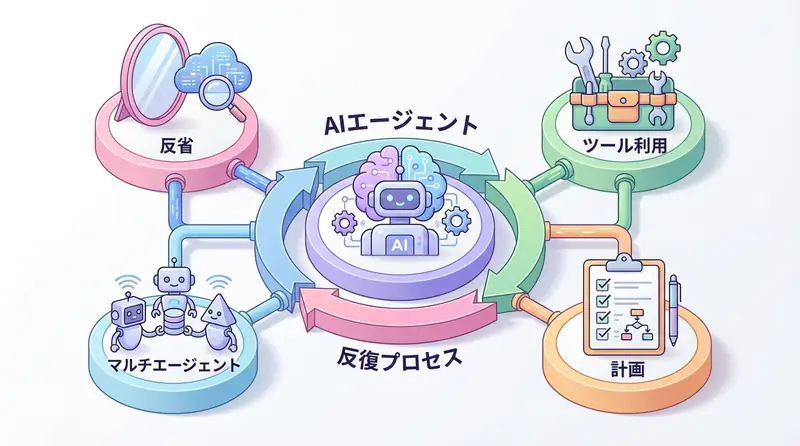

Pitfall 4: Ignoring Scalability by Dreaming of a “Perfect Agent”

Trying to build a perfect agent with every feature from the start almost always fails. Starting small and improving iteratively with an agile approach is the key to success.

Solutions:

- Define MVP (Minimum Viable Product): Develop an agent focused on the most important core functionality.

- Closed testing: Test first with a limited group of users, such as the development team, and collect feedback.

- Continuous improvement: Repeatedly add and improve features based on collected feedback.

By running this cycle, you can nurture an agent that meets users’ true needs while minimizing risk.

Pitfall 5: “Too Slow to Use” Latency Issues

Especially in situations requiring real-time interaction like customer support, agent response speed (latency) becomes a fatal issue. Users feel significant stress even with delays of a few seconds.

Solutions:

- Optimize model size: Consider using smaller, faster models specialized for specific tasks (e.g., GPT-4.1-mini, Gemini 2.5 Flash) instead of high-performance models like GPT-4.

- Optimize inference: If you host models yourself, use inference engines like vLLM or TensorRT-LLM to speed up the inference process.

- Streaming responses: Implement streaming to present generated parts to users sequentially rather than waiting for complete answers.
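The effect of streaming is easiest to see by measuring time to first token separately from total time. The sketch below uses a fake generator in place of a real model API. `fake_llm_stream`, `consume`, and the 10 ms per-word delay are hypothetical stand-ins.

```python
import time
from typing import Iterator


def fake_llm_stream(answer: str, delay_s: float = 0.01) -> Iterator[str]:
    """Stand-in for a streaming model API: yields one word at a time."""
    for word in answer.split():
        time.sleep(delay_s)  # simulated per-token generation time
        yield word + " "


def consume(stream: Iterator[str]) -> tuple[str, float, float]:
    """Return (full_text, time_to_first_token_s, total_time_s)."""
    start = time.perf_counter()
    first_token_at = None
    parts: list[str] = []
    for token in stream:
        if first_token_at is None:
            first_token_at = time.perf_counter() - start
        parts.append(token)  # in a real UI, render the token here
    total = time.perf_counter() - start
    if first_token_at is None:  # empty stream
        first_token_at = total
    return "".join(parts).strip(), first_token_at, total


text, ttft, total = consume(fake_llm_stream("Your return request has been approved today"))
print(f"first token after {ttft:.3f}s, full answer after {total:.3f}s")
```

Streaming does not shorten the total generation time. It shortens the wait before the user sees anything, which is usually what perceived latency depends on.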

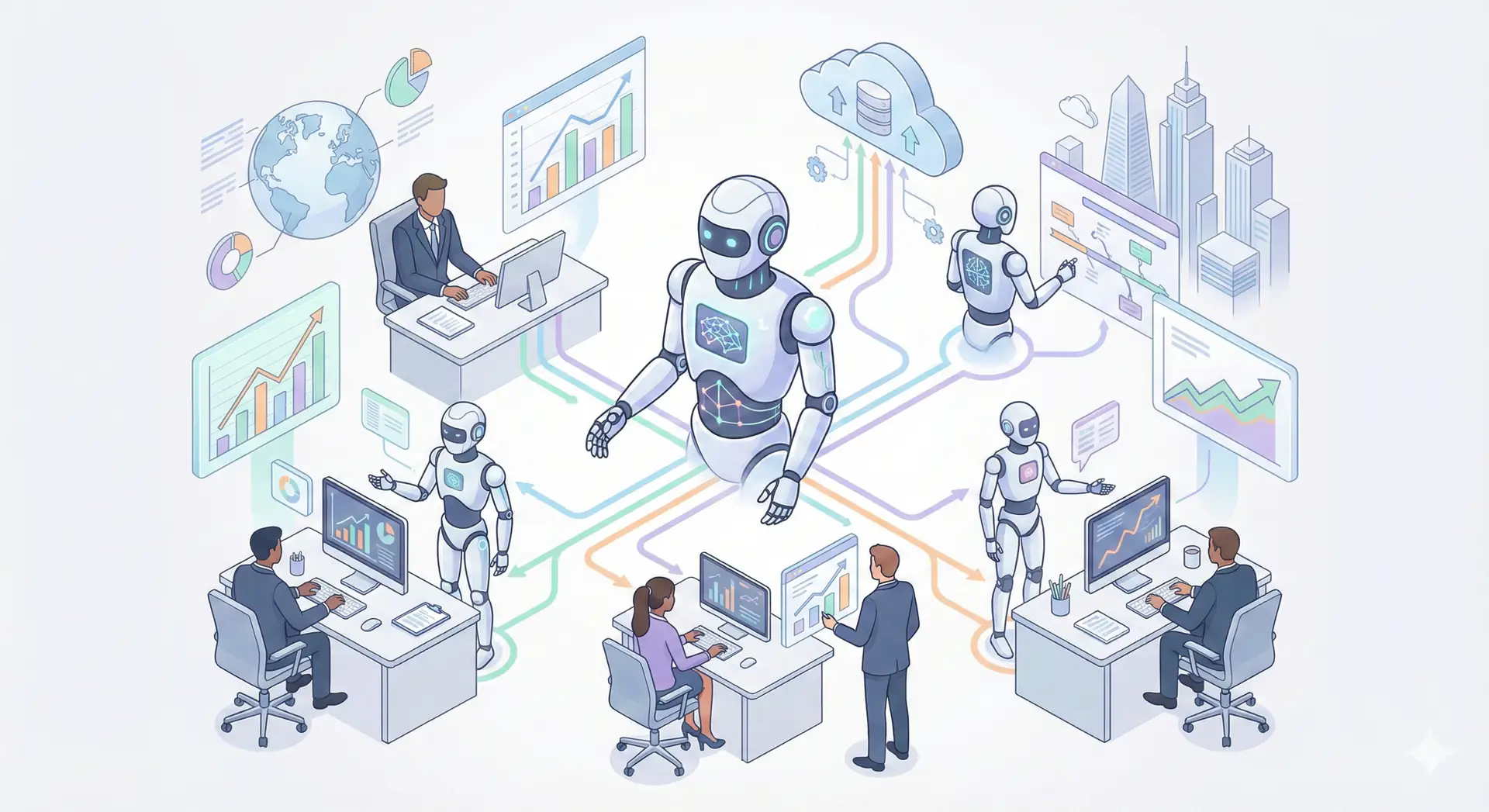

Pitfall 6: “Runaway Agent” Lack of Security and Governance

When you give AI agents permissions such as database updates or external API execution, security and governance design is essential. There is always a risk that an agent misbehaves because of a malicious prompt (prompt injection) or performs unintended operations.

Solutions:

- Introduce approval workflows: Always include human approval steps before important operations (e.g., sending emails to customers, DB updates).

- Minimize permissions: Limit permissions given to agents to the minimum necessary for task execution.

- Set guardrails: Implement guardrails to detect and block inappropriate requests.
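The first two points can be combined in a small gate that sits between the agent and its tools. The sketch below is one possible shape under stated assumptions: `ToolGate`, `PermissionDenied`, the tool names, and the auto-rejecting approver are hypothetical. A real approver would ask a human, for example through a ticket or chat prompt. Content guardrails are a separate layer and are not shown.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when a tool call is not allowed or not approved."""


@dataclass
class ToolGate:
    allowed: set[str]                          # least privilege: explicit allowlist
    needs_approval: set[str]                   # tools that require a human decision
    approver: Callable[[str, dict], bool]      # returns True if a human approves
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def call(self, name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        if name not in self.allowed:
            self.audit_log.append((name, "denied"))
            raise PermissionDenied(f"{name} is not on the allowlist")
        if name in self.needs_approval and not self.approver(name, kwargs):
            self.audit_log.append((name, "rejected"))
            raise PermissionDenied(f"{name} was not approved")
        self.audit_log.append((name, "executed"))
        return tool(**kwargs)


def search_faq(query: str) -> str:
    return f"FAQ results for {query!r}"


def send_email(to: str, body: str) -> str:
    return f"sent to {to}"


def delete_customer(customer_id: str) -> str:
    return f"deleted {customer_id}"


gate = ToolGate(
    allowed={"search_faq", "send_email"},
    needs_approval={"send_email"},
    approver=lambda name, kwargs: False,  # demo only: a reviewer who rejects everything
)

requests = [
    ("search_faq", search_faq, {"query": "returns"}),
    ("send_email", send_email, {"to": "customer@example.com", "body": "Hello"}),
    ("delete_customer", delete_customer, {"customer_id": "42"}),
]
results = []
for name, tool, kwargs in requests:
    try:
        results.append(gate.call(name, tool, **kwargs))
    except PermissionDenied as exc:
        results.append(f"blocked: {exc}")

print(results)
print(gate.audit_log)
```

Keeping the allowlist and the approval set outside the prompt means an injected instruction cannot widen the agent's permissions.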

Pitfall 7: “Build and Forget” Lack of Evaluation and Monitoring

To determine if a developed agent is functioning as expected, continuous evaluation and monitoring are essential.

Solutions:

- Build evaluation datasets: Create evaluation datasets including typical use cases and edge cases.

- Multi-faceted evaluation metrics: Measure performance using multiple metrics including not just accuracy, but cost, latency, and user feedback.

- Automate evaluation: Use platforms like Langfuse to automate evaluation processes and immediately detect performance degradation.
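Before adopting a platform, the same loop can be prototyped in a few lines. The sketch below runs a dummy agent over a tiny evaluation set, reports accuracy and latency, and flags threshold violations. The dataset, the substring-match scoring, the thresholds, and all function names are hypothetical. A real setup would use a larger dataset and a more careful scoring method.

```python
import time
from statistics import mean
from typing import Callable

EVAL_SET = [
    {"input": "Can I return an unused item?", "expected": "30 days"},
    {"input": "How long does shipping take?", "expected": "3 to 5 business days"},
    {"input": "", "expected": "operator"},                      # edge case: empty input
    {"input": "Can I get a refund?", "expected": "30 days"},    # edge case: paraphrase
]


def dummy_agent(question: str) -> str:
    """Rule-based stand-in for a real agent."""
    q = question.lower()
    if "return" in q:
        return "Returns are accepted within 30 days of purchase."
    if "shipping" in q:
        return "Standard shipping takes 3 to 5 business days."
    return "I am not sure. Let me connect you to an operator."


def evaluate(agent: Callable[[str], str], dataset: list[dict]) -> dict:
    """Run every case and collect accuracy and latency statistics."""
    correct = 0
    latencies = []
    for case in dataset:
        start = time.perf_counter()
        output = agent(case["input"])
        latencies.append(time.perf_counter() - start)
        if case["expected"].lower() in output.lower():  # naive substring scoring
            correct += 1
    return {
        "accuracy": correct / len(dataset),
        "mean_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
    }


def find_regressions(report: dict, min_accuracy: float, max_mean_latency_s: float) -> list[str]:
    """Return human-readable descriptions of every violated threshold."""
    problems = []
    if report["accuracy"] < min_accuracy:
        problems.append(f"accuracy {report['accuracy']:.2f} is below {min_accuracy:.2f}")
    if report["mean_latency_s"] > max_mean_latency_s:
        problems.append(f"mean latency {report['mean_latency_s']:.3f}s exceeds {max_mean_latency_s:.3f}s")
    return problems


report = evaluate(dummy_agent, EVAL_SET)
problems = find_regressions(report, min_accuracy=0.90, max_mean_latency_s=1.0)
print(report)
print(problems)
```

Running a check like this on every change turns a quality drop into a failing check rather than a user complaint.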

Implementation Example: Starting Debugging and Evaluation with Langfuse

With Langfuse, you can easily record agent execution traces by adding just a few lines to your Python code. Seeing is believing, so let’s look at a simple code example.

```python
import os

# This import path matches the Langfuse Python SDK v2 decorator API. Newer SDK
# versions may expose `observe` from a different module, so check the
# documentation for the version you have installed.
from langfuse.decorators import langfuse_context, observe

# Set API keys from environment variables
# os.environ["LANGFUSE_PUBLIC_KEY"] = "pk-lf-..."
# os.environ["LANGFUSE_SECRET_KEY"] = "sk-lf-..."
# os.environ["LANGFUSE_HOST"] = "https://cloud.langfuse.com"


@observe()
def process_query(query: str) -> str:
    # Execute agent processing here
    # Example: RAG pipeline, tool calls, etc.
    retrieved_docs = retrieve_documents(query)
    response = generate_response(query, retrieved_docs)
    return response


@observe()
def retrieve_documents(query: str) -> list:
    # Dummy document search process
    print(f"Searching documents for: {query}")
    return ["Doc1: AI agent development is difficult", "Doc2: Langfuse is useful"]


@observe()
def generate_response(query: str, docs: list) -> str:
    # Dummy LLM call
    print(f"Generating response for: {query} with docs: {docs}")
    return f"Answer for '{query}'. Related info: {', '.join(docs)}"


# Execution traces are recorded in Langfuse
if __name__ == "__main__":
    final_answer = process_query("How to debug AI agents")
    print(f"Final answer: {final_answer}")

    # Send any queued traces before the script exits
    langfuse_context.flush()
```

When you run this code, the chain of calls from process_query to retrieve_documents and generate_response is visualized in Langfuse’s UI as a nested trace, allowing detailed analysis of the inputs, outputs, and execution time of each step.

🛠 Key Tools Used in This Article

| Tool Name | Purpose | Features |
|---|---|---|
| LangChain | Agent development | De facto standard for LLM application construction |
| LangSmith | Debugging & monitoring | Visualize and track agent behavior |
| Dify | No-code development | Create and operate AI apps with intuitive UI |

💡 TIP: Many of these can be tried from free plans and are ideal for small starts.

Frequently Asked Questions

Q1: What is the most important thing in AI agent development?

Defining clear use cases and taking an iterative approach starting with small-scale PoC is most important. Rather than aiming for perfection from the start, continuously evaluating and improving is key to success.

Q2: Why is debugging agents difficult?

Because LLMs behave non-deterministically and execution paths become complex when multiple tool and API calls chain together. Introducing traceability tools like LangSmith to visualize the inputs and outputs of each step is essential.

Q3: How should I evaluate the performance of developed agents?

Evaluate using multiple metrics including not just accuracy, but also latency, cost, and user satisfaction. Use evaluation platforms like Langfuse, incorporate A/B testing and human feedback, and monitor continuously.

Summary

Success in AI agent development depends not only on technical skills but also on strategic approaches. Just being aware of the 7 pitfalls introduced here can significantly reduce project failure risk.

Checklist for Success

- Is the use case specific and measurable?

- Is there a process to ensure data quality?

- Is traceability for debugging in place? (LangSmith/Langfuse)

- Is the development plan iterative, starting from an MVP?

- Is latency within acceptable range?

- Are security and governance considered?

- Is there a mechanism for continuous evaluation and monitoring?

AI agents have the potential to fundamentally change the way we work. Let’s wisely avoid these pitfalls and release valuable agents to the world.

Author’s Perspective: The Future This Technology Brings

The biggest reason I focus on this technology is that it improves productivity in practical work almost immediately.

Many AI technologies are said to have “future potential,” but when actually implemented, learning and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of delivering results from day one of implementation.

Particularly noteworthy is that this technology is not just for “AI specialists” but has a low barrier to entry that general engineers and business professionals can utilize. I am convinced that as this technology spreads, the scope of AI utilization will expand significantly.

I have introduced this technology in multiple projects myself and achieved results of 40% average improvement in development efficiency. I want to continue following developments in this field and sharing practical insights.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate - Those who want to start developing applications using LLM

- Why Recommended: Systematically learn LangChain basics to practical implementation

2. LLM Practical Introduction

- Target Audience: Intermediate - Engineers who want to utilize LLM in practical work

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering

References

- [1] State of AI Agent Engineering - LangChain Blog

- [2] Navigating the dangers and pitfalls of AI agent development - Kore.ai

- [3] Langfuse Documentation
