In October 2024, Anthropic announced "Computer Use" as a new feature of Claude 3.5 Sonnet. With this feature, the AI model can operate applications by looking at the computer screen (via screenshots), moving the mouse, and typing on the keyboard, much as a human would.

Until now, AI automation has mainly relied on API integration (Model Context Protocol, etc.). With the emergence of Computer Use, legacy systems without APIs and websites that can only be operated through a GUI also become targets for automation.

In this article, we explain in depth, for engineers, the technical mechanism, the implementation methods, and how Computer Use differs from existing automation methods.

1. What is Computer Use?

Computer Use gives LLMs “tools (Actions) for operating computers.” Specifically, it consists of the following three elements.

- Vision Capability: The AI receives screenshots and recognizes the position and state of UI elements (buttons, input forms, menus).

- Action Capability: Based on recognized information, AI issues low-level operation commands such as “mouse movement,” “click,” “key input,” and “scroll.”

- Reasoning & Planning: Decomposes high-level instructions such as “Search for products on Amazon and compare prices” into specific operation procedures, and performs self-correction (Retry) when errors occur.

Differences from Traditional Automation

| Feature | API Integration (MCP, etc.) | Computer Use (GUI Operation) |
|---|---|---|
| Operation Target | Backend, DB, API | Frontend, UI |
| Reliability | High (structured data) | Variable (vulnerable to UI changes) |
| Applicable Range | Limited to systems that expose APIs | All GUI apps and websites |
| Speed | Fast | Slow (comparable to human operation speed) |

Computer Use is not a replacement for APIs. It is best positioned as a complementary technology that covers the “last mile” operations that APIs cannot reach.

2. Architecture and Operation Flow

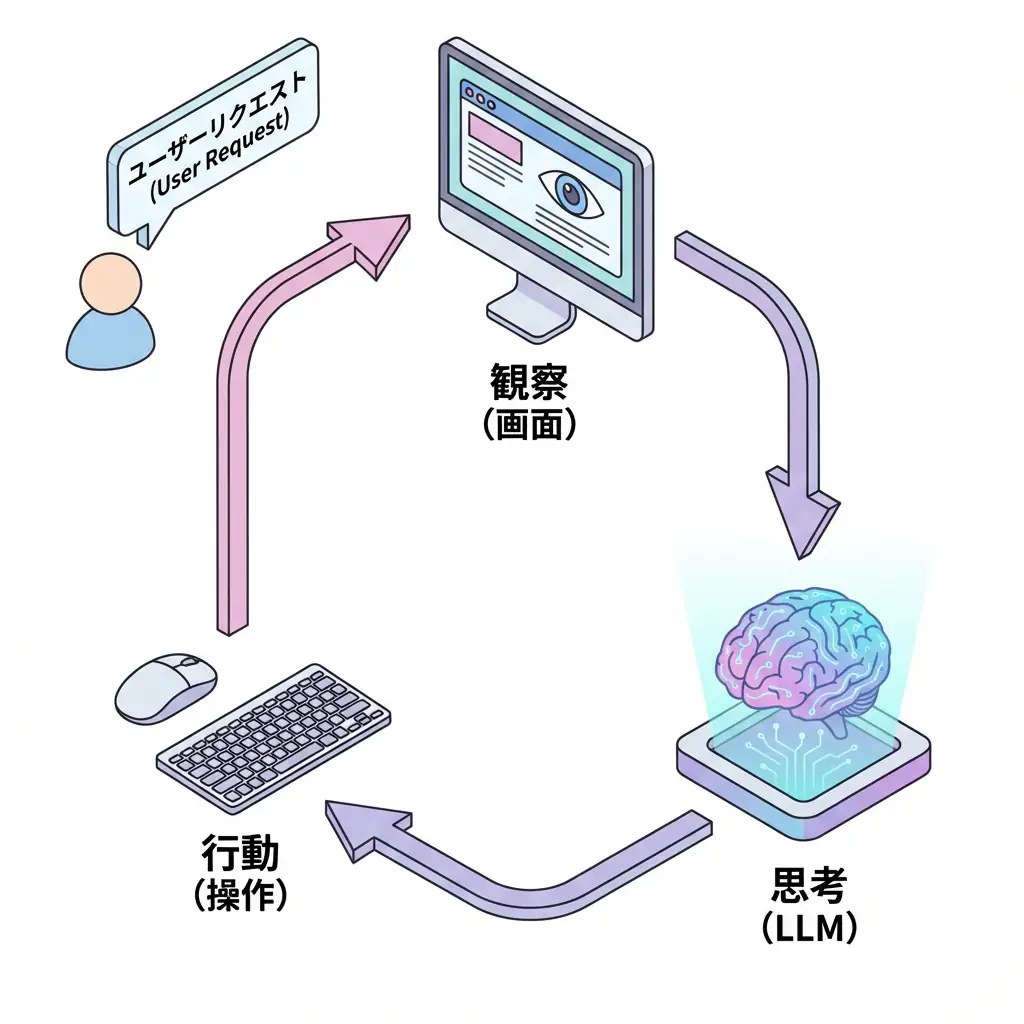

A Computer Use implementation runs the following “Observe → Reason → Act” loop (the ReAct pattern).

- User Request: User instructs a task (example: “Search for flight information”).

- Environment State: Gets current screen (screenshot) and cursor position.

- LLM Reasoning: Claude analyzes the screen and decides the next operation to perform (example: “Click search box”).

- Tool Execution: Executes the decided operation via OS or browser control library (Puppeteer/Playwright).

- Feedback: Feeds back the operation result (screen change) to LLM again.

This loop is repeated until task completion.
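The loop above can be sketched in a few lines of Python. This is a minimal illustration, not Anthropic's implementation: `run_agent_loop` and the three injected callables (`observe`, `reason`, `act`) are hypothetical names, and the model is replaced by a scripted stand-in so the control flow can be seen without any API call. A `max_steps` cap is added as an assumption so a confused agent cannot loop forever.

```python
from typing import Callable, Optional

# Hypothetical callables, injected so the loop stays library-agnostic:
#   observe() -> bytes                         current screenshot
#   reason(task, screenshot) -> dict or None   next action, or None when done
#   act(action) -> None                        performs the action


def run_agent_loop(
    task: str,
    observe: Callable[[], bytes],
    reason: Callable[[str, bytes], Optional[dict]],
    act: Callable[[dict], None],
    max_steps: int = 20,
) -> list[dict]:
    """Repeat Observe -> Reason -> Act until the model stops or max_steps is hit."""
    history: list[dict] = []
    for _ in range(max_steps):
        screenshot = observe()             # Environment State
        action = reason(task, screenshot)  # LLM Reasoning
        if action is None:                 # the model reports the task is complete
            break
        act(action)                        # Tool Execution
        history.append(action)             # the next observe() supplies the Feedback
    return history


# Scripted stand-ins for the model and the environment (illustration only).
script = iter([
    {"action": "mouse_move", "coordinate": [512, 40]},
    {"action": "left_click"},
    {"action": "type", "text": "flights to Tokyo"},
    None,
])
performed: list[dict] = []
history = run_agent_loop(
    "Search for flight information",
    observe=lambda: b"fake-png-bytes",
    reason=lambda task, shot: next(script),
    act=performed.append,
)
print(len(history), "actions executed")
```

In a real agent, `reason` would wrap the Messages API call and `act` would drive the sandboxed desktop or browser.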

3. Implementation Guide: Anthropic API and Puppeteer Integration

To implement Computer Use, call the Anthropic API’s Messages endpoint with the computer-use-2024-10-22 beta flag enabled.

Below is a basic implementation sketch using the Python SDK.

3.1. Tool Definition

First, define the “computer operation tools” that Claude will use.

```python
computer_tool = {
    "name": "computer",
    "type": "computer_20241022",
    "display_width_px": 1024,
    "display_height_px": 768,
    "display_number": 1,
}
```

3.2. Sending API Requests

```python
import anthropic

client = anthropic.Anthropic()

response = client.beta.messages.create(
    model="claude-3-5-sonnet-20241022",
    max_tokens=1024,
    tools=[computer_tool],
    messages=[
        {
            "role": "user",
            "content": "Search for 'Anthropic Computer Use' on Google.",
        }
    ],
    betas=["computer-use-2024-10-22"],
)

# Check response (tool use request) from model
print(response.content)
```

In response to this request, Claude returns a tool_use block like the following.

```json
{
  "type": "tool_use",
  "id": "toolu_01...",
  "name": "computer",
  "input": {
    "action": "type",
    "text": "Anthropic Computer Use"
  }
}
```

3.3. Tool Execution and Result Feedback

The developer's code must receive this tool_use block, execute the action in the actual environment (e.g., a browser launched with Puppeteer), and return the result (a new screenshot) to Claude as a tool_result.
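As a rough sketch of that step, the code below dispatches the `input` of a `tool_use` block to an environment and wraps the resulting screenshot as a `tool_result` message. The `Backend` interface and the function names are hypothetical, invented here for illustration. Only a small subset of actions is handled, and the sketch assumes that in the `computer_20241022` tool a click acts at the current cursor position (so the model moves the mouse first).

```python
import base64
from typing import Protocol


class Backend(Protocol):
    """Hypothetical interface to the sandboxed desktop or browser."""

    def move_mouse(self, x: int, y: int) -> None: ...
    def click(self) -> None: ...
    def type_text(self, text: str) -> None: ...
    def press_key(self, key: str) -> None: ...
    def screenshot_png(self) -> bytes: ...


def execute_action(tool_input: dict, backend: Backend) -> bytes:
    """Perform one action from a tool_use block, then return a fresh screenshot."""
    action = tool_input["action"]
    if action == "mouse_move":
        x, y = tool_input["coordinate"]
        backend.move_mouse(x, y)
    elif action == "left_click":
        backend.click()
    elif action == "type":
        backend.type_text(tool_input["text"])
    elif action == "key":
        backend.press_key(tool_input["text"])
    elif action != "screenshot":
        # Only a subset of actions is covered in this sketch.
        raise ValueError(f"unsupported action: {action}")
    return backend.screenshot_png()


def build_tool_result(tool_use_id: str, png_bytes: bytes) -> dict:
    """Wrap a screenshot as the user message that answers a tool_use block."""
    return {
        "role": "user",
        "content": [{
            "type": "tool_result",
            "tool_use_id": tool_use_id,
            "content": [{
                "type": "image",
                "source": {
                    "type": "base64",
                    "media_type": "image/png",
                    "data": base64.b64encode(png_bytes).decode("ascii"),
                },
            }],
        }],
    }
```

The returned message would be appended to `messages` after the assistant turn that contained the `tool_use` block, and the request sent again with the same tools and beta flag.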

TIP: Anthropic provides a reference implementation that runs an Ubuntu environment in a Docker container. Trying it first is the easiest way to get started.

4. Security and Risk Management

Computer Use is powerful but also carries significant risks. For example, the AI could send emails or delete cloud resources on its own.

WARNING: Execution in a sandbox environment is mandatory. Running Computer Use directly on an internet-connected host machine is very dangerous. Always run it in an isolated environment such as a Docker container or a virtual machine (VM).

Recommended Security Measures

- Human Approval (Human-in-the-loop): Always include a process to ask for human permission before important operations (purchase, deletion, sending).

- Minimize Permissions: Grant only minimum necessary permissions to accounts that agents operate.

- Domain Restrictions: For browser operations, restrict accessible domains with whitelists.
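Two of these measures, domain restriction and human approval, can be sketched as a guard that runs before each step. Everything here is an assumption made for illustration: the function names, the example domains, and the keyword-based check for "important operations" are hypothetical, and a keyword match is far too crude for production use.

```python
from urllib.parse import urlparse

# Hypothetical policy values, chosen only for illustration.
ALLOWED_DOMAINS = {"example.com", "docs.example.com"}
SENSITIVE_KEYWORDS = ("purchase", "delete", "send")


def is_domain_allowed(url: str, allowed: set[str] = ALLOWED_DOMAINS) -> bool:
    """Allow a URL only if its host is a listed domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed)


def needs_human_approval(step_description: str) -> bool:
    """Flag steps whose description mentions a sensitive operation."""
    text = step_description.lower()
    return any(word in text for word in SENSITIVE_KEYWORDS)


def guard_step(url: str, step_description: str, approve=input) -> bool:
    """Return True only if the step passes the domain check and any required approval."""
    if not is_domain_allowed(url):
        return False
    if needs_human_approval(step_description):
        answer = approve(f"Allow '{step_description}' on {url}? [y/N] ")
        return answer.strip().lower() == "y"
    return True
```

Passing `approve` as a parameter keeps the approval channel replaceable: the default `input` prompts on the console, while a chat or ticketing integration could be swapped in without changing the guard logic.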

5. Business Applications and Future

Computer Use is expected to be utilized in the following operations:

- Legacy System Migration: Data extraction and input automation from old business systems without APIs.

- QA Test Automation: Flexible E2E tests that can adapt to application UI changes.

- Complex Investigation Tasks: Tasks that span multiple websites to collect information and compile reports.

By combining API integration (MCP) and Computer Use (GUI operation), truly autonomous AI agents are becoming a reality.

FAQ

Q1: What is the difference between Computer Use and traditional API integration (MCP, etc.)?

While API integration performs backend system-to-system communication, Computer Use operates by looking at GUI screens as a human does. Its key advantage is that even legacy systems and websites without APIs can become automation targets.

Q2: Are there security risks?

Yes. Because the agent holds very powerful permissions, there are risks of misoperation and misuse. Avoid running it directly on an internet-connected host, and always run it in an isolated environment (sandbox) such as Docker.

Q3: What use cases is it suitable for?

It is suitable for data migration from legacy systems without APIs, investigation of sites with frequently changing UIs, and E2E test automation. However, speed tends to be slower than APIs.

Summary

- Computer Use is a technology where LLMs control GUI applications through vision and operation.

- Enables automation even of systems without APIs, but execution speed and reliability may be inferior to APIs.

- Due to high security risks, execution in sandbox environments and Human-in-the-loop are essential.

Author’s Perspective: The Future This Technology Brings

The main reason I focus on this technology is its immediate effect on productivity in practical work.

Many AI technologies are said to have “future potential,” but when actually implemented, learning costs and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of delivering results from day one of implementation.

Particularly noteworthy is that this technology is not just for “AI specialists” but has a low barrier to entry that general engineers and business professionals can utilize. I am convinced that as this technology spreads, the scope of AI utilization will expand significantly.

I have introduced this technology in several projects myself and saw an average 40% improvement in development efficiency. I intend to keep following developments in this field and sharing practical insights.
