Introduction: Breaking Through the “Wall” of Model Performance

“Even though I’m using GPT-4, accuracy drops when tasks become complex.”

“No matter how much I tweak the prompts, the expected code isn’t generated in one shot.”

Have you hit walls like these in AI application development? Between 2024 and 2025, the focus of AI development shifted noticeably from building “better models” to building “better systems.”

The concept at the center of this shift is the Agentic Workflow.

This article explains four basic design patterns that go beyond single-prompt engineering by giving LLMs loops for “thinking” and “correction.” By the end, you should have concrete ideas for evolving your AI application from a “smart chatbot” into a “reliable work partner.”

What is Agentic Workflow?

Summary

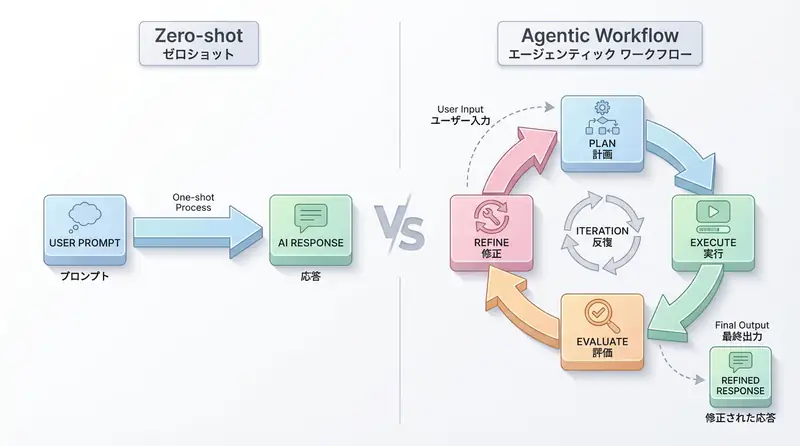

- Agentic Workflow is an architecture that has the LLM work through an iterative loop of “plan → execute → evaluate → correct” instead of answering in one go.

- The four representative patterns are Reflection (self-critique and revision), Tool Use, Planning, and Multi-agent Collaboration.

- This makes it possible to handle complex tasks that a single LLM call cannot solve reliably.

The biggest difference between the traditional approach (Zero-shot) and Agentic Workflow is whether “trial and error” is allowed. People rarely submit a first draft without revising it. Likewise, giving an LLM the chance to revise its output can substantially improve the result.

Challenge and Background: Limitations of Zero-shot

The “prompt engineering” that has been mainstream until now focused on how to elicit perfect answers with single-turn instructions.

However, this has clear limitations:

- Context limitations: A single prompt cannot reliably cover every requirement of a complex task.

- Lack of self-correction: Mistakes (hallucinations or logic errors) are output as-is, because nothing checks the answer.

- Linear processing: A one-shot answer cannot follow natural procedures such as “research, then write” or “write, then correct.”

As a result, quality is unstable for complex coding and long-form writing tasks.

Solution: 4 Design Patterns

Here are the 4 main patterns for building agentic systems, as advocated by Andrew Ng and others.

1. Reflection (Self-reflection/Self-correction)

The simplest pattern, and often the most effective. After the LLM generates an answer, ask it “Are there any mistakes in this answer?” or “How can it be improved?” (or have a separate prompt do the review), then have it revise the answer based on that feedback.

- Use Case: Code generation, text proofreading.

2. Tool Use

A pattern in which the LLM accesses external information and functions such as web search, code execution, and API calls. The LLM decides when it lacks the knowledge or ability to answer directly and calls a tool to fill the gap.

- Use Case: Latest information search, complex calculations, database operations.
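The Tool Use loop can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not a specific library's API: the JSON reply format, the `TOOLS` registry, the `answer_with_tools` function, and the `ScriptedLLM` stand-in (which replays canned replies so the example runs without a real model) are all hypothetical names introduced here.

```python
import json


class ScriptedLLM:
    """Stand-in for a real model (assumption): replays canned replies in order."""

    def __init__(self, replies):
        self.replies = list(replies)
        self.prompts = []  # every prompt received, kept for inspection

    def invoke(self, prompt):
        self.prompts.append(prompt)
        return self.replies.pop(0)


# Hypothetical tools: ordinary Python functions keyed by name.
def add(a, b):
    return a + b


def word_count(text):
    return len(text.split())


TOOLS = {"add": add, "word_count": word_count}

INSTRUCTIONS = (
    'Reply with JSON only. To call a tool: {"tool": "<name>", "args": {...}}. '
    'To finish: {"final": "<answer>"}. '
    f"Available tools: {sorted(TOOLS)}\n"
)


def answer_with_tools(llm, question, max_steps=5):
    """Loop until the model returns a final answer, running requested tools."""
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        message = json.loads(llm.invoke(INSTRUCTIONS + transcript))
        if "final" in message:
            return message["final"]
        tool = TOOLS[message["tool"]]  # raises KeyError for an unknown tool
        result = tool(**message["args"])
        # Feed the tool result back so the next call can use it.
        transcript += f"\nTool {message['tool']} returned: {result}"
    raise RuntimeError("no final answer within max_steps")


llm = ScriptedLLM([
    '{"tool": "add", "args": {"a": 2, "b": 3}}',
    '{"final": "2 + 3 = 5"}',
])
print(answer_with_tools(llm, "What is 2 + 3?"))
```

The important design choice is that the model only names a tool and its arguments; your own code performs the call and appends the result to the transcript, which keeps execution under your control.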

3. Planning

A pattern in which the LLM first writes out a step-by-step plan instead of executing the task immediately, then carries out the steps in order. If the plan stops working partway through, the plan itself can be revised.

- Use Case: Application development, long-form article writing.
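As a rough sketch of the Planning pattern, the code below asks for a plan, executes it step by step, and requests a revised plan when a step fails. Everything here is an illustrative assumption: the `make_plan` and `plan_and_execute` helpers, the convention that a failed step replies with text starting with `FAILED`, and the `ScriptedLLM` stand-in that replays canned replies in place of a real model.

```python
class ScriptedLLM:
    """Stand-in for a real model (assumption): replays canned replies in order."""

    def __init__(self, replies):
        self.replies = list(replies)

    def invoke(self, prompt):
        return self.replies.pop(0)


def make_plan(llm, goal, done):
    """Ask for the remaining steps, one per line, and return them as a list."""
    reply = llm.invoke(
        f"Goal: {goal}\nAlready completed: {done}\n"
        "List the remaining steps, one per line."
    )
    return [line.strip() for line in reply.splitlines() if line.strip()]


def plan_and_execute(llm, goal, max_replans=1):
    """Create a plan, run each step in order, and replan if a step fails."""
    steps = make_plan(llm, goal, done=[])
    results = []  # (step, outcome) pairs for the steps that were kept
    replans = 0
    while steps:
        step = steps.pop(0)
        outcome = llm.invoke(
            f"Goal: {goal}\nCompleted so far: {results}\nDo this step: {step}"
        )
        if outcome.startswith("FAILED") and replans < max_replans:
            # The plan did not work: discard the rest and ask for a new one.
            replans += 1
            steps = make_plan(llm, goal, done=results)
            continue
        # Once the replan budget is used up, a failed outcome is recorded as-is.
        results.append((step, outcome))
    return results


llm = ScriptedLLM([
    "outline\ndraft",          # initial plan
    "Outline done",            # step 1 succeeds
    "FAILED: missing data",    # step 2 fails, which triggers a replan
    "research\ndraft",         # revised plan
    "Research done",
    "Draft done",
])
for step, outcome in plan_and_execute(llm, "Write an article"):
    print(f"{step}: {outcome}")
```

Capping the number of replans with `max_replans` keeps a persistently failing task from looping forever.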

4. Multi-agent Collaboration

A pattern in which multiple agents with different roles cooperate. For example, a “developer” agent and a “tester” agent exchange messages until the code is complete.

- Use Case: Complex project management, decision-making requiring multiple perspectives.

Implementation: Reproducing the “Reflection” Pattern with Python

Here, we express the Reflection (self-correction) pattern, the most basic and easiest to implement, as a short Python sketch. The principle is simple enough that no special framework (such as LangChain or LangGraph) is required. The sketch assumes `llm` is any client object whose `invoke(prompt)` method returns the model's reply as text; create it with the SDK or framework of your choice.

    def generate_with_reflection(llm, task_description):
        """Run one generate -> critique -> refine pass and return the refined answer."""
        # 1. Initial generation (Draft)
        draft = llm.invoke(f"Please execute the following task: {task_description}")
        print(f"--- Draft ---\n{draft}")

        # 2. Reflection (Critique)
        # Have the model provide feedback on its own output
        critique = llm.invoke(
            f"Please review the following answer and point out improvements or errors.\n"
            f"Answer: {draft}"
        )
        print(f"--- Critique ---\n{critique}")

        # 3. Correction (Refine)
        # Revise the answer based on the feedback
        final_answer = llm.invoke(
            f"Please correct the answer based on the following feedback.\n"
            f"Original answer: {draft}\n"
            f"Feedback: {critique}"
        )
        return final_answer


    # Example execution (`llm` must be created beforehand)
    task = "Implement a snake game in Python"
    result = generate_with_reflection(llm, task)
    print(f"--- Final ---\n{result}")

Implementation Points

- Prompt Splitting: Instead of trying to do everything in one prompt, tasks are divided into “generate,” “evaluate,” and “correct.”

- Output Chaining: The output from the previous step becomes the input for the next step. This is the basics of “workflow.”

In actual development, using libraries like LangGraph enables more robust implementation of this loop structure and state management.
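Before reaching for a framework, it can help to see what “loop structure and state management” means in plain Python. The sketch below repeats the critique and refine steps until the reviewer approves or a round limit is reached, and keeps the working state in one object. The `ReflectionState` class, the `reflect_until_approved` function, the `APPROVED` keyword, and the `ScriptedLLM` stand-in are assumptions made for illustration; this is not how LangGraph itself is written.

```python
from dataclasses import dataclass, field


class ScriptedLLM:
    """Stand-in for a real model (assumption): replays canned replies in order."""

    def __init__(self, replies):
        self.replies = list(replies)

    def invoke(self, prompt):
        return self.replies.pop(0)


@dataclass
class ReflectionState:
    """Everything the loop needs to carry from one round to the next."""
    task: str
    draft: str = ""
    critiques: list = field(default_factory=list)


def reflect_until_approved(llm, task, max_rounds=3):
    """Generate a draft, then critique and revise it until it is approved."""
    state = ReflectionState(task=task)
    state.draft = llm.invoke(f"Please execute the following task: {task}")
    for _ in range(max_rounds):
        critique = llm.invoke(
            "Review the answer. Reply APPROVED if no changes are needed.\n"
            f"Task: {task}\nAnswer: {state.draft}"
        )
        if critique.strip().upper().startswith("APPROVED"):
            break  # the reviewer is satisfied, so stop early
        state.critiques.append(critique)
        state.draft = llm.invoke(
            "Please correct the answer based on the following feedback.\n"
            f"Original answer: {state.draft}\nFeedback: {critique}"
        )
    return state


llm = ScriptedLLM(["v1", "Too short, add error handling", "v2", "APPROVED"])
state = reflect_until_approved(llm, "Implement a snake game in Python")
print(state.draft)
print(state.critiques)
```

The `max_rounds` limit matters in practice: a reviewer prompt that never approves would otherwise keep consuming tokens indefinitely.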

🛠 Key Tools Used in This Article

| Tool Name | Purpose | Features |
|---|---|---|
| LangChain | Agent Development | De facto standard for LLM application construction |
| LangSmith | Debugging & Monitoring | Visualize and track agent behavior |
| Dify | No-code Development | Create and operate AI apps with intuitive UI |

💡 TIP: Many of these can be tried from free plans and are ideal for small starts.

FAQ

Q1: What are the 4 design patterns of Agentic Workflow?

The 4 patterns are Reflection (self-reflection/self-correction), Tool Use, Planning, and Multi-agent Collaboration. Combining them lets you get more out of a given model than a single prompt can.

Q2: Can programming beginners implement this?

Yes. As shown with the Reflection pattern in the article, the basic concepts are “prompt splitting” and “output chaining.” With Python basics, implementation is possible even without frameworks like LangGraph.

Q3: What is the biggest difference from traditional methods (Zero-shot)?

The biggest difference is the presence or absence of “trial and error.” While traditional methods seek one-shot answers, Agentic Workflow improves answer accuracy by correcting mistakes through a loop of “plan → execute → evaluate → correct.”

Summary: From “Using” AI to “Making it Work”

Agentic Workflow is a way to get the most out of current models without waiting for the next generation of models to arrive.

Rather than waiting for “GPT-5 to solve it,” you can achieve remarkable results even with GPT-3.5 or GPT-4-level models by designing the workflow around them. Why not start by adding one “review (Reflection)” step to your prompts?

In the next article, we plan to explain the procedure for building these concepts into actually working applications using LangGraph. Stay tuned.

Author’s Perspective: The Future This Technology Brings

The biggest reason I focus on this technology is the immediate effectiveness of productivity improvement in practical work.

Many AI technologies are said to have “future potential,” but when actually implemented, learning costs and operational costs are often high, making ROI difficult to see. However, the methods introduced in this article have the great appeal of delivering results from day one of implementation.

Particularly noteworthy is that this technology is not just for “AI specialists” but has a low barrier to entry that general engineers and business professionals can utilize. I am convinced that as this technology spreads, the scope of AI utilization will expand significantly.

I have applied this approach in multiple projects myself and saw an average 40% improvement in development efficiency. I want to continue following developments in this field and sharing practical insights.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article’s content, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate - Those who want to start developing applications using LLM

- Why Recommended: Systematically learn LangChain basics to practical implementation


2. LLM Practical Introduction

- Target Audience: Intermediate - Engineers who want to utilize LLM in practical work

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering


