Limitations of Traditional RAG and the Emergence of Agentic RAG

“Why can’t RAG answer complex questions?”

Traditional RAG (Retrieval-Augmented Generation) has the following limitations:

- Simple vector search: Document retrieval based only on semantic similarity

- Static queries: No re-search even if the initial results are insufficient

- Single information source: Cannot span multiple databases and APIs

- Lack of context understanding: Does not consider relationships between related documents

In 2025, Agentic RAG is attracting attention as a way to address these issues.

TIP Core Value of Agentic RAG

- AI agents autonomously explore information sources

- Dynamic query expansion iteratively improves search accuracy

- Integration of multiple information sources (database + Web API + knowledge graph)

- Fusion of reasoning and search to handle complex questions

In this article, we explain how Agentic RAG works, how it differs from traditional RAG, and how to implement it in practice.

What is Agentic RAG?

Definition and Background

Agentic RAG is an evolution of RAG in which AI agents autonomously plan a search strategy, then explore and integrate multiple information sources instead of querying a single index.

Traditional RAG:

    Question → Vector Search → Document Retrieval → LLM Generation → Answer

Agentic RAG:

    Question → Agent Decision
        ↓
    Query Expansion & Information Source Selection
        ↓
    Parallel Search (Database + Web + Knowledge Graph)
        ↓
    Information Integration & Reasoning
        ↓
    Re-search if insufficient (iterative)
        ↓
    High-precision Answer Generation

Comparison with Traditional RAG

| Item | Traditional RAG | Agentic RAG |
|---|---|---|
| Search Strategy | Fixed (vector search) | Dynamic (agent decides) |
| Information Sources | Single database | Multiple sources (DB + Web + API) |
| Queries | Static | Dynamic expansion & reframing |
| Iterative Search | None | Yes (re-acquire insufficient information) |
| Reasoning | LLM only | Agent + LLM |
| Accuracy | Medium | High |

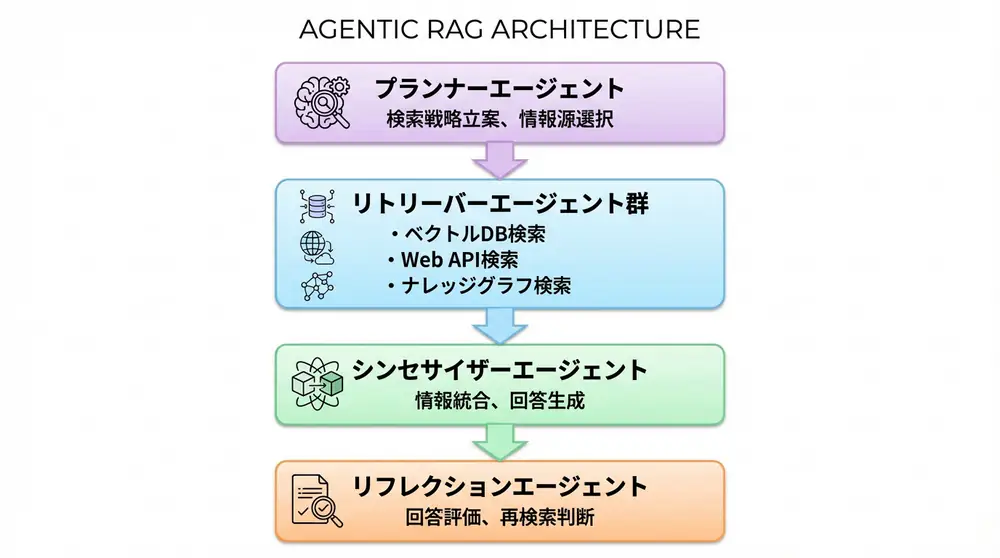
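The control-flow difference in the table can be made concrete with a small sketch. This is plain Python with no framework, and everything in it is an assumption for illustration: the `Source` type, the `plan` and `is_sufficient` callbacks (stand-ins for what an LLM-backed planner and reflection step would do), and the `max_rounds` cap.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in: a "source" maps a query string to a list of text snippets.
Source = Callable[[str], List[str]]


def single_pass_retrieve(question: str, vector_search: Source) -> List[str]:
    """Traditional RAG retrieval: one query, one source, no follow-up."""
    return vector_search(question)


def iterative_retrieve(
    question: str,
    sources: Dict[str, Source],
    plan: Callable[[str, List[str]], List[str]],
    is_sufficient: Callable[[str, List[str]], bool],
    max_rounds: int = 3,
) -> List[str]:
    """Agentic-style retrieval: plan queries, search every source, re-plan if needed."""
    collected: List[str] = []
    for _ in range(max_rounds):
        # The planner sees what has been collected so far and proposes new queries.
        for query in plan(question, collected):
            for search in sources.values():
                for snippet in search(query):
                    if snippet not in collected:  # drop exact duplicates
                        collected.append(snippet)
        # The reflection step decides whether another round is needed.
        if is_sufficient(question, collected):
            break
    return collected
```

The only structural differences are the outer loop and the two callbacks: the single-pass version has nowhere to act on a judgment that the results are insufficient.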

Agentic RAG Architecture

Component Configuration

- Planner Agent: Establishes search strategy

- Retriever Agent: Retrieves documents from information sources

- Synthesizer Agent: Integrates information and generates answers

- Reflection Agent: Evaluates answer quality, re-searches if necessary

Implementation Example: Agentic RAG with LangGraph

Step 1: Agent Definition

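    from typing import List, TypedDict

    from langgraph.graph import StateGraph, END

    # Assumed to exist elsewhere in the application (not defined in this article):
    #   llm             - a LangChain LLM or chat model
    #   vector_store    - a LangChain vector store
    #   web_search_tool - callable returning a list of text snippets
    #   knowledge_graph - object whose query() returns a list of text snippets


    class AgenticRAGState(TypedDict):
        question: str
        search_queries: List[str]
        documents: List[str]
        answer: str
        needs_more_info: bool


    def _text(response) -> str:
        # Chat models return a message object with .content; plain LLMs return str
        return getattr(response, "content", response)


    # Planner
    def planner_node(state: AgenticRAGState):
        # Analyze question and generate search queries
        response = llm.invoke(f"""
    Analyze the question and generate 3 necessary search queries, one per line:
    Question: {state['question']}
    Search Queries:
    """)
        # Drop blank lines so empty queries are never sent to the retrievers
        queries = [q.strip() for q in _text(response).split("\n") if q.strip()]
        return {"search_queries": queries}


    # Retriever
    def retriever_node(state: AgenticRAGState):
        documents = []
        for query in state["search_queries"]:
            # Vector search (returns Document objects; keep only the text)
            vector_docs = [
                doc.page_content
                for doc in vector_store.similarity_search(query, k=3)
            ]
            # Web search
            web_docs = web_search_tool(query)
            # Knowledge graph search
            kg_docs = knowledge_graph.query(query)
            documents.extend(vector_docs + web_docs + kg_docs)
        return {"documents": documents}


    # Synthesizer
    def synthesizer_node(state: AgenticRAGState):
        context = "\n\n".join(state["documents"])
        answer = llm.invoke(f"""
    Please answer the question referring to the following documents:
    Question: {state['question']}
    Reference Documents:
    {context}
    Answer:
    """)
        return {"answer": _text(answer)}


    # Reflection
    def reflection_node(state: AgenticRAGState):
        evaluation = llm.invoke(f"""
    Question: {state['question']}
    Answer: {state['answer']}
    Does this answer sufficiently address the question? (yes/no)
    """)
        # Check the leading word only; a substring test for "no" would also
        # match words such as "not" or "know" inside a "yes" answer
        needs_more = _text(evaluation).strip().lower().startswith("no")
        return {"needs_more_info": needs_more}


    # Conditional branching
    def should_continue(state: AgenticRAGState):
        if state.get("needs_more_info", False):
            return "planner"  # Re-search
        return "end"

Step 2: Graph Construction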

    # Workflow definition
    workflow = StateGraph(AgenticRAGState)

    workflow.add_node("planner", planner_node)
    workflow.add_node("retriever", retriever_node)
    workflow.add_node("synthesizer", synthesizer_node)
    workflow.add_node("reflection", reflection_node)

    # Flow definition
    workflow.add_edge("planner", "retriever")
    workflow.add_edge("retriever", "synthesizer")
    workflow.add_edge("synthesizer", "reflection")
    workflow.add_conditional_edges(
        "reflection",
        should_continue,
        {"planner": "planner", "end": END}
    )

    workflow.set_entry_point("planner")
    app = workflow.compile()

Step 3: Execution

    # Handle complex questions
    result = app.invoke({
        "question": "What paradigm shifts have occurred in the AI industry from 2023 to 2025? Also, explain the impact on business with specific company examples."
    })

    print(result["answer"])

Combination with GraphRAG

Hybrid Approach

Combining Agentic RAG and GraphRAG enables even higher-precision information retrieval.
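To make the "entity relationships" half of this combination concrete, here is a minimal in-memory sketch of a depth-limited, relationship-filtered graph walk. It is an illustration under stated assumptions, not the API of any particular graph database: the `Graph` type and the `traverse` function are hypothetical, and a real deployment would typically delegate this to the graph store's own query language.

```python
from collections import deque
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical in-memory graph: entity -> list of (relationship, neighbor) edges.
Graph = Dict[str, List[Tuple[str, str]]]


def traverse(
    graph: Graph,
    start_entities: Iterable[str],
    max_depth: int = 2,
    relationship_types: Iterable[str] = ("RELATED_TO", "CAUSED_BY"),
) -> List[Tuple[str, str, str]]:
    """Breadth-first walk returning (source, relationship, target) triples."""
    allowed = set(relationship_types)
    starts = list(dict.fromkeys(start_entities))  # dedupe while keeping order
    visited: Set[str] = set(starts)
    queue = deque((entity, 0) for entity in starts)
    triples: List[Tuple[str, str, str]] = []

    while queue:
        entity, depth = queue.popleft()
        if depth >= max_depth:
            continue  # do not expand nodes at the depth limit
        for relationship, neighbor in graph.get(entity, []):
            if relationship not in allowed:
                continue  # skip edge types the caller did not ask for
            triples.append((entity, relationship, neighbor))
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, depth + 1))
    return triples
```

The `max_depth` and `relationship_types` parameters play the same role as the arguments passed to `knowledge_graph.traverse` in this section: they keep the walk from pulling in the whole graph, which matters because every returned triple ends up in the LLM context.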

    # extract_entities, knowledge_graph.traverse, web_search_tool and
    # score_documents are application-specific helpers assumed to exist;
    # score_documents is assumed to return documents sorted best-first.
    def hybrid_retriever_node(state: AgenticRAGState):
        documents = []
        for query in state["search_queries"]:
            # 1. Vector RAG (semantic similarity)
            vector_docs = vector_store.similarity_search(query, k=5)

            # 2. GraphRAG (entity relationships)
            entities = extract_entities(query)
            graph_docs = knowledge_graph.traverse(
                entities,
                max_depth=2,
                relationship_types=["RELATED_TO", "CAUSED_BY"]
            )

            # 3. Web search (latest information)
            web_docs = web_search_tool(query, time_range="last_month")

            # Score by importance and keep the top 10 per query
            scored_docs = score_documents(
                vector_docs + graph_docs + web_docs,
                query
            )
            documents.extend(scored_docs[:10])
        return {"documents": documents}

Practical Use Cases

Use Case 1: Complex Corporate Analysis

    query = """
    Analyze the impact of Tesla's 2024 battery technology innovation
    on the electric vehicle market as a whole,
    along with the response strategies of competitors (BYD, Volkswagen).
    """

    # Agentic RAG search strategy:
    # 1. Tesla's battery technology (tech DB + papers)
    # 2. 2024 EV market trends (market reports + news)
    # 3. BYD/VW strategies (company announcements + analyst analysis)
    # 4. Technology-market causal relationships (knowledge graph)

    # agentic_rag is the compiled graph (named app in Step 2)
    result = agentic_rag.invoke({"question": query})

Use Case 2: Multi-stage Reasoning Tasks

    query = """
    Evaluate the potential contribution of AI technology to climate change
    from the following perspectives:
    1. Energy efficiency
    2. Environmental monitoring
    3. Carbon credit trading optimization

    For each perspective, include actual implementation examples and
    quantitative impact (CO2 reduction amount, etc.).
    """

    # Agentic RAG operation:
    # Step 1: Decompose question into 3 sub-queries
    # Step 2: Parallel search for each sub-query
    # Step 3: Example search (company DB + papers)
    # Step 4: Quantitative data search (statistics DB + reports)
    # Step 5: Information integration and answer generation
    # Step 6: Reflection (re-search if insufficient information)

    result = agentic_rag.invoke({"question": query})

Benefits and Drawbacks of Agentic RAG

Benefits

- High Accuracy: 30-50% improvement in answer accuracy for complex questions compared to traditional RAG

- Flexibility: Dynamically adjust search strategy according to questions

- Comprehensiveness: Cross-search multiple information sources

- Reasoning Capability: Infer relationships between information, not just retrieval

Drawbacks & Considerations

- Cost: Increased LLM calls (2-3x traditional RAG)

- Latency: Longer response time due to iterative search (5-15 seconds)

- Complexity: Complex implementation and debugging

- Framework dependency: Reliance on agent frameworks (LangGraph, etc.)

WARNING Importance of Cost Optimization

Agentic RAG is high-precision but also expensive. Implement the following:

- Cache utilization: Cache results for same queries

- Lightweight LLM: Use smaller models (GPT-3.5) for planning

- Parallelization: Execute multiple searches in parallel to reduce latency
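Two of the measures above, caching and parallelization, need nothing beyond the standard library. The following is a minimal sketch, assuming two hypothetical search backends (`vector_search` and `web_search`, stubbed here) and an in-process cache. A production system would more likely use a shared cache with expiry, since web results go stale.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache
from typing import Callable, Dict, List, Tuple


# Hypothetical backends; real ones would call a vector DB, a web API, etc.
def vector_search(query: str) -> List[str]:
    return [f"vector hit for {query}"]


def web_search(query: str) -> List[str]:
    return [f"web hit for {query}"]


SOURCES: Dict[str, Callable[[str], List[str]]] = {
    "vector": vector_search,
    "web": web_search,
}


@lru_cache(maxsize=1024)
def cached_search(source_name: str, query: str) -> Tuple[str, ...]:
    """Cache results per (source, query); a tuple keeps the cached value immutable."""
    return tuple(SOURCES[source_name](query))


def parallel_search(query: str) -> List[str]:
    """Query every source concurrently and merge the results in source order."""
    with ThreadPoolExecutor(max_workers=len(SOURCES)) as pool:
        futures = [pool.submit(cached_search, name, query) for name in SOURCES]
        results: List[str] = []
        for future in futures:
            results.extend(future.result())
    return results
```

Threads fit here because search calls are I/O-bound. The cache matters most inside the reflection loop, where the planner often re-issues a query it has already tried.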

Future Outlook

2025 Trends

- Standardization of LangGraph: Becoming the de facto standard for Agentic RAG

- Multimodal support: Integration of information sources including images and video

- Cost optimization: Agentic RAG implementation with small models (Phi-3, etc.)

Expected Developments

- Self-improvement: Automatic optimization of search strategy through feedback loops

- Distributed Agentic RAG: Multiple agents searching in parallel

- Real-time updates: Automatic detection of information source changes and re-search

🛠 Key Tools Used in This Article

| Tool Name | Purpose | Features |
|---|---|---|
| Pinecone | Vector Search | Fast and scalable fully managed DB |
| LlamaIndex | Data Connection | Data framework specialized for RAG construction |
| Unstructured | Data Preprocessing | Clean up PDFs and HTML for LLM |

💡 TIP: Many of these offer free plans, which makes them well suited to starting small.

Author’s Verification: The “Infinite Loop” Horror Faced in Practice and Countermeasures

I have built multi-agent RAG systems several times in production work, and the biggest lesson has been the need for countermeasures against runaway reflection (self-reflection) agents.

1. Occurrence of “Infinite Search Loop”

When reflection is implemented with a graph structure such as LangGraph, the agent may keep judging the answer “still insufficient” and consume thousands of yen in API fees before it finally stops.

Solution: It is essential to include search_count in the graph State and enforce a hard limit (for example, a maximum of 3 searches) at the code level.
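A minimal sketch of that hard limit, written as plain functions so it can be tested without a running graph. The names `BoundedRAGState`, `count_search`, and `MAX_SEARCHES` are illustrative assumptions. In the LangGraph example from this article, the counter update would be returned by the retriever node and `should_continue` would replace the routing function of the same name.

```python
from typing import List, TypedDict

MAX_SEARCHES = 3  # hard cap, matching the "maximum 3" guideline above


class BoundedRAGState(TypedDict, total=False):
    question: str
    search_queries: List[str]
    needs_more_info: bool
    search_count: int  # number of retrieval passes completed so far


def count_search(state: BoundedRAGState) -> dict:
    """State update a retriever node would emit after one search pass."""
    return {"search_count": state.get("search_count", 0) + 1}


def should_continue(state: BoundedRAGState) -> str:
    """Route back to the planner only while under the hard limit."""
    if state.get("search_count", 0) >= MAX_SEARCHES:
        return "end"  # stop even if reflection still says "insufficient"
    if state.get("needs_more_info", False):
        return "planner"
    return "end"
```

The limit is checked before the reflection verdict, so a model that never says "yes" cannot keep the loop alive. LangGraph also accepts a `recursion_limit` in the run config that aborts a run after a set number of steps. That works as a backstop, but it raises an error rather than returning the best answer so far, so an explicit counter in the State is still worth having.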

2. Realistic Cost Reduction Results

When using GPT-4o for all nodes, costs were more than 5x traditional RAG. I conducted verification with the following configuration:

- Planner (decomposition): GPT-4o-mini

- Retriever (tool selection): GPT-4o-mini

- Synthesizer (final answer generation): GPT-4o (quality focus only here)

- Reflection (evaluation): GPT-4o-mini

As a result, I reduced costs by approximately 60% while maintaining answer quality. This “purpose-specific model selection” is the key to making Agentic RAG practical.
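The configuration above can be expressed as a simple node-to-model mapping, with a helper to compare its cost against an all-GPT-4o baseline. This is a sketch for estimating savings on your own workload, not a reproduction of the measurement above. Token counts and prices are inputs the caller must supply, and `estimate_cost` and `savings_ratio` are hypothetical helper names.

```python
from typing import Dict, Optional

# Node-to-model assignment from the configuration described above.
NODE_MODELS: Dict[str, str] = {
    "planner": "gpt-4o-mini",
    "retriever": "gpt-4o-mini",
    "synthesizer": "gpt-4o",  # quality focus only here
    "reflection": "gpt-4o-mini",
}


def estimate_cost(
    tokens_per_node: Dict[str, int],
    price_per_1k: Dict[str, float],
    node_models: Optional[Dict[str, str]] = None,
) -> float:
    """Sum tokens * price over all nodes, using each node's assigned model."""
    models = node_models or NODE_MODELS
    return sum(
        tokens / 1000 * price_per_1k[models[node]]
        for node, tokens in tokens_per_node.items()
    )


def savings_ratio(
    tokens_per_node: Dict[str, int],
    price_per_1k: Dict[str, float],
    baseline_model: str = "gpt-4o",
) -> float:
    """Fraction of cost saved versus running every node on the baseline model."""
    baseline = estimate_cost(
        tokens_per_node,
        price_per_1k,
        {node: baseline_model for node in tokens_per_node},
    )
    mixed = estimate_cost(tokens_per_node, price_per_1k)
    return 1 - mixed / baseline
```

The result depends heavily on how tokens are distributed. If the synthesizer consumes most of them, because it receives all retrieved documents, the saving from downgrading the other nodes shrinks accordingly.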

Author’s Perspective: The Future of RAG is Heading Toward “Autonomy”

Traditional RAG was “search assistance,” but Agentic RAG is “investigation automation” itself.

By 2026, it will become normal for agents to autonomously patrol the latest news and market data and place “organized reports” on our desks before humans give instructions.

In this evolution, what will be required of engineers is not “how to choose excellent LLMs” but “how to appropriately guide agents (set guardrails)” - a shift toward orchestration capabilities.

FAQ

Q1: What is the biggest difference between traditional RAG and Agentic RAG?

While traditional RAG performs static searches, Agentic RAG allows AI agents to autonomously establish search strategies and repeatedly search as needed (iterative search). This enables deep and accurate responses even to complex questions.

Q2: Does implementing Agentic RAG require significant costs?

Yes, compared to traditional RAG, the number of LLM calls increases, so costs and response time (latency) tend to increase. Cost optimization such as utilizing caches and using lightweight models for planning is important.

Q3: How is it combined with GraphRAG?

GraphRAG (knowledge graph search) is commonly incorporated as one of the search tools for Agentic RAG. This enables advanced search that understands the “relationships” and “structures” of information that are difficult to find with keyword searches alone.

Summary


- Agentic RAG surpasses traditional RAG through autonomous information retrieval by AI agents

- Dynamic query expansion, multiple information source integration, and iterative search are core functions

- Integration with LangGraph enables practical implementation

- Limiting self-reflection loops and choosing models per node are the most important points in production operation

Agentic RAG is a paradigm shift from “information retrieval” to “intelligent information exploration.” For complex questions, it collects and integrates information from multiple angles like a human researcher, generating high-quality answers.

In 2025, Agentic RAG is likely to become a standard technology in enterprise search, customer support, and research automation.

📚 Recommended Books for Deeper Learning

For those who want to deepen their understanding of this article’s content, here are books I’ve actually read and found useful.

1. Practical Introduction to Chat Systems Using ChatGPT/LangChain

- Target Audience: Beginners to intermediate - Those who want to start developing applications using LLM

- Why Recommended: Systematically learn LangChain basics to practical implementation


2. LLM Practical Introduction

- Target Audience: Intermediate - Engineers who want to utilize LLM in practical work

- Why Recommended: Rich in practical techniques such as fine-tuning, RAG, and prompt engineering


